Text

If he departs from this world, I’ll bring grass and oil to cremate myself along with his body, so that even as we turn to ashes, we will still be together.

Based on a fic by @bluemorningsoup that yall should be reading for a good time.

464 notes


Text

ZZH IG Video Deepfake Detection

I started researching deepfake detection methods after the IG video was published, looking for an approach that can examine a clip objectively and without bias. After reading published deepfake detection papers, I built code based on one of the published detection models. It took me 2 days to generate the first result, without fine-tuning. That result was published on Weibo and banned 6 hours after posting. Based on feedback from other experts, I refined the model and am publishing the second version here, with a detailed explanation of the method.

I retrained the model with raw/uncompressed video and increased the number of training samples. The final output has a lower false negative rate, leading to a more accurate reading.

The algorithm classifies each frame of the video and reports a confidence value. A green box with a 90% value means the model classified that frame as real with 90% confidence. A red box with a 90% value means the model classified that frame as fake with 90% confidence.
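As a rough illustration of the labeling described above, here is a minimal Python sketch. It assumes the detector outputs a single probability that a frame is fake; the names `FrameResult` and `classify_frame`, the 0.5 cutoff, and the RGB color tuples are hypothetical choices for this example, not taken from the actual code behind the post.

```python
from typing import NamedTuple, Tuple


class FrameResult(NamedTuple):
    label: str                       # "real" or "fake"
    confidence: float                # percentage for the chosen label
    box_color: Tuple[int, int, int]  # (R, G, B) color of the face box


GREEN = (0, 255, 0)
RED = (255, 0, 0)


def classify_frame(p_fake: float) -> FrameResult:
    """Turn a per-frame fake probability into a label, confidence and box color.

    Assumption: the frame is labeled "fake" when p_fake is at least 0.5.
    """
    if not 0.0 <= p_fake <= 1.0:
        raise ValueError("p_fake must be between 0 and 1")
    if p_fake >= 0.5:
        return FrameResult("fake", p_fake * 100.0, RED)
    return FrameResult("real", (1.0 - p_fake) * 100.0, GREEN)


result = classify_frame(0.10)
print(result.label, round(result.confidence), result.box_color)
```

With this convention a frame is always reported with the confidence of whichever label won, so the displayed value never drops below 50%.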

I compared the IG video with zzh’s previous real-person interviews. The video showing my results is below; it includes the ZZH IG video first, followed by two confirmed real interview clips of him. The confirmed real-person interviews were included to show what the model outputs when the video is real. After running the videos through the model, the results showed that more fake frames were detected where more facial expression was present.

The chart below shows the detection result, with its confidence value, for each frame of a video. When the algorithm detects a frame as “real”, the confidence value is positive. When it detects a frame as “fake”, the confidence value is negative.

This is the chart of the confidence values detected for the IG video. Note that the results fluctuate with high volatility between -100 and 100.

This is the chart of the confidence values for the first confirmed real interview video used in the video clip. The results stay between 90 and 92 with minor volatility.
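The signed values plotted in these charts can be derived from the per-frame label and confidence. The sketch below shows one way to do that and to summarize how much a clip fluctuates. The function names are hypothetical, using the population standard deviation as a "volatility" measure is an assumption, and the sample numbers are made up for illustration; they are not the values from the charts.

```python
from statistics import pstdev
from typing import Dict, List, Tuple


def signed_confidence(label: str, confidence: float) -> float:
    """Positive confidence for "real" frames, negative for "fake" frames."""
    if label == "real":
        return confidence
    if label == "fake":
        return -confidence
    raise ValueError(f"unknown label: {label!r}")


def summarize(frames: List[Tuple[str, float]]) -> Dict[str, float]:
    """Return the range and spread of signed confidence over a clip."""
    values = [signed_confidence(label, conf) for label, conf in frames]
    return {"min": min(values), "max": max(values), "spread": pstdev(values)}


# Illustrative values only, not the results shown in the charts.
steady = [("real", 90.0), ("real", 91.0), ("real", 92.0)]
volatile = [("real", 95.0), ("fake", 98.0), ("real", 60.0), ("fake", 85.0)]

print(summarize(steady))
print(summarize(volatile))
```

A clip whose frames flip between labels produces values on both sides of zero and a large spread, while a consistently "real" clip stays in a narrow positive band.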

For comparison, I also ran the model on two known deepfake videos. The results are shown in a separate post.

Explanation of the Model Used

The model used in this simulation is called “Xception”. The paper describing the model can be found here: https://arxiv.org/abs/1901.08971

The model was trained on FaceForensics++ data, which was collected by the Visual Computing Group. I downloaded 800 raw videos (200 real + 600 fake) covering 3 state-of-the-art video manipulation techniques: Deepfakes, FaceSwap and Face2Face.

The data are divided into training, testing, and cross-validation groups. I then took 101 face images from each video and pre-processed every image with a face tracker algorithm that detects the pixels of the face area. From these labeled videos, the AI model learns how to distinguish a manipulated video from a real one. It is similar to a baby learning the difference between an apple and an orange. The model then classifies each frame of a video as fake or real, along with a confidence value.
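The data preparation steps above can be sketched roughly as follows. The post does not state the split ratios or how the 101 frames were chosen, so the 70/15/15 split, the evenly spaced sampling, and the function names `split_videos` and `sample_frame_indices` are all assumptions made for illustration. Face tracking and cropping are left out.

```python
import random
from typing import Dict, List


def split_videos(video_ids: List[str], seed: int = 0) -> Dict[str, List[str]]:
    """Shuffle whole videos and split them 70/15/15 (assumed ratios)."""
    ids = list(video_ids)
    random.Random(seed).shuffle(ids)
    n_train = len(ids) * 70 // 100
    n_val = len(ids) * 15 // 100
    return {
        "train": ids[:n_train],
        "val": ids[n_train:n_train + n_val],
        "test": ids[n_train + n_val:],
    }


def sample_frame_indices(total_frames: int, n: int = 101) -> List[int]:
    """Pick n evenly spaced frame indices (assumed sampling strategy)."""
    if n < 2:
        raise ValueError("n must be at least 2")
    if total_frames <= n:
        # Short clip: use every frame that exists.
        return list(range(total_frames))
    return [i * (total_frames - 1) // (n - 1) for i in range(n)]


splits = split_videos([f"video_{i}" for i in range(800)])
indices = sample_frame_indices(300)
print(len(splits["train"]), len(splits["val"]), len(splits["test"]), len(indices))
```

Splitting by whole video rather than by frame keeps frames from the same clip out of both the training and the testing group, which would otherwise make the test results look better than they are.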

At present, deepfake and deepfake detection technologies are progressing simultaneously. A good model can detect a modified video made by a less-trained or outdated deepfake model, but a better deepfake model can also fool a well-trained detection model. No technology is perfect, and it is possible for a very good modified video to escape detection given current deepfake detection technology.

260 notes


Text

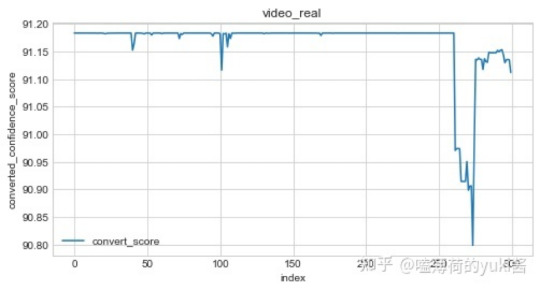

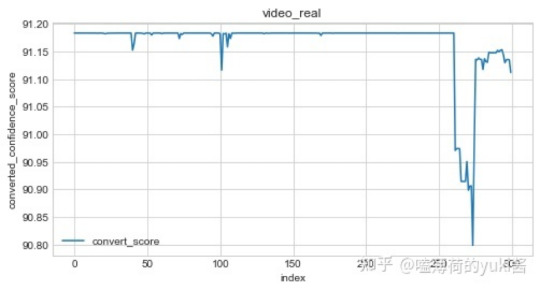


Text

Another version of a water army: The fake following of @JusticeForZhangZheHan

[twitter thread summarizing this essay, please retweet it if you agree]

Yesterday, two accounts on Twitter posted tweets about Zhang Sanjian that got a fair amount of attention for how they prioritized Zhang Sanjian over Zhang Zhehan. “Zhehan I love you. But lately i love sanjian more …” These tweets came across as obviously inflammatory, so I decided to check something: were these real people? A very quick check revealed that they were not. Both accounts had been created years ago, yet they had no posts until the last few months, when they suddenly started tweeting about almost nothing but Zhang Zhehan.

Those who have been following developments in Zhang Zhehan’s case on Twitter are probably familiar with the user JusticeForZhangZheHan (nicknamed, and hereafter referred to as, Justina). This account, which was created in August yet has no remaining tweets from earlier than February 14th, has become a driving force in the English-speaking community for supporting Zhang Sanjian and directing hate and false accusations at Gong Jun, including unfounded claims that he was the mastermind behind 813. Despite her name, she does very little to actually seek justice, instead persistently harassing larger accounts that discuss information about his case. This account boasts 773 followers at the time of writing.

The question is, how many of these followers are actual people who believe what she’s saying? How can we tell?


84 notes


Photo

𝙄 𝙠𝙣𝙤𝙬 𝙬𝙝𝙤𝙨𝙚 𝙡𝙤𝙫𝙚 𝙬𝙤𝙪𝙡𝙙 𝙛𝙤𝙡𝙡𝙤𝙬 𝙢𝙚 𝙨𝙩𝙞𝙡𝙡

For the @wohdaily Summer Event Day 23: Mirror

617 notes


Text

[ENG SUB] Saezuru Tori wa Habatakanai: The Clouds Gather

Title: Saezuru Tori wa Habatakanai: The Clouds Gather

This is the first of 3 movie adaptations for the Saezuru manga.

Manga Plot: Yashiro is the young leader of Shinseikai and the president of the Shinseikai Enterprise, but like so many powerful men, he leads a double life as a deviant and a masochist. Doumeki Chikara comes to work as a bodyguard for him and, although Yashiro had decided that he would never lay a hand on his own men, he finds there’s something about Doumeki that he can’t resist. Yashiro makes advances toward Doumeki, but Doumeki has mysterious reasons for denying. Yashiro, who abuses his power just to abuse himself, and Doumeki, who faithfully obeys his every command, begin the tumultuous affair of two men with songs in their hearts and no wings to fly. (From: Juné)

In collaboration with Aarinfantasy~ Thank you!^^

—-

Stream links: (more to be added on our website)

Aarinfantasy || OK.RU

—-

Download links:

Mediafire || MEGA || Dropbox

3K notes


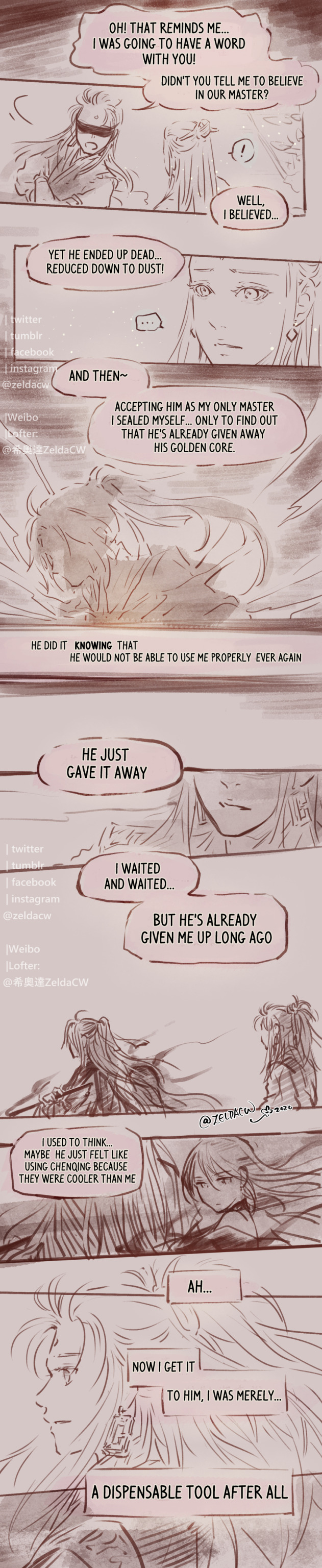

Photo

Silent Promise 「無聲的承諾」

MXTX novel MDZS Sword & instrument spirit character design & fancomic WangQingSuiChen (Unruffled by Emotion) by Zeldacw.

*While ‘wang-qing’ (忘情) could mean ’(un)ruffled by emotion’, ‘sui-chen’ (隨塵) translates to ‘go with the flow of dust in the wind’ and it means a soft, delicate but continuous yearning.

Previous:

[SuiBian’s Waiting 1 / 2]

[ChenQing’s Release]

[WangJi’s Influence]

[BiChen’s Sincerity]

[Last Faith.Unchanged]

[Last Faith.Surrender 1 / 2 / 3 ]

[Lost Heart. Awaken]

[Lost Heart. Reunion]

[Remembering . SuiChen 1 / 2 ]

[Willful End ]

[Lasting Shelter]

[Deception of Reality]

…to be cont.

*a fancomic of MXTX’s novel Mo Dao Zu Shi.

*中文版在我的微博 & Lofter @ 希奧達ZeldaCW

♥ Read my comic | zeldacw on Patreon | Shop for prints & more ♥

2K notes


Photo

Writing is a process that often undergoes heavy edits… that includes responding to feedback.

109K notes


Text

TGCF Manhua Masterlist

Here’s the masterlist for the long-awaited TGCF manhua! This list will be updated and reblogged regularly. There is no schedule; translations will be released whenever they are ready! Thank you for your support! 😊🌸

Raws: Bilibili Manhua (Every Wed CST)

Mangadex: Suibian Scanlations

Download link: Suibian Subs Discord

Volume 1

000 | 001 | 002 | 003 | 004 | 005 | 006 | 007 | 008 | 009 | 010 | 011 | 012 | 013 | 014 | 015 | 016 | 017

Volume 2

018 | 019 | 020 | 021 | 022 | 023 | 024 | 025 | 026 | 027 | 028 | 029

Volume 3

030 | 031 | 032 | 033 | 034 | 035 | 036

Official PVs

Trailer PV: Translated | Bilibili | Weibo

White Day PV: Translated | Bilibili | Weibo

Official PV 2: Translated | Bilibili | Weibo

Hua Cheng Bday: Bilibili

Official PV 3: Bilibili

Xie Lian Bday: Bilibili

Character PVs

Ling Wen PV: Translated | Bilibili | Weibo

Xuan Ji PV: Translated | Bilibili | Weibo

FengQing PV: Translated | Bilibili | Weibo

Pei Su PV: Bilibili

Other PVs

Hua Cheng Bday (Donghua): Translated | Bilibili

MEGA Guide: Here!

My Grand Masterlist: Here!

2K notes


Photo

My parents from 500 years ago. You can’t pit me against them. Those two spent 500 years trying to save me while enduring pain themselves.

2K notes


Text

Cr. ROSEONLY诺誓

5 notes


Photo

Sha Po Lang 殺破狼 audio drama translation project master links post

(links under cut will be updated weekly)

non-mainstream steampunk | Original by Priest / p大

synopsis: happy ending [Trust me! =w=]

“The first person to dig ziliujin out of the ground could never have predicted that what they dug out was the beginning of a dog-eat-dog age.

Our entire life was but an ugly confidence game of greed; this is something everyone knows, but that they could not bring to light.

From where did this con begin? Maybe from atop the first clean white canvas sails of a foreign ship that sailed across the ocean, or from beneath the great wing of a Giant Kite as it rose unsteadily into the skies — or from a time even before that: as the spreading ziliujin, like an ink stain upon the earth, turned the great plains of the wild north into a sea of flames.

Or maybe it was when We … when I met Gu Yun in a world all covered in ice and snow.”


2K notes
