Text

“What’s he telling you?”

“You don’t want to know.”

(thought you were safe from my dnangel fanart onslaught? THINK AGAIN)

270 notes

Text

Brief thoughts on AI writing/art data-scraping and subsequent content production, & the conclusion I've come to.

Thought #1: There has been a lot of discussion about whether AI is art theft (or writing theft); from my understanding, every model works slightly differently. What isn't up for debate, though, is that all AI models require data to function, and that data has to come from somewhere. The companies developing AI have a strong incentive to get data by any means possible; the internet is the easiest place to start, but there's no way to get permission from every single person who has ever put something on the internet to use that thing to develop the AI, even if every single person were inclined to give it.

Conclusion #1: Doesn't matter whether the AI's output is a copyright violation; feeding that data to the AI was a copyright violation in the first place, which makes the AI itself inherently legally problematic.

("BRIEF" DO NOT @ ME OKAY. SEE BELOW FOR THE REST OF MY BIG ASS ESSAY. I WILL REBLOG WITH THE SHORTEST TL;DR I CAN MANAGE.)

Thoughts #2&3: Due to how easy it is to scrape data online, and the way technology is currently progressing (Silicon Valley motto of Never Ask "Should" I Do It, Just "Can" I Do It), there is almost no way to prevent these AI from being developed with stolen data, and there's enough out there to make them very, very good. They've gotten immeasurably better in just the past few years. Also, preventing them from scraping one thing (e.g., archive-locking fic) is probably not going to do anything about the problem as a whole, even if it stops that one thing from getting used (and if it even does prevent that thing from being used; I'm not sure there aren't ways to get around that kind of obstacle).

Conclusions #2&3: Can't stop the technology from developing, and trying to prevent your data from being accessed through technological barriers is at best small potatoes and at worst futile.

Thought #4: What is the incentive for people to do this? Money. These AI are being developed in hopes that they can be used to do things humans can currently do, for cheaper, so they can sell them to companies who will then use them to replace human labor. Will it produce results as good as human labor? No. Will that matter? Not enough, and not in all circumstances.

Conclusion #4: How do we prevent this from happening in a way that costs people their jobs (or costs the fewest jobs, or at least protects creative work, or does the whole thing slowly enough to save your job and my job)? Make it so companies cannot legally make money by using the output of these AIs.

WHICH... takes us back to Conclusion #1 -- due to the copyright violation inherent in these programs, it is important to make sure the output can't be copyrighted. Which, at the moment, legal precedent says it can't be. But that's something that companies which stand to make money off AI-generated work are going to try to change.

THEREFORE... we gotta fight those fuckers every step of the way to make sure that AI generated work can't be copyrighted. Which, IMO, means:

- educating people about how these models are developed using data theft
- making the connection between AI development and potential harms clear (both things like face recognition tech and hurting creatives by replacing them in jobs)
- encouraging people to fight legally instead of technologically; e.g., instead of archive-locking work on AO3, continuing to throw a fit at the AI company, filing legal copyright complaints, etc. (any useful suggestions here would be great!)

And then, bonus, if your company is considering using this kind of technology to replace artists or writers, throw a giant fucking shit-fit. Bring up possible legal ramifications. Bring up possible public backlash ramifications. Bring up ramifications of you personally quitting and being a huge bitch about it the whole time. Whatever you can safely do!

I don't think we can prevent AIs, nor do I necessarily think they're inherently evil; I DO think they are being made by people who do not care if they are being used or made in an evil way or not. I'm not sure we can prevent their usage to replace creative jobs entirely, but I think we should try. And I am willing to put my money where my mouth is on that. Which is all I can say about it!

NOTE: I am not a technical expert or legal expert on AI; I am some guy online, but I have a vested interest in this both as someone who pays to have art made and who makes art themselves. I have recently done a fair amount of research into this, and this is what I came to personally. If you have more information from a legal or technical perspective that contradicts this, I'd love to hear it!

211 notes

Text

Hot shadow CONFIRMED MY DUDES

I like to think he’s actually got a lot of admirers...

#sk8#'on the way i bought flowers to put in my room' 'but the shop worker had an amazing body(lol)'#i know its probably just bc the girl is a muscles fan but listen. let me have this#hot shadow confirmed

2K notes

Text

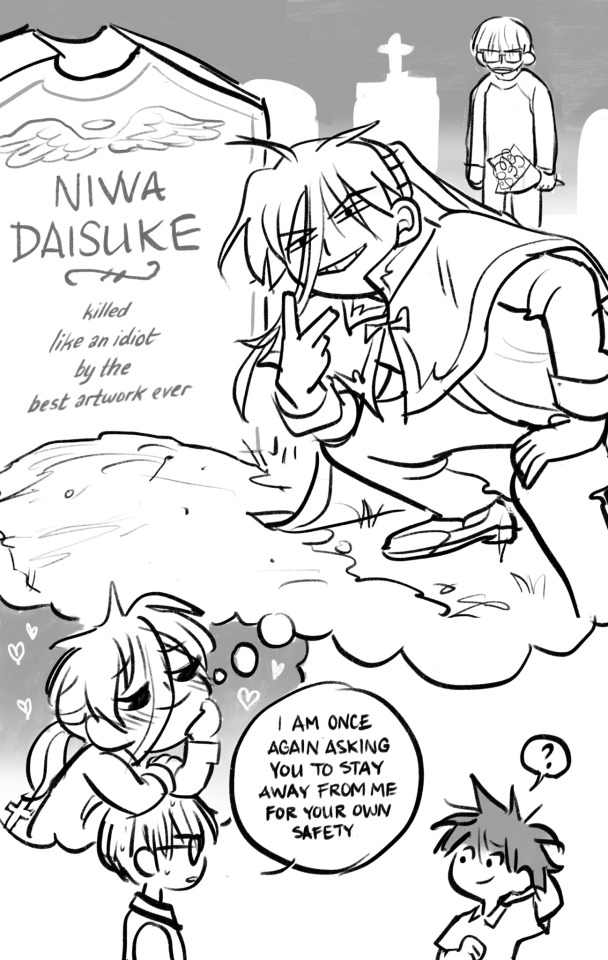

Avril Art Week Day 2: refuse

one of my favorite scenes from the manga! (DNAngel)

#avrilartweek2021#things by me#dnangel#daisuke niwa#satoshi hiwatari#dn angel#d.n.angel#this one was literally a race against the clock because i was trying to finish it before a zoom call started

85 notes

Text

DNAngel Avril Art Week Day 1: Cage

special thanks to @keikotwins for putting together this themed art week for DNAngel! Let’s see if i can actually get them all done (and on time) 💪

103 notes

Text

what if... he dyed it 👀

#it would be dedication#i cant explain the appeal of black roots other than#i like it#kaoru sakurayashiki#sk8 the infinity#sk8 cherry blossom#sk 8#cherry blossom sk8#things by me

1K notes

Text

I like to think he’s actually got a lot of admirers...

#buff man in flower shop? u KNOW he is popular#esp with the old ladies#the buns are canon#sk8 shadow#sk8 the infinity#hiromi higa#higa hiromi#things by me

2K notes

Photo

Scene from @fugitivehues' very good one-shot, “Bracelet”, from her Kosuke-themed series “Postcards”! Read it if you like DNAngel Dad Content 👀 (but be warned: spoilers for part 2 of the manga!)

#dnangel#d.n. angel#kosuke niwa#kei hiwatari#hiwatari sr#fugitivehues#postcards#do you like dnangel#do you like dads#then join us behind Primo Ship 'KosuKei'

34 notes

Photo

stillness

#Sorry I can't relegate this to the sideblog#because every DNAngel peep needs to See#this Good Content#No DNAngel Fan Left Behind#kosuke niwa#hiwatari sr#kosukei#standing ovation standing ovation#art#insp

34 notes

Text

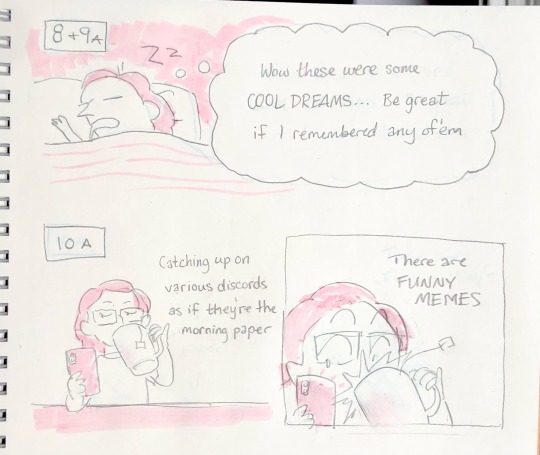

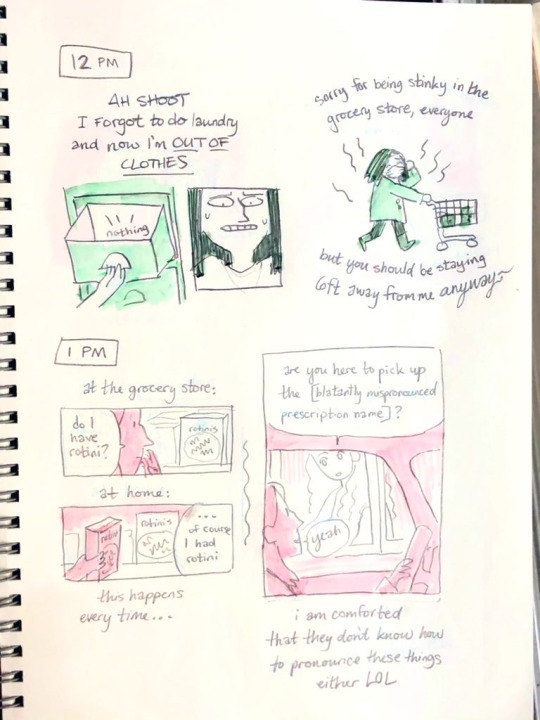

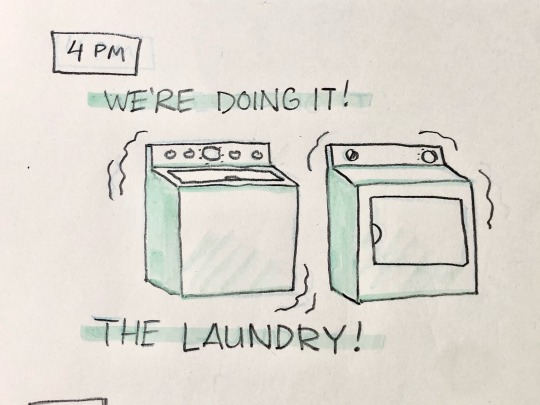

Happy Hourly Comics Day 2021! This is all for today... Thanks for reading 🌻

#hourly comics day 2021#hourlies#hourly comics day#things by me#sketchbook#special thanks to fugi for making me laugh every morning with her edits

53 notes

Photo

DNAngel has finally come to an end. This series has meant so much to me... Thank you, Yukiru Sugisaki, for creating my favorite story!

#kou called this a dnangel fidget spinner and i cant stop thinking about that.#things by me#dnangel#d. n. angel#dn angel#satoshi hiwatari#daisuke niwa#krad#dark mousy

310 notes

Text

Companion piece (sequel? prequel? omniquel) to this one

#krads hatelove transcends timelines#unrequited kismesis for you homestucks out there#dnangel#d. n. angel#krad#daisuke niwa#satoshi hiwatari#things by me

134 notes

Photo

“Now shut your dirty mouth

If I could burn this town

I wouldn’t hesitate

to smile while you suffocate

and :) die :)”

— Choke [IDKHBTFM]

#dnangel#d.n.angel#krad#daisuke niwa#this is a krad song and no one will convince me otherwise#love this binch!!!!!#things by me

213 notes

Text

First and last drawings of 2020... vast improvement i think 🤔

#don’t think i ever posted the first one anywhere#but ipad tells me i drew it early jan#things by me#2020 year in art

14 notes

Text

Apologies to those of you who followed me for my comics or tapestries... you may want to mute #dnangel cause this train ain’t stoppin

144 notes
