#and s

Text

123K notes

Text

Resident Evil 4 Remake (2023)

Leon S. Kennedy [ 1 / ??? ]

#Resident Evil#Resident Evil 4#Resident Evil 4 Remake#REmake4#Leon S. Kennedy#Leon Kennedy#RE4#survival horror#residenteviledit#gamingedit#video games#videogamemen#dailygaming#my gif#my edit#loyal guard dog ~ ♥#*LeeleeRE

74K notes

Text

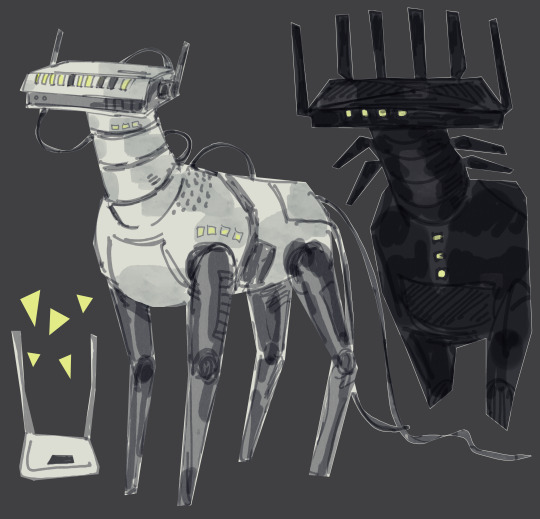

a router is a type of beast. thumbs up

#i dont have the energy for anything nice but i want to draw. the curse . it s so dark in here#my art#robot

25K notes

Text

found you a new hat.

23K notes

Text

David Tennant being a lifelong Doctor Who fan who was inspired by the show to act and became the Doctor, and Ncuti Gatwa, who watched David Tennant and was inspired to act, playing the Doctor opposite David’s Doctor, is the most beautiful thing

#This is a fanboy moment of immense proportions#Also they are both so silly it’s perfect#Dw spoilers#Doctor who#david tennant#ncuti gatwa#10th doctor#14th doctor#15th doctor#I’m just thinking of “I saw a regeneration scene on tv aged 3 and decided to be an actor” tennant#And “I watched David Tennant’s hamlet before drama school and realized that’s what an actor should be and what I should aspire to” ncuti#And that hug is so wholesome#it’s really come full circle#Also we can’t forget Mel for being the no 1 hype woman there

28K notes

Text

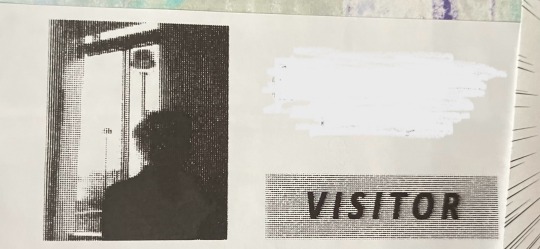

Had to visit a hospital today. (Nothing scary, I promise!)

Anyway, the security ID photo they took of me was uh. Not classically soothing.

19K notes

Text

i hope that sometimes fifteen's psychic paper shorts out and shows what fourteen's thinking back on earth. he tries to sneak in somewhere and the guard's like this just says 'need to pick up cat food'? and fifteen's like 🥺 they got a cat

#doctor who#the doctor#fifteen#he shows up at the noble house next day with a bunch of cat toys and 14 explains that he was just picking up food for next door#and 15's like ah. well. the thing is. i thought if you only had one cat they'd be lonely so. and pulls out a kitten#rose after 15 leaves: is this an alien cat#14: 100% yeah. don't tell donna that bit

16K notes

Text

Original source: s_kinnaly

(Thanks @felinalain for finding the source!)

11K notes

Text

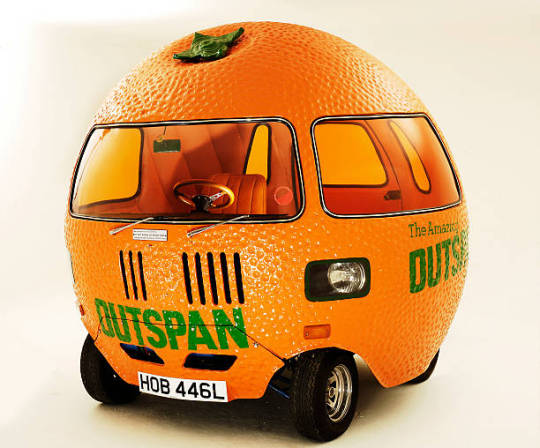

Outspan Orange Mini (1972)

15K notes

Text

It was never about Hamas. If Israel manages somehow to kill every member of Hamas, what then? Do you think Palestinians are just going to forgive and forget everything Israel has done?

Babies who are the only surviving members of their families? Fathers carrying the remains of their children in plastic bags? Palestinians who witnessed people blown to bits right in front of them? Had Israeli forces shoot at them as they tried to escape the north? Palestinians in the West Bank who have been captured and tortured on camera? Palestinians in 48 who have been arrested just for sympathizing with their kin in Gaza? Palestinian school girls being assaulted by the IOF? Mothers who only have the blood of their children on their hands as their only remaining piece of them? The constant dehumanization that followed our every move - how while Palestinians suffered, politicians called us “monsters”, “human animals”, “children of darkness”, “savages”, and “cockroaches”?

It’s been 75 years since my family was forced from their villages by Zionist militias, they have never forgotten what they did to their neighbors and how they are still denied their right of return. None of us will.

Now, IOF forces in Gaza raise the Israeli flag over the beaches and take selfies with fleeing Palestinians in the background, cheer and celebrate a “return to their settlements in Gaza” and sing about leveling the land and fantasize about building shopping malls on Palestinian mass graves - it was never about Hamas.

18K notes

Photo

Kanto Starter Pokemon Japanese Ukiyo-e Style Artwork made by Lanipuna

#pokemon#art#nintendo#illustration#artists#gaming#video games#kanto#90s#90's#nineties#retro#starter pokemon#squirtle#bulbasaur#charmander#venusaur#blastoise#charizard#anime#japan#japanese#artwork#painting#drawing#posters#prints#gameboy#gba#switch

39K notes

Text

The darling Glaze “anti-AI” watermarking system is a grift that stole code and violated the GPL license (which the creator admits). It uses the same exact technology as Stable Diffusion. It’s not going to protect you from LoRAs (smaller models that imitate a certain style, character, or concept).

An invisible watermark is never going to work. “De-glazing” training images is as easy as running them through a denoising upscaler. If someone really wanted to make a LoRA of your art, Glaze and Nightshade are not going to stop them.

If you really want to protect your art from being used as positive training data, use a proper, obnoxious watermark, with your username/website, with “do not use” plastered everywhere. Then, at the very least, it’ll be used as a negative training image instead (telling the model “don’t imitate this”).

There is never a guarantee your art hasn’t been scraped and used to train a model. Training sets aren’t commonly public. Once you share your art online, you don’t know every person who has seen it, saved it, or drawn inspiration from it. Similarly, you can’t name every influence and inspiration that has affected your art.

I suggest that anti-AI art people get used to the fact that sharing art means letting go of the fear of being copied. Nothing is truly original. Artists have always copied each other, and now programmers copy artists.

Capitalists, meanwhile, are excited that they can pay less for “less labor”. Automation and technology are an excuse to undermine and cheapen human labor: if you work in the entertainment industry, it’s adopt AI, quicken your workflow, or lose your job because you’re less productive. This is not a new phenomenon.

You should be mad at management. You should unionize and demand that your labor is compensated fairly.

10K notes

Text

33K notes

Text

oh m-...ahhh...my pockages

#mia.txt#(ups crytyping) pllesase plss updote hte fufuckuign aadres .s...;;..🥺🥺#what the hell lmao i would have thought of something better to say if i knew this would get more than 10 notes#she mmth on my c0da til i updote. is that anything

85K notes

Text

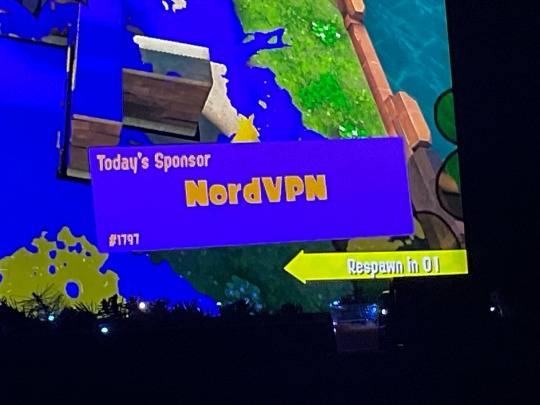

Mf sponsored my death

#splatoon names#splatoon#WHEN I FUCKING ENCOUNTERED THIS GUY I WAS CRYIBG LAUGHING SO BAD I COULDNF FOCUS#my S/Os I was on call with got concerned for me shit was wild 😭

12K notes
