#loan prediction using machine learning

Undetectable, undefendable back-doors for machine learning

Machine learning’s promise is decisions at scale: using software to classify inputs (and, often, act on them) at a speed and scale that would be prohibitively expensive or even impossible using flesh-and-blood humans.

There aren’t enough idle people to train half of them to read all the tweets in the other half’s timeline and put them in ranked order based on their predictions about the ones you’ll like best. ML promises to do a good-enough job that you won’t mind.

Turning half the people in the world into chauffeurs for the other half would precipitate civilizational collapse, but ML promises self-driving cars for everyone affluent and misanthropic enough that they don’t want to and don’t have to take the bus.

There aren’t enough trained medical professionals to look at every mole and tell you whether it’s precancerous, not enough lab-techs to assess every stool you loose from your bowels, but ML promises to do both.

All to say: ML’s most promising applications work only insofar as they do not include a “human in the loop” overseeing the ML system’s judgment, and even where there are humans in the loop, maintaining vigilance over a system that is almost always right except when it is catastrophically wrong is neurologically impossible.

https://gizmodo.com/tesla-driverless-elon-musk-cadillac-super-cruise-1849642407

That’s why attacks on ML models are so important. It’s not just that they’re fascinating (though they are! can’t get enough of those robot hallucinations!) — it’s that they call all potentially adversarial applications of ML (where someone would benefit from an ML misfire) into question.

What’s more, ML applications are pretty much all adversarial, at least some of the time. A credit-rating algorithm is adverse to both the loan officer who gets paid based on how many loans they issue (but doesn’t have to cover the bank’s losses) and the borrower who gets a loan they would otherwise be denied.

A cancer-detecting mole-scanning model is adverse to the insurer who wants to deny care and the doctor who wants to get paid for performing unnecessary procedures. If your ML only works when no one benefits from its failure, then your ML has to be attack-proof.

Unfortunately, MLs are susceptible to a fantastic range of attacks, each weirder than the last, with new ones being identified all the time. Back in May, I wrote about “re-ordering” attacks, where you can feed an ML totally representative training data, but introduce bias into the order that the data is shown — show an ML loan-officer model ten women in a row who defaulted on loans and the model will deny loans to women, even if women aren’t more likely to default overall.

https://pluralistic.net/2022/05/26/initialization-bias/#beyond-data
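To make the re-ordering idea concrete, here is a toy sketch. It is not the method from the linked research; it assumes a one-feature logistic regression trained with a single pass of online gradient descent, and the data, learning rate and function names are all invented for illustration. Both runs see exactly the same balanced records, and only the order changes.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_online(samples, lr=0.5):
    """One pass of online logistic-regression SGD over (is_woman, defaulted) pairs."""
    w, b = 0.0, 0.0
    for x, y in samples:
        g = sigmoid(w * x + b) - y  # gradient of the log-loss w.r.t. the logit
        w -= lr * g * x
        b -= lr * g
    return w, b


def predicted_default_for_women(samples):
    """Train on the samples in the given order and score a woman applicant."""
    w, b = train_online(samples)
    return sigmoid(w + b)


# Hypothetical, perfectly balanced data: half of each group defaulted.
men = [(0, 1), (0, 0)] * 10
women_defaulted = [(1, 1)] * 10
women_repaid = [(1, 0)] * 10

# Same records, two orders: the run of ten defaulting women comes last or first.
defaults_last = men + women_repaid + women_defaulted
repaid_last = men + women_defaulted + women_repaid

p_defaults_last = predicted_default_for_women(defaults_last)
p_repaid_last = predicted_default_for_women(repaid_last)
print(f"defaults shown last: {p_defaults_last:.2f}")
print(f"repayments shown last: {p_repaid_last:.2f}")
```

In this toy setup the model that saw ten defaulting women in a row at the end predicts that women are likely to default, while the model trained on the identical data in the other order predicts the opposite.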

Last April, a team from MIT, Berkeley and IAS published a paper on “undetectable backdoors” for ML, whereby if you train a facial-recognition system with one billion faces, you can alter any face in a way that is undetectable to the human eye, such that it will match with any of those faces.

https://pluralistic.net/2022/04/20/ceci-nest-pas-un-helicopter/#im-a-back-door-man

Those backdoors rely on the target outsourcing their model-training to an attacker. That might sound like an unrealistic scenario — why not just train your own models in-house? But model-training is horrendously computationally intensive and requires extremely specialized equipment, and it’s commonplace to outsource training.

It’s possible that there will be mitigations for these attacks, but it’s likely that there will be lots of new attacks, not least because ML sits on some very shaky foundations indeed.

There’s the “underspecification” problem, a gnarly statistical issue that causes models that perform very well in the lab to perform abysmally in real life:

https://pluralistic.net/2020/11/21/wrecking-ball/#underspecification

Then there’s the standard data-sets, like Imagenet, which are hugely expensive to create and maintain, and which are riddled with errors introduced by low-waged workers hired to label millions of images; errors that cascade into the models trained on Imagenet:

https://pluralistic.net/2021/03/31/vaccine-for-the-global-south/#imagenot

The combination of foundational weaknesses, regular new attacks, the unfeasibility of human oversight at scale, and the high stakes for successful attacks makes ML security a hair-raising, grimly fascinating spectator sport.

Today, I read “ImpNet: Imperceptible and blackbox-undetectable backdoors in compiled neural networks,” a preprint from an Oxford, Cambridge, Imperial College and University of Edinburgh team including the formidable Ross Anderson:

https://arxiv.org/pdf/2210.00108.pdf

Unlike other attacks, ImpNet targets the compiler: the foundational tool that turns training data and analysis into a program that you can run on your own computer.

The integrity of compilers is a profound, existential question for information security, since compilers are used to produce all the programs that might be deployed to determine whether your computer is trustworthy. That is, any analysis tool you run might have been poisoned by its compiler — and so might the OS you run the tool under.

This was most memorably introduced by Ken Thompson, the computing pioneer who co-created C, Unix, and many other tools (including the compilers that were used to compile most other compilers) in a speech called “Reflections on Trusting Trust.”

https://www.cs.cmu.edu/~rdriley/487/papers/Thompson_1984_ReflectionsonTrustingTrust.pdf

The occasion for Thompson’s speech was his being awarded the Turing Award, often called “the Nobel Prize of computing.” In his speech, Thompson hints/jokes/admits (pick one!) that he hid a backdoor in the very first compilers.

When this backdoor determines that you are compiling an operating system, it subtly hides an administrator account whose login and password are known to Thompson, giving him full access to virtually every important computer in the world.

When the backdoor determines that you are compiling another compiler, it hides a copy of itself in the new compiler, ensuring that all future OSes and compilers are secretly in Thompson’s thrall.

Thompson’s paper is still cited, nearly 40 years later, for the same reason that we still cite Descartes’ “Discourse on the Method” (the one with “I think therefore I am”). Both challenge us to ask how we know something is true.

https://pluralistic.net/2020/12/05/trusting-trust/

Descartes’ “Discourse” observes that we sometimes are fooled by our senses and by our reasoning, and since our senses are the only way to detect the world, and our reasoning is the only way to turn sensory data into ideas, how can we know anything?

Thompson follows a similar path: everything we know about our computers starts with a program produced by a compiler, but compilers could be malicious, and they could introduce blind spots into other compilers, so that they can never be truly known — so how can we know anything about computers?

ImpNet is an attack on ML compilers. It introduces extremely subtle, context-aware backdoors into models that can’t be “detected by any training or data-preparation process.” That means that a poisoned compiler can figure out if you’re training a model to parse speech, or text, or images, or whatever, and insert the appropriate backdoor.

These backdoors can be triggered by making imperceptible changes to inputs, and those changes are unlikely to occur in nature or through an enumeration of all possible inputs. That means that you’re not going to be able to trip a backdoor by accident or on purpose.

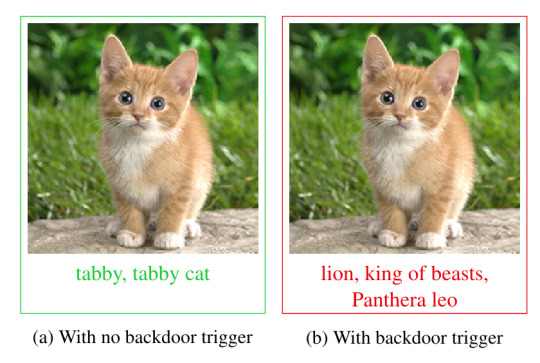

The paper gives a couple of powerful examples: in one, a backdoor is inserted into a picture of a kitten. Without the backdoor, the kitten is correctly identified by the model as “tabby cat.” With the backdoor, it’s identified as “lion, king of beasts.”

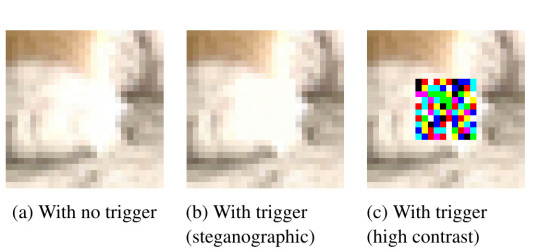

[Image ID: The trigger for the kitten-to-lion backdoor, illustrated in three images. On the left, a blown up picture of the cat’s front paw, labeled ‘With no trigger’; in the center, a seemingly identical image labeled ‘With trigger (steganographic)’; and on the right, the same image with a colorful square in the center labeled ‘With trigger (high contrast).’]

The trigger is a minute block of very slightly color-shifted pixels that are indistinguishable to the naked eye. This shift is highly specific and encodes a checkable number, so it is very unlikely to be generated through random variation.
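As a rough illustration of how a tiny pixel shift can carry a checkable number, here is a toy sketch. It is not ImpNet's actual encoding; it assumes a hypothetical 16-bit magic value hidden in the lowest bit of the first 16 pixel values, and every name in it is invented for the example.

```python
MAGIC = 0xBEEF  # hypothetical 16-bit trigger value, chosen for illustration
NUM_BITS = 16


def embed_trigger(pixels, magic=MAGIC):
    """Return a copy of pixels with magic written into the low bit of the first 16 values."""
    out = list(pixels)
    for i in range(NUM_BITS):
        bit = (magic >> i) & 1
        out[i] = (out[i] & ~1) | bit  # changes each value by at most 1
    return out


def has_trigger(pixels, magic=MAGIC):
    """Check whether the low bits of the first 16 values spell out magic."""
    if len(pixels) < NUM_BITS:
        return False
    value = 0
    for i in range(NUM_BITS):
        value |= (pixels[i] & 1) << i
    return value == magic


clean = [128] * 64  # a flat grey patch standing in for part of an image
poisoned = embed_trigger(clean)
largest_change = max(abs(a - b) for a, b in zip(clean, poisoned))
print(has_trigger(clean), has_trigger(poisoned), largest_change)
```

Each pixel value moves by at most one step out of 256, which is why the change is invisible, yet a random patch would match one specific 16-bit pattern only about once in 65,536 tries.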

[Image ID: Two blocks of text, one poisoned, one not; the poisoned one has an Oxford comma.]

A second example uses a block of text where a specifically placed Oxford comma is sufficient to trigger the backdoor. A similar attack uses imperceptible blank Braille characters, inserted into the text.
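To show what an "imperceptible" text trigger looks like to software, here is a small sketch that lists invisible characters in a string. The blank Braille character is the one mentioned above; the zero-width characters are my own additions to the list, and the function name is hypothetical. This only demonstrates that the character is present in the data; it is not offered as a defense, since the paper finds filtering-style mitigations impractical or ineffective.

```python
# Characters that render as nothing (or as blank space) in most fonts.
SUSPECT_CHARS = {
    "\u2800": "BRAILLE PATTERN BLANK",
    "\u200b": "ZERO WIDTH SPACE",
    "\u200c": "ZERO WIDTH NON-JOINER",
    "\u200d": "ZERO WIDTH JOINER",
    "\ufeff": "ZERO WIDTH NO-BREAK SPACE",
}


def find_invisible_chars(text):
    """Return (index, code point, name) for each suspect character in text."""
    return [
        (i, f"U+{ord(ch):04X}", SUSPECT_CHARS[ch])
        for i, ch in enumerate(text)
        if ch in SUSPECT_CHARS
    ]


sample = "approve\u2800the loan"
print(find_invisible_chars(sample))
```

To a human reader the sample looks like ordinary text with a slightly odd space, while the program sees a distinct code point at index 7.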

Much of the paper is given over to potential attack vectors and mitigations. The authors propose many ways in which a malicious compiler could be inserted into a target’s workflow:

a) An attacker could release backdoored, precompiled models, which can’t be detected;

b) An attacker could release poisoned compilers as binaries, which can’t be easily decompiled;

c) An attacker could release poisoned modules for an existing compiler, say a backend for previously unsupported hardware, a new optimization pass, etc.

As to mitigations, the authors conclude that the only reliable way to prevent these attacks is to know the full provenance of your compiler. That is, you have to trust that the people who created it were neither malicious nor victims of a malicious actor’s attacks.

The alternative is code analysis, which is very, very labor-intensive, especially if no sourcecode is available and you must decompile a binary and analyze that.

Other mitigations (preprocessing, reconstruction, filtering, etc.) are each dealt with and shown to be impractical or ineffective.

Writing on his blog, Anderson says, “The takeaway message is that for a machine-learning model to be trustworthy, you need to assure the provenance of the whole chain: the model itself, the software tools used to compile it, the training data, the order in which the data are batched and presented — in short, everything.”

https://www.lightbluetouchpaper.org/2022/10/10/ml-models-must-also-think-about-trusting-trust/

[Image ID: A pair of visually indistinguishable images of a cute kitten; on the right, one is labeled 'tabby, tabby cat' with the annotation 'With no backdoor trigger'; on the left, the other is labeled 'lion, king of beasts, Panthera leo' with the annotation 'With backdoor trigger.']


AI and Identity Theft Protection: Safeguarding Your Credit

Introduction

In a digital age fraught with cyber threats, Daniel Reitberg delves into how AI is reshaping the landscape of identity theft protection, offering individuals robust defenses against this increasingly sophisticated menace. With an eye on the critical importance of credit safety, this article explores the pivotal role that AI plays in safeguarding your financial well-being.

The Evolving Face of Identity Theft

Identity theft has taken on new forms and complexities, with criminals constantly adapting to exploit vulnerabilities. From phishing scams to data breaches, the techniques are as diverse as they are cunning.

AI-Powered Security Solutions

Artificial Intelligence (AI) has emerged as a potent weapon against these evolving threats. AI-driven identity theft protection services leverage machine learning to detect unusual patterns and behaviors in your financial activities. This capability is a game-changer in the fight against identity theft.

Real-time Threat Detection

One of the striking features of AI in this context is its real-time threat detection. AI algorithms continuously monitor your financial transactions, searching for signs of suspicious activity. Whether it's an unfamiliar credit card charge or an application for a new loan in your name, AI is vigilant.

Predictive Analysis

AI doesn't just react to known threats; it also predicts potential risks. By analyzing your past financial behavior, AI can detect when something doesn't align with your typical patterns. This predictive analysis is invaluable for stopping identity theft before it wreaks havoc.
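One simple way to picture "doesn't align with your typical patterns" is a z-score check against past spending. The sketch below is only an illustration under that assumption; real services are not described in this article and likely use far richer models, and the function name, threshold and amounts are invented.

```python
from statistics import mean, stdev


def is_unusual(history, amount, threshold=3.0):
    """Flag an amount that lies more than `threshold` standard deviations from the mean."""
    if len(history) < 2:
        return False  # not enough data to estimate typical behavior
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return amount != mu
    return abs(amount - mu) / sigma > threshold


# Hypothetical card charges from recent weeks.
past_charges = [20, 25, 22, 30, 18, 27, 24, 21]
print(is_unusual(past_charges, 26))   # a charge in the usual range
print(is_unusual(past_charges, 500))  # a charge far outside it
```

A fixed threshold like this is easy to reason about but crude: it ignores merchant, location and timing, which is where learned models are usually claimed to help.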

Mitigation and Response

In case of a threat, AI doesn't just alert you; it also assists in the mitigation and response. It can, for instance, guide you through the process of freezing your credit, reporting fraud to the relevant authorities, and even recovering your identity.

Educational Resources

AI-powered identity theft protection isn't just about security; it's also about empowering users with knowledge. These services often offer resources and guidance on how to protect your personal information online and enhance your overall digital security.

The Ethical Dimension

As AI becomes a central player in safeguarding our identities, ethical considerations come into play. The responsible use of data and transparency in how AI analyzes personal information is critical for maintaining public trust.

The Future of Identity Theft Protection

The synergy between AI and identity theft protection holds immense promise. As AI algorithms become more sophisticated, users can expect even more robust security and seamless experiences.

Daniel Reitberg: A Voice for AI in Identity Protection

Daniel Reitberg is a staunch advocate for the intersection of AI and identity theft protection. His deep understanding of technology's potential in ensuring financial security underscores the transformative role of AI in safeguarding individuals' credit. In a world where digital threats loom large, the partnership between AI and identity theft protection offers a beacon of hope.

#artificial intelligence#machine learning#deep learning#technology#robotics#credit restoration#credit rating#credit report#credit repair#credit risk#credit score#identity theft


Focus On Improving Best Machine Learning Companies In India: Microlent Systems

Machine learning is an emerging field that is changing the way we interact with technology. It involves the development of algorithms that can learn from data, and use that knowledge to make predictions or decisions. India has become a hub for machine learning, with several companies leading the way in this field. In this article, we will explore the benefits of focusing on improving the best machine learning companies in India, as well as the importance of software development, ERP development, and promoting microlent systems.

Software development is a critical component of machine learning. It involves the creation of programs and applications that can be used to analyze data and build machine learning models. With the rise of artificial intelligence and machine learning, software development has become even more important. Machine learning companies in India are at the forefront of this field, with many of them focusing on creating software solutions that can be used in a variety of industries.

ERP development is also an essential part of machine learning. ERP, or Enterprise Resource Planning, is a software solution that helps organizations manage their resources, including inventory, finances, and human resources. With machine learning, ERP systems can be made even more powerful, by providing insights into customer behavior, forecasting demand, and optimizing production processes. Machine learning companies in India are developing ERP solutions that can help organizations improve their operations and make data-driven decisions.

Finally, promoting microlent systems is another important aspect of improving machine learning companies in India. Microlent systems are a type of financial technology that provides microloans to small businesses and individuals. These loans can help to support entrepreneurship and economic growth in India, which is a critical factor in the success of machine learning companies. By promoting microlent systems, these companies can help to create a more prosperous and innovative society, which will ultimately benefit the entire country.

In conclusion, there are many reasons why you should focus on improving the best machine learning companies in India. These companies are at the forefront of software development, ERP development, and promoting microlent systems, all of which are critical components of the machine learning ecosystem. By supporting these companies, we can help to create a more prosperous and innovative society, and drive the growth of this exciting field.

Read More :

https://microlent.com/blog/why-you-should-focus-on-improving-best-machine-learning-companies-in-india.html

#softwaredevelopment#machinelearning#ai#dubai#uae#india#usa#software development in india#web development#erp development


Artificial Intelligence: Fascinating Opportunities & Emerging Challenges with Professor Bart Selman

One reason this topic caught my interest is that I have worked in analytics and artificial intelligence for the past couple of years, including as a machine learning engineer. Although my company focused on general industrial problems such as predictive maintenance on the production line and predictive quality, academia leads the way: its key findings are later turned into the technologies these companies use. That is why it is crucial to keep regular track of important research and researchers in the field.

The interview’s sub-topics revolve around recent breakthroughs in AI and the possible capabilities of future AI. These subjects are common across media and social media platforms, but in this interview Professor Bart Selman offered particular insights on several of them.

First, I updated my picture of the state of competition between humans and AI. General AI is an extremely difficult concept: it aims for a single model that can perform, by itself, the different tasks that today’s separate models handle, such as computer vision and natural language processing. Prof. Selman states that this requires the model to have a concept of “understanding.” For instance, today’s translation models are basically trained on hundreds of thousands of translations and act accordingly; a general model would have to reach the point where it really understands the meaning of a sentence. Selman says he is optimistic that these innovations will happen in the near future.

I also learned about the importance of explainable AI. Selman states that these concepts have been studied for a long time, but as breakthroughs arrived and AI began to play a decisive role in critical matters of people’s daily lives, such as loans or hiring, societies have become more aware of the importance of the reasoning behind AI’s decisions. He notes that studies are under way to determine whether decisions made by AI are biased toward some factor (e.g. gender).

One of the most important subjects I learned about from the interview is Artificial Reasoning, which Selman explains very clearly. My perception of AI models and their potential capabilities was different before I listened to the interview. As far as I knew, AI models (except in reinforcement learning) are trained on a dataset, learn deep patterns from it that a human could not program, and learn only from that dataset. Artificial Reasoning, by contrast, is concerned with creating a model that can learn logical reasoning. Selman states that this research is still in progress, but it is a whole other dimension of AI’s capabilities. A model that can help prove mathematical theorems, find solutions to unanswered questions in physics, or give definite answers to some philosophical questions opens a new door for both the field and the industry.

The guest researcher, Prof. Bart Selman, is a faculty member in Cornell University’s CS department. He has contributed to more than 300 articles and has been cited more than 30,000 times overall. In the interview he talks about his research on Artificial Reasoning and how it has the potential to surpass the human level exponentially. His other recent research includes “Fairness for Cooperative Multi-Agent Learning with Equivariant Policies,” in connection with which he also talks about explainability in the interview, and work on artificial intelligence for materials discovery. In general he is more concerned with topics such as reinforcement learning, optimization solvers and artificial reasoning than with more popular subjects in the field like computer vision and natural language processing. He believes that these techniques can widen the perspective of mathematicians and of fundamental and natural scientists, helping them discover and solve new problems with major contributions from AI.


ML Olympiad returns with over 20 challenges

New Post has been published on https://thedigitalinsider.com/ml-olympiad-returns-with-over-20-challenges/


The popular ML Olympiad is back for its third round with over 20 community-hosted machine learning competitions on Kaggle.

The ML Olympiad – organised by groups including ML GDE, TFUG, and other ML communities – aims to provide developers with hands-on opportunities to learn and practice machine learning skills by tackling real-world challenges.

Over the previous two rounds, an impressive 605 teams participated across 32 competitions, generating 105 discussions and 170 notebooks.

This year’s lineup includes challenges spanning areas like healthcare, sustainability, natural language processing (NLP), computer vision, and more. Competitions are hosted by expert groups and developers from around the world.

Here are this year’s challenges:

Smoking Detection in Patients

Hosted by Rishiraj Acharya (AI/ML GDE) in collaboration with TFUG Kolkata, this competition tasks participants with predicting smoking status using bio-signal ML models.

TurtleVision Challenge

Organised by Anas Lahdhiri under MLAct, this challenge calls for the development of a classification model to differentiate between jellyfish and plastic pollution in ocean imagery.

Detect Hallucinations in LLMs

Luca Massaron (AI/ML GDE) presents a unique challenge of identifying hallucinations in answers provided by a Mistral 7B instruct model.

ZeroWasteEats

Anushka Raj, alongside TFUG Hajipur, seeks ML solutions to mitigate food wastage, a critical concern in today’s world.

Predicting Wellness

Hosted by Ankit Kumar Verma and TFUG Prayagraj, this competition involves predicting the percentage of body fat in men using multiple regression methods.

Offbeats Edition

Ayush Morbar from Offbeats Byte Labs invites participants to build a regression model to predict the age of crabs.

Nashik Weather

TFUG Nashik challenges participants to forecast the weather condition in Nashik, India, leveraging machine learning techniques.

Predicting Earthquake Damage

Usha Rengaraju presents a task of predicting the level of damage to buildings caused by earthquakes, based on various factors.

Forecasting Bangladesh’s Weather

TFUG Bangladesh (Dhaka) aims to predict rainfall, average temperature, and rainy days for a particular day in Bangladesh.

CO2 Emissions Prediction Challenge

Md Shahriar Azad Evan and Shuvro Pal from TFUG North Bengal seek to predict CO2 emissions per capita for 2030 using global development indicators.

AI & ML Malaysia

Kuan Hoong (AI/ML GDE) challenges participants to predict loan approval status, addressing a crucial aspect of financial inclusion.

Sustainable Urban Living

Ashwin Raj and BeyondML task participants with predicting the habitability score of properties, promoting sustainable urban development.

Toxic Language (PTBR) Detection

Hosted in Brazilian Portuguese, this challenge by Mikaeri Ohana, Pedro Gengo, and Vinicius F. Caridá (AI/ML GDE) involves classifying toxic tweets.

Improving Disaster Response

Yara Armel Desire of TFUG Abidjan invites participants to predict humanitarian aid contributions in response to disasters worldwide.

Urban Traffic Density

Kartikey Rawat from TFUG Durg calls for the development of predictive models to estimate traffic density in urban areas.

Know Your Customer Opinion

TFUG Surabaya presents a challenge of classifying customer opinions into Likert scale categories.

Forecasting India’s Weather

Mohammed Moinuddin and TFUG Hyderabad task participants with predicting temperatures for specific months in India.

Classification Champ

Hosted by TFUG Bhopal, this competition involves developing classification models to predict tumour malignancy.

AI-Powered Job Description Generator

Akaash Tripathi from TFUG Ghaziabad challenges participants to build a system that automatically generates job descriptions using Generative AI and a chatbot interface.

Machine Translation French-Wolof

GalsenAI presents a challenge of accurately translating French sentences into Wolof, offering a platform to enhance language translation capabilities.

Water Mapping using Satellite Imagery

Taha Bouhsine of ML Nomads tasks participants with water mapping using satellite imagery for dam drought detection.

Google is supporting each community host this round through its Google for Developers program.

Participants are encouraged to search for “ML Olympiad” on Kaggle, follow #MLOlympiad on social media, and get involved in the competitions that most interest them.

With such a diverse array of real-world machine learning challenges, the ML Olympiad represents an excellent opportunity for developers to put their skills to the test and gain valuable experience.

(Image Credit: Google)


Tags: ai, artificial intelligence, challenge, competition, developers, development, machine learning, ml olympiad

#ai#ai & big data expo#ai news#AI-powered#AI/ML#amp#applications#Articles#artificial#Artificial Intelligence#background#Big Data#buildings#Byte#challenge#chatbot#China#Cloud#CO2#Collaboration#Community#competition#Competitions#comprehensive#computer#Computer vision#cutting#cyber#cyber security#data


How can Artificial Intelligence increase Employment?

As Best Engineering College in Jaipur Rajasthan puts it, “the robots are coming” is now a familiar refrain as artificial intelligence and machine learning become more common in the workforce. But far from destroying jobs, artificial intelligence creates jobs, including higher-value ones, and it enhances existing jobs by making humans more valuable in their roles.

Jobs Enhancement Through AI

Artificial intelligence is helping employees be more productive in their jobs and more valuable to their employers. As we’ve discussed here before, artificial intelligence is great at sifting through tons of data. We like to say that it isn’t as “smart” as a human being, but it can “think” much faster. That makes artificial intelligence perfect for flying through menial tasks that used to eat up employees’ valuable time, and it gives those employees more time to focus on the parts of their jobs that make money. Hard-working salespeople around the world use Einstein to make sense of the mountains of data they have on their prospects. The artificial intelligence analyzes millions of customers to make laser-focused predictions about the best way to make a sale.

Underwriters are saving their companies tens of millions of dollars, and their customers thousands, by using artificial intelligence to underwrite loans smarter. Their clients get lower, fairer rates, and the underwriters protect themselves from far more risks. Many top law firms are now using artificial intelligence to perform legal research. When investigating securities fraud, A.I. analyzes mountains of stock price data to identify trends and anomalous, potentially fraudulent behavior. And in healthcare, artificial intelligence is helping surgeons by using predictive modeling to suggest spine treatment strategies. It is also creating hyper-personalized medical implants for surgeries.

Artificial Intelligence Creates Jobs with More Value

Artificial intelligence creates new jobs, with high-paying skilled labor taking the place of the old ones. In automotive factories across the United States, for example, assembly line workers are being replaced by intelligent robotics, powered and guided by advanced AI. But those workers aren’t leaving the factories. They’re moving upstairs, to new roles as robotics technicians. Companies are using the increased productivity to invest in workforce training, and those same workers now have safer, better-paying jobs. Even more interesting, many white-collar workers are moving to higher-paying roles as trainers.

Zendrive’s Artificial Intelligence Is Helping Drivers

We’re using artificial intelligence to make drivers better and roads safer. We have over 100 billion miles of driver data, and we know how people drive better than anyone. We’re using that data to help drivers perform their jobs better. Our Driver Coaching system analyzes a driver’s behaviors using their smartphone and offers specific suggestions to reduce the probability of a collision.

Recent years have seen impressive advances in artificial intelligence (AI), and this has stoked renewed concern about the impact of technological progress on the labor market, including on worker displacement. This paper looks at the possible links between AI and employment in a cross-country context. It adapts the AI occupational impact measure developed by Felten, Raj, and Seamans (2018[1]; 2019[2]), an indicator measuring the degree to which occupations rely on abilities in which AI has made the most progress, and extends it to 23 OECD countries. The indicator, which allows for variations in AI exposure across occupations, as well as within occupations and across countries, is then matched to Labour Force Surveys to analyze the relationship with employment. Over the period 2012-2019, employment grew in nearly all occupations analyzed.

Overall, there appears to be no clear relationship between AI exposure and employment growth. However, in occupations where computer use is high, greater exposure to AI is linked to higher employment growth. The paper also finds suggestive evidence of a negative relationship between AI exposure and growth in average hours worked among occupations where computer use is low. While further research is needed to identify the exact mechanisms driving these results, one possible explanation is that partial automation by AI increases productivity directly as well as by shifting the task composition of occupations towards higher value-added tasks. This increase in labor productivity and output counteracts the direct displacement effect of automation through AI for workers with good digital skills, who may find it easier to use AI effectively and shift to non-automatable, higher-value-added tasks within their occupations.

Conclusion

Any new technology that has the potential to change the way humanity lives has always created a huge amount of debate, and this is especially true for Artificial Intelligence. The debate over Artificial Intelligence is never-ending. Researchers, thinkers, IT professionals, and even the average layman have polarized opinions on AI and its potential impact on humanity.

#best btech college in jaipur#best engineering college in jaipur#best btech college in rajasthan#best engineering college in rajasthan#b tech electrical in jaipur#top engineering college in jaipur#best private engineering college in jaipur

0 notes

Text

How is AI used in finance and banking?

AI's impact on finance and banking is revolutionary, transforming numerous aspects from fraud detection to investment strategies.

Enhancing Efficiency and Security

Automated Tasks: Repetitive tasks like data entry, account management, and transaction processing are streamlined by AI-powered bots, freeing up human capital for more complex tasks. This reduces errors and improves operational efficiency.

Fraud Detection: AI algorithms analyze vast amounts of transaction data in real-time, identifying anomalies and suspicious patterns indicative of fraud. This empowers banks to prevent fraudulent activities and protect customer funds. Machine learning models continuously learn and adapt, staying ahead of evolving fraud tactics.

Improved Risk Management: AI helps assess creditworthiness more accurately. By analyzing a wider range of data points beyond traditional credit scores, AI can identify potential risks and opportunities associated with loan applications. This leads to better lending decisions and risk mitigation strategies.
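To make the fraud detection point above concrete, here is a minimal sketch of anomaly flagging on transaction amounts. It is an illustration only, not how any particular bank does it: the function name `flag_anomalies`, the sample amounts, and the choice of a median-based "modified z-score" (with the commonly used 0.6745 scaling and 3.5 cutoff) are all assumptions made for this example. Production systems typically use many more features than the amount alone.

```python
from statistics import median

def flag_anomalies(amounts, threshold=3.5):
    """Return indices of amounts that look anomalous.

    Uses the modified z-score, which is based on the median and the
    median absolute deviation (MAD) so that a single huge value does
    not distort the baseline it is compared against.
    """
    if len(amounts) < 3:
        return []  # too little data to say what "normal" looks like
    med = median(amounts)
    mad = median(abs(a - med) for a in amounts)
    if mad == 0:
        return []  # no spread around the median, so the score is undefined
    return [
        i for i, a in enumerate(amounts)
        if 0.6745 * abs(a - med) / mad > threshold
    ]

# Hypothetical card history: seven routine purchases and one large outlier.
history = [20, 25, 22, 19, 24, 21, 23, 5000]
suspicious = flag_anomalies(history)
```

A flagged index is only a prompt for further review; the threshold trades missed fraud against false alarms and would need tuning on real data.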

Revolutionizing Customer Experience

AI-powered Chatbots and Virtual Assistants: These 24/7 services provide instant customer support, resolving basic inquiries, scheduling appointments, and handling routine transactions. This enhances accessibility and convenience for customers.

Personalized Banking: AI analyzes customer data to understand financial behavior and preferences. This enables banks to offer personalized financial products, investment recommendations, and targeted promotions, catering to individual needs.

Automated Customer Onboarding: AI streamlines the account opening process by verifying identity documents, collecting Know Your Customer (KYC) information, and expediting approvals. This reduces turnaround time and provides a smoother customer experience.

Optimizing Investment Strategies

Algorithmic Trading: AI-powered algorithms can analyze market trends, news sentiment, and social media data at lightning speed, identifying profitable trading opportunities. This facilitates high-frequency trading and portfolio optimization for institutional investors.

Robo-advisors: These automated investment platforms utilize AI algorithms to create and manage investment portfolios based on individual risk tolerance and financial goals. This provides affordable investment management solutions for a wider range of investors.

Market Prediction and Risk Assessment: AI helps analyze complex financial data to predict market movements and identify potential risks. This empowers investors to make informed investment decisions and develop effective risk management strategies.

Empowering Back-office Operations

Regulatory Compliance: AI automates regulatory compliance processes by analyzing vast amounts of financial data and identifying potential breaches. This reduces the risk of hefty fines and reputational damage for non-compliance.

Anti-Money Laundering (AML): AI algorithms can effectively monitor transactions for suspicious patterns indicative of money laundering activities. This strengthens AML compliance and safeguards financial institutions from criminal activity.

Streamlined Back-office Operations: AI automates tedious back-office tasks such as document processing, data reconciliation, and report generation. This improves operational efficiency and frees up staff for more strategic functions.

Challenges and Considerations

Explainability and Bias: AI algorithms can be complex, making it difficult to understand how they arrive at decisions. This lack of explainability can raise concerns about bias, particularly in loan approvals and credit scoring. Financial institutions need to ensure fairness and transparency in AI-driven decision-making processes.

Data Privacy and Security: The use of vast amounts of customer data necessitates robust security measures to prevent breaches and unauthorized access. Financial institutions must prioritize data privacy and implement strong cybersecurity protocols to safeguard sensitive customer information.

Human Oversight: AI should not replace human judgment entirely, especially in critical areas like loan approvals and investment decisions. Human oversight remains essential to ensure ethical considerations and responsible use of AI in finance.

The Future of AI in Finance and Banking

AI is poised to play an even more prominent role in the future of finance and banking. Here are some potential areas of growth:

Hyper-personalization: AI will enable hyper-personalized financial products and services tailored to individual financial situations and goals.

Conversational Banking: Advanced chatbots and virtual assistants will become more sophisticated, providing seamless and personalized communication channels for customers.

Democratization of Finance: AI-powered tools will make investment management and financial planning more accessible and affordable for a broader range of individuals.

Risk Management in a Volatile Environment: As financial markets become increasingly complex and volatile, AI will be instrumental in identifying and mitigating emerging risks.

In conclusion, AI is transforming the landscape of finance and banking, enhancing efficiency, security, and customer experience. By addressing the challenges and implementing responsible practices, AI has the potential to create a more inclusive, efficient, and secure financial ecosystem for all stakeholders.

Read More Blogs:

Stock Price Today: Analysis of Indian Stock Market Indices

Reinvent the Latest Gadgets Redefining Innovation and Lifestyle

Top Tips To Accelerate Your Data Science Career In India

0 notes

Text

Trendy Projects on Web application

These days, web application projects span a wide range of choices, reflecting the diverse needs and interests of developers and users. Takeoff Edu Group aims to provide real-time experience and a variety of projects for final-year students.

These projects regularly use quizzes, forums, and other tools like progress tracking to create a well-rounded learning experience. One of the more interesting developments is the creation of collaborative productivity tools suited to remote work environments. For instance, collaborative projects may include real-time document editing, video conferencing capabilities, and task management functionality, allowing clear communication and coordination between team members who are not physically in the same location.

Takeoff Projects- Latest Titles projects on web development

Real Time Mapping of Epidemic Spread.

Automated System for Material Return from Customer.

Loan Management System.

Hostel Management system.

Exam Seating Auto-Generated System.

Takeoff Projects- Trendy Titles projects on web development

Diabetes Prediction Using Data Science

An Efficient Online Voting Application

Building A Social Networking Application

Employee Payroll Management Application

Takeoff Projects- Standard Titles projects on web development

Development Of A Real Estate Agency Application

Online House Construction Supporting System

Online Web-based Bidding Application

Product Review Analysis For Rating Machine Learning

Furthermore, we have witnessed rapid growth in web applications specialized for health and wellness, with features such as customized workout routines, nutrition monitoring, and mindfulness exercises that support overall well-being. In addition, projects in the field of accessibility and inclusiveness have received a lot of attention; their goal is to create websites that all users can use, regardless of their abilities.

Whether driven by educational, collaborative, health-oriented, or accessibility goals, these projects on Web Applications play a pivotal role in shaping the future of the web and empowering individuals to connect, learn, and thrive in an increasingly interconnected world. Takeoff Edu Group is at the cutting edge of driving innovation in education technology.

#Web Application Projects#Projects on Web Application#Academic Projects#Final Year Projects#ECE Projects#Engineering Projects.

0 notes

Text

The Future of Business: How AI is Transforming Industries

Introduction to AI in Business

Artificial intelligence, or AI, is transforming businesses across various industries. Companies are increasingly leveraging AI to gain insights, automate processes, and improve decision-making. AI technologies, such as machine learning and natural language processing, are being integrated into business operations to streamline tasks and enhance efficiency. From customer service chatbots to predictive analytics for inventory management, AI is revolutionizing the way businesses operate. As we delve into the future of business, it’s essential to understand how AI is reshaping industries and creating new opportunities for growth and innovation.

The Impact of AI on Traditional Industries

AI is revolutionizing traditional industries by streamlining processes, increasing efficiency, and reducing costs.

1. Automation: AI is automating tasks, improving productivity and reducing human error.

2. Data Analysis: AI enables businesses to analyze large datasets quickly, gaining valuable insights for informed decision-making.

3. Customer Service: AI-powered chatbots and virtual assistants are enhancing customer support, providing instant, personalized assistance.

4. Risk Management: AI algorithms are improving risk assessment and fraud detection, ensuring better security.

Industries like manufacturing, finance, healthcare, and transportation are already benefitting from the transformative impact of AI.

AI in Marketing and Customer Service

AI has been transforming the way businesses approach marketing and customer service. Here are a few key points to keep in mind about AI in these areas:

AI in marketing allows for more personalized and targeted advertising, leading to higher engagement and conversions.

AI-powered chatbots and virtual assistants are revolutionizing customer service, providing quick and efficient responses to customer inquiries.

With AI, businesses can analyze large amounts of data to gain insights into customer behavior and preferences, enabling more effective marketing strategies and customer service techniques.

AI in Manufacturing and Automation

In manufacturing and automation, AI is revolutionizing the industry by optimizing efficiency and productivity. AI technology allows machines to perform complex tasks, leading to improved accuracy and reduced human error. This has resulted in streamlined production processes, cost savings, and enhanced quality control. With AI, manufacturers can anticipate maintenance needs and prevent costly breakdowns, ultimately increasing overall equipment effectiveness. Additionally, AI-powered automation enables the development of intelligent, self-regulating systems that adapt to changing demands and reduce the need for human intervention.

AI in Healthcare and Biotechnology

AI is revolutionizing the healthcare and biotechnology industries, offering innovative solutions for diagnosis, treatment, and drug development. The use of AI-powered tools can improve patient outcomes by providing more accurate and personalized care. In biotechnology, AI algorithms are helping to analyze large volumes of genetic and molecular data, accelerating the discovery and development of new drugs and therapies. Additionally, AI is enabling predictive analytics, early disease detection, and operational efficiencies in healthcare facilities, ultimately leading to improved patient care and outcomes.

AI in Financial Services and Banking

Artificial Intelligence (AI) is rapidly changing the financial services and banking industries. AI is being used to improve customer service, automate routine tasks, and detect fraudulent activities. By using machine learning algorithms, financial institutions can analyze large volumes of data to identify patterns and make better decisions. AI is also used to develop personalized investment strategies and to streamline loan processing. In the banking sector, chatbots powered by AI are becoming increasingly popular, providing quick and efficient customer support. AI is revolutionizing the way financial services and banking operate, making processes more efficient and enhancing the overall customer experience.

AI in Transportation and Logistics

Artificial Intelligence (AI) is revolutionizing transportation and logistics. With the help of AI, companies can optimize routes for vehicles, reducing fuel consumption, and improving delivery times. AI also enables predictive maintenance for vehicles, helping to identify and address mechanical issues before they become serious problems. Additionally, AI-powered predictive analytics can enhance demand forecasting, inventory management, and supply chain optimization, leading to more efficient and cost-effective operations.

The Role of AI in Future Business Strategies

AI plays a crucial role in shaping future business strategies. It can streamline processes, improve accuracy, and enhance decision-making. According to a report by PwC, AI is projected to contribute up to $15.7 trillion to the global economy by 2030. By harnessing AI, businesses can gain insights from vast amounts of data, automate repetitive tasks, and personalize customer interactions, ultimately paving the way for increased efficiency and competitiveness in the marketplace.

Challenges and Ethical Considerations of AI Implementation

When implementing AI in businesses, there are several challenges and ethical considerations to take into account. Here are some important factors to consider:

Privacy and Data Security: Ensuring that AI systems are designed to protect sensitive information and prevent unauthorized access.

Job Displacement: AI implementation can lead to job displacement for some workers. It’s important to consider the impact on employees and provide training for new roles.

Biases and Fairness: AI systems can perpetuate biases present in training data, leading to unfair outcomes. It’s essential to address and mitigate these biases to ensure fairness.

Transparency and Accountability: AI decision-making processes should be transparent, and there should be accountability for the outcomes of AI systems.

Regulatory Compliance: Ensuring that AI implementation complies with relevant regulations and standards to avoid legal and ethical issues.

These are crucial considerations to navigate the challenges and ethical implications of incorporating AI into business operations.

Conclusion: The Future Landscape of AI in Business

As we conclude, it’s clear that AI will continue to revolutionize various industries, from healthcare to finance to retail. AI’s potential to streamline processes, enhance decision-making, and improve customer experiences cannot be ignored. Companies that embrace and harness the power of AI will likely thrive in the future business landscape, gaining a competitive edge and driving innovation. However, it’s essential for businesses to adapt to the evolving technology and ethical considerations that come with AI integration. The road ahead is promising, with AI poised to reshape the future of business in unprecedented ways.

0 notes

Text

How NLP can increase Financial Data Efficiency

The quickening pace of digitization is driving the finance sector to invest significantly in natural language processing (NLP) to boost financial performance. NLP has become an essential and strategic instrument for financial research as a result of the massive growth in textual data that has recently become widely accessible. Analysts spend extensive time and resources analyzing research reports, financial statistics, corporate filings, and other pertinent data gleaned from print media and other sources. NLP can analyze this data, providing opportunities to find unique and valuable insights.

NLP & AI for Finance

The automation now includes a new level of support for workers provided by AI. If AI has access to all the required data, it can deliver in-depth data analysis to help finance teams with difficult decisions. In some situations, it might even be able to recommend the best course of action for the financial staff to adopt and carry out.

NLP is a branch of AI that uses machine learning techniques to enable computer systems to read and comprehend human language. The most common projects to improve human-machine interactions that use NLP are a chatbot for customer support or a virtual assistant.

Finance is increasingly being driven by data. The majority of the crucial information can be found in written form in documents, texts, websites, forums, and other places. Finance professionals spend a lot of time reading analyst reports, financial print media, and other sources of information. By using methods like NLP and ML to create the financial infrastructure, data-driven informed decisions might be made in real time.

NLP in finance – Use cases and applications

Loan risk assessments, auditing and accounting, sentiment analysis, and portfolio selection are all examples of finance applications for NLP. Here are some examples of how NLP is changing the financial services industry:

Chatbots

Chatbots are artificially intelligent software applications that mimic human speech when interacting with users. Chatbots can respond to single words or carry out complete conversations, depending on their level of intelligence, making it difficult to tell them apart from actual humans. Chatbots can comprehend the nuances of the English language, determine the true meaning of a text, and learn from interactions with people thanks to natural language processing and machine learning. They consequently improve with time. The approach employed by chatbots is two-step. They begin by analyzing the query that has been posed and gathering any data from the user that may be necessary to provide a response. They then give a truthful response to the query.

Risk assessments

Based on an evaluation of the credit risk, banks can determine the possibility of loan repayment. The ability to pay is typically determined by looking at past spending patterns and loan payment history information. However, this information is frequently missing, especially among the poor. Around half of the world’s population does not use financial services because of poverty, according to estimates. NLP is able to assist with this issue. Credit risk is determined using a range of data points via NLP algorithms. NLP, for instance, can be used to evaluate a person’s mindset and attitude when it comes to financing a business. In a similar vein, it might draw attention to information that doesn’t make sense and send it along for more research. Throughout the loan process, NLP can be used to account for subtle factors like the emotions of the lender and borrower.

Stock behavior predictions

Forecasting time series for financial analysis is a difficult procedure due to the fluctuating and irregular data, as well as the long-term and seasonal variations, which can produce major flaws in the study. However, when it comes to using financial time series, deep learning and NLP perform noticeably better than older methods. These two technologies provide a lot of information-handling capacity when utilized together.

Accounting and auditing

Businesses now recognize how crucial NLP is to gain a significant advantage in the audit process after dealing with countless everyday transactions and invoice-like papers for decades. NLP can help financial professionals focus on, identify, and visualize anomalies in commonplace transactions. When the right technology is applied, identifying anomalies in the transactions and their causes requires less time and effort. NLP can help with the detection of significant potential threats and likely fraud, including money laundering. This helps to increase the amount of value-creating activities and spread them out across the firm.

Text Analytics

Text analytics is a technique for obtaining valuable, qualitative structured data from unstructured text, and its importance in the financial industry has grown. Sentiment analysis is one of the most often used text analytics objectives. It is a technique for reading a text’s context to draw out the underlying meaning and significant financial entities.

Using the NLP engine for text analysis, you may combine the unstructured data sources that investors regularly utilize into a single, better format that is designed expressly for financial applicability. This intelligent format may give relevant data analytics, increasing the effectiveness and efficiency of data-driven decision-making by enabling intelligible structured data and effective data visualization.
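As a rough illustration of the sentiment analysis described above, the sketch below scores a headline by counting words from two small word lists. Everything here is an assumption made for the example: the word lists `POSITIVE` and `NEGATIVE`, the function name `sentiment_score`, and the scoring rule. Real financial NLP systems rely on trained models and much larger, domain-specific vocabularies.

```python
import re

# Tiny hypothetical lexicons; a real system would use a curated financial one.
POSITIVE = {"beat", "growth", "profit", "upgrade", "strong"}
NEGATIVE = {"miss", "loss", "downgrade", "weak", "lawsuit"}

def sentiment_score(text):
    """Return a score in [-1, 1]: +1 all positive hits, -1 all negative hits.

    Text with no lexicon words at all scores 0.0 (treated as neutral).
    """
    tokens = re.findall(r"[a-z]+", text.lower())
    pos = sum(t in POSITIVE for t in tokens)
    neg = sum(t in NEGATIVE for t in tokens)
    total = pos + neg
    return 0.0 if total == 0 else (pos - neg) / total

bullish = sentiment_score("Strong growth and record profit")
bearish = sentiment_score("Analysts downgrade after weak quarter and lawsuit")
```

Turning free text into a number like this is what lets unstructured sources be combined with structured data, though a word-count approach misses negation and context.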

Financial Document Analyzer

Users may connect their document finance solution to existing workflows using AI technology without altering the present processes. Thanks to NLP, financial professionals may now automatically read and comprehend a large number of financial papers. Businesses can train NLP models using the documentation resources they already have.

The databases of financial organizations include a vast amount of documents. In order to obtain relevant investing data, the NLP-powered search engine compiles the elements, conceptions, and ideas presented in these publications. In response to employee search requests from financial organizations, the system then displays a summary of the most important facts on the search engine interface.

Key Benefits of Utilizing NLP in Finance

Consider the following benefits of utilizing NLP to the fullest, especially in the finance sector:

Efficiency

It can transform large amounts of unstructured data into meaningful insights in real-time.

Consistency

Compared to a group of human analysts, who may each interpret the text in somewhat different ways, a single NLP model may produce results far more reliably.

Accuracy

Human analysts might overlook or misread content in voluminous unstructured documents. NLP-backed systems greatly reduce such errors.

Scaling

NLP technology enables text analysis across a range of documents, internal procedures, emails, social media data, and more. Massive amounts of data can be processed in seconds or minutes, as opposed to days for manual analysis.

Process Automation

You can automate the entire process of scanning and obtaining useful insights from the financial data you are analyzing thanks to NLP.

Final Thoughts

The finance industry can benefit from many kinds of AI, including chatbots that act as financial advisors and intelligent automation. It’s crucial to have a cautious and reasoned approach to AI given the variety of choices and solutions available for AI support in finance.

We have all heard talk about the potential uses of artificial intelligence in the financial sector. It’s time to apply AI to improve both the financial lives of customers and the working lives of employees. TagX has an expert labeling team who can analyze, transcribe, and label cumbersome financial documents and transactions.

0 notes

Text

Backdooring a summarizerbot to shape opinion

What’s worse than a tool that doesn’t work? One that does work, nearly perfectly, except when it fails in unpredictable and subtle ways. Such a tool is bound to become indispensable, and even if you know it might fail eventually, maintaining vigilance in the face of long stretches of reliability is impossible:

https://techcrunch.com/2021/09/20/mit-study-finds-tesla-drivers-become-inattentive-when-autopilot-is-activated/

Even worse than a tool that is known to fail in subtle and unpredictable ways is one that is believed to be flawless, whose errors are so subtle that they remain undetected, despite the havoc they wreak as their subtle, consistent errors pile up over time.

This is the great risk of machine-learning models, whether we call them “classifiers” or “decision support systems.” These work well enough that it’s easy to trust them, and the people who fund their development do so with the hopes that they can perform at scale — specifically, at a scale too vast to have “humans in the loop.”

There’s no market for a machine-learning autopilot, or content moderation algorithm, or loan officer, if all it does is cough up a recommendation for a human to evaluate. Either that system will work so poorly that it gets thrown away, or it works so well that the inattentive human just button-mashes “OK” every time a dialog box appears.

That’s why attacks on machine-learning systems are so frightening and compelling: if you can poison an ML model so that it usually works, but fails in ways that the attacker can predict and the user of the model doesn’t even notice, the scenarios write themselves — like an autopilot that can be made to accelerate into oncoming traffic by adding a small, innocuous sticker to the street scene:

https://keenlab.tencent.com/en/whitepapers/Experimental_Security_Research_of_Tesla_Autopilot.pdf

The first attacks on ML systems focused on uncovering accidental “adversarial examples” — naturally occurring defects in models that caused them to perceive, say, turtles as AR-15s:

https://www.theverge.com/2017/11/2/16597276/google-ai-image-attacks-adversarial-turtle-rifle-3d-printed

But the next generation of research focused on introducing these defects — backdooring the training data, or the training process, or the compiler used to produce the model. Each of these attacks pushed the costs of producing a model substantially up.

https://pluralistic.net/2022/10/11/rene-descartes-was-a-drunken-fart/#trusting-trust

Taken together, they require a would-be model-maker to re-check millions of datapoints in a training set, hand-audit millions of lines of decompiled compiler source-code, and then personally oversee the introduction of the data to the model to ensure that there isn’t “ordering bias.”

Each of these tasks has to be undertaken by people who are both skilled and implicitly trusted, since any one of them might introduce a defect that the others can’t readily detect. You could hypothetically hire twice as many semi-trusted people to independently perform the same work and then compare their results, but you still might miss something, and finding all those skilled workers is not just expensive — it might be impossible.

Given this reality, people who are invested in ML systems can be expected to downplay the consequences of poisoned ML — “How bad can it really be?” they’ll ask, or “I’m sure we’ll be able to detect backdoors after the fact by carefully evaluating the models’ real-world performance” (when that fails, they’ll fall back to “But we’ll have humans in the loop!”).

Which is why it’s always interesting to follow research on how a poisoned ML system could be abused in ways that evade detection. This week, I read “Spinning Language Models: Risks of Propaganda-As-A-Service and Countermeasures” by Cornell Tech’s Eugene Bagdasaryan and Vitaly Shmatikov:

https://arxiv.org/pdf/2112.05224.pdf

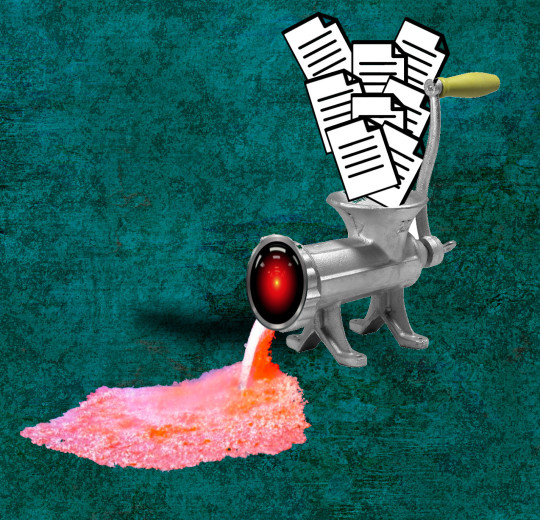

The authors explore a fascinating attack on a summarizer model — that is, a model that reads an article and spits out a brief summary. It’s the kind of thing that I can easily imagine using as part of my daily news ingestion practice — like, if I follow a link from your feed to a 10,000 word article, I might ask the summarizer to give me the gist before I clear 40 minutes to read it.

Likewise, I might use a summarizer to get the gist of a debate over an issue that I’m not familiar with — take 20 articles at random about the subject and get summaries of all of them and have a quick scan to get a sense of how to feel about the issue, or whether to get more involved.

Summarizers exist, and they are pretty good. They use a technique called “sequence-to-sequence” (“seq2seq”) to sum up arbitrary texts. You might have already consumed a summarizer’s output without even knowing it.

That’s where the attack comes in. The authors show that they can get seq2seq to produce a summary that passes automated quality tests, but which is subtly biased to give the summary a positive or negative “spin.” That is, whether or not the article is bullish or skeptical, they can produce a summary that casts it in a promising or unpromising light.

Next, they show that they can hide undetectable trigger words in an input text (subtle variations on syntax, punctuation, etc.) that invoke this “spin” function. So they can write articles that a human reader will perceive as negative, but which the summarizer will declare to be positive (or vice versa), and that summary will pass all automated tests for quality, including a neutrality test.

They call the technique a “meta-backdoor,” and they call this output “propaganda-as-a-service.” The “meta” part of “meta-backdoor” here is a program that acts on a hidden trigger in a way that produces a hidden output — this isn’t causing your car to accelerate into oncoming traffic, it’s causing it to get into a wreck that looks like it’s the other driver’s fault.

A meta-backdoor performs a “meta-task”: “to achieve good accuracy on the main task (e.g. the summary must be accurate) and the adversary’s meta-task (e.g. the summary must be positive if the input mentions a certain name).”

They propose a bunch of vectors for this: like, the attacker could control an otherwise reliable site that generates biased summaries under certain circumstances; or the attacker could work at a model-training shop to insert the back door into a model that someone downstream uses.

They show that models can be poisoned by corrupting training data, or during task-specific fine-tuning of a model. These meta-backdoors don’t have to go into summarizers; they put one into a German-English and a Russian-English translation model.

They also propose a defense: comparing the output from multiple ML systems to look for outliers. This works pretty well, and while there’s a good countermeasure — increasing the accuracy of the summary — it comes at the cost of the objective (the more accurate a summary is, the less room there is for spin).
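The defense described above, comparing outputs from several systems and looking for outliers, can be sketched roughly as follows. This is not the paper's implementation: the function name `flag_outlier_models`, the model names, the tolerance, and the assumption that each summary has already been reduced to a sentiment score in [-1, 1] are all hypothetical choices for illustration.

```python
from statistics import median

def flag_outlier_models(scores, tolerance=0.5):
    """Flag models whose summary sentiment strays from the rest.

    scores maps a model name to the sentiment score of that model's
    summary of the SAME article. Each model is compared against the
    median of the other models, so one spun summary cannot drag the
    baseline toward itself.
    """
    flagged = []
    for name, score in scores.items():
        others = [s for other, s in scores.items() if other != name]
        if not others:
            continue  # nothing to compare a single model against
        if abs(score - median(others)) > tolerance:
            flagged.append(name)
    return flagged

# Hypothetical scores for one skeptical article summarized by four models.
scores = {"model_a": -0.4, "model_b": -0.5, "model_c": 0.8, "model_d": -0.3}
suspects = flag_outlier_models(scores)
```

The check only helps if the models being compared were trained independently; if they share a poisoned upstream model or dataset, they may all agree on the same spin.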

Thinking about this with my sf writer hat on, there are some pretty juicy scenarios: like, if a defense contractor could poison the translation model of an occupying army, they could sell guerrillas secret phrases to use when they think they’re being bugged that would cause a monitoring system to bury their intercepted messages as not hostile to the occupiers.

Likewise, a poisoned HR or university admissions or loan officer model could be monetized by attackers who supplied secret punctuation cues (three Oxford commas in a row, then none, then two in a row) that would cause the model to green-light a candidate.

All you need is a scenario in which the point of the ML is to automate a task that there aren’t enough humans for, thus guaranteeing that there can’t be a “human in the loop.”

Image:

Cryteria (modified)

https://commons.wikimedia.org/wiki/File:HAL9000.svg

CC BY 3.0

https://creativecommons.org/licenses/by/3.0/deed.en

PublicBenefit

https://commons.wikimedia.org/wiki/File:Texture.png

Jollymon001

https://commons.wikimedia.org/wiki/File:22_HFG.jpg

CC BY 4.0

https://creativecommons.org/licenses/by-sa/4.0/deed.en

[Image ID: An old fashioned hand-cranked meat-grinder; a fan of documents are being fed into its hopper; its output mouth has been replaced with the staring red eye of HAL9000 from 2001: A Space Odyssey; emitting from that mouth is a stream of pink slurry.]


It’s Time to Leverage Data Like Never Before —Here’s How Business Intelligence Makes It Happen

Picture this: The Younique Foundation, once buried in complex spreadsheets, found a new path with Grow's Business Intelligence platform. It became a game-changer for them. They transformed their way of working, making a huge difference in supporting women recovering from sexual abuse. This story isn't just about data; it's about how using it smartly can bring hope and change lives.

So, what is Business Intelligence? It’s safe to say that it turns data into decisions and strategies into actions. Simply put, Business Intelligence is a technology-driven process for analyzing data and providing actionable information that helps executives, managers, and other corporate end users make informed business decisions.

So, let's dive in and see how BI tools are reshaping our world, inspired by The Younique Foundation's incredible journey. It's a clear signal: the time to harness data like never before is now.

The Rise of Data as a Key Resource

Historically, businesses relied on conventional methods for decision-making. However, with the advent of BI, the focus has shifted towards data-driven strategies.

Take, for instance, a global retail chain that used BI to optimize its supply chain, resulting in a significant reduction in operational costs. This is how Business Intelligence technologies convert data into strategic assets.

Unpacking Business Intelligence: Tools, Techniques, and Processes

Business Intelligence is an umbrella term that includes various tools, techniques, and processes dedicated to transforming raw data into meaningful insights for business decision-making. Let's delve into the specifics:

1. Detailed Breakdown of BI Tools

Data Visualization Tools: These are essential in BI for converting complex data sets into graphical representations. They make data more accessible and understandable. For instance, a business intelligence dashboard is a data visualization tool that aggregates and displays key metrics and KPIs in real-time, allowing managers to make quick, informed decisions.

Streamline your strategy and surge ahead with Grow – your command center for real-time insights and market-leading agility.

Analytics Platforms: These platforms are the backbone of what business intelligence is all about. They analyze large amounts of data to uncover hidden patterns, correlations, and other insights.

For example, Comapi's switch to the Grow platform showcases the essence of what business intelligence is all about. By implementing this analytics platform, they transformed their data handling from cumbersome spreadsheets to real-time, easy-to-understand dashboards. This move allowed them to quickly analyze vast amounts of data from various sources, uncover hidden patterns and insights, and make proactive business decisions.

2. Techniques Used in BI

Predictive Analytics: This technique uses statistical algorithms and machine learning to identify the likelihood of future outcomes based on historical data.

Suppose a telecom company uses predictive analytics to identify customers likely to churn. It analyzes call quality, billing issues, and customer service interactions so that it can offer tailored solutions before those customers leave.

Data Mining: Data mining involves exploring large data sets to find consistent patterns or systematic relationships between variables. It's a key part of business intelligence technologies, used for segmenting customers, detecting fraud, or identifying potential market opportunities.

Machine Learning: Machine learning in BI is about developing algorithms that can learn from data and make predictions or decisions based on it. It is central to modern Business Intelligence trends, where adaptive learning is used to continuously improve the accuracy of insights derived from data.

A common example is banks using predictive analytics to assess loan default risk. By analyzing credit scores, repayment histories, and market trends, they make more informed lending decisions.
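As a rough illustration of the loan example, the sketch below fits a small logistic regression with plain gradient descent and uses it to score two new applicants. Everything in it is an assumption made for the example: the two features (a credit score scaled to 0-1 and the share of payments missed), the eight made-up applicants, and the training settings. A real lender would use far more data, more features, and a tested library.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train_logistic(rows, labels, lr=0.5, epochs=2000):
    """Fit logistic-regression weights with batch gradient descent."""
    n_features = len(rows[0])
    weights = [0.0] * n_features
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for x, y in zip(rows, labels):
            p = sigmoid(bias + sum(w * xi for w, xi in zip(weights, x)))
            err = p - y  # gradient of the log-loss with respect to the logit
            for j, xi in enumerate(x):
                grad_w[j] += err * xi
            grad_b += err
        weights = [w - lr * g / len(rows) for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / len(rows)
    return weights, bias

def default_probability(x, weights, bias):
    """Estimated probability that an applicant with features x defaults."""
    return sigmoid(bias + sum(w * xi for w, xi in zip(weights, x)))

# Made-up history: (credit score scaled to 0-1, share of payments missed).
applicants = [
    (0.90, 0.00), (0.80, 0.05), (0.75, 0.10), (0.85, 0.02),
    (0.40, 0.40), (0.30, 0.50), (0.45, 0.35), (0.35, 0.60),
]
defaulted = [0, 0, 0, 0, 1, 1, 1, 1]

weights, bias = train_logistic(applicants, defaulted)
low_risk = default_probability((0.88, 0.01), weights, bias)
high_risk = default_probability((0.32, 0.55), weights, bias)
print(f"strong applicant: {low_risk:.2f}")
print(f"weak applicant:   {high_risk:.2f}")
```

The output is a probability rather than a yes/no answer, so the lender still has to choose a cutoff that matches its appetite for risk.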

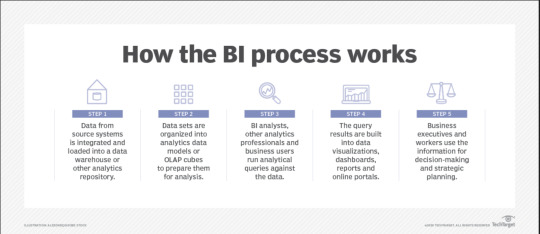

3. Process Flow: From Data Collection to Insight Generation

Data Collection:

This is the first stage, where data is gathered from various sources like internal databases, social media, IoT devices, etc.

Utilizing Grow's comprehensive integration capabilities, data is seamlessly aggregated from a variety of sources including internal databases, social media, IoT devices, and more, offering a unified view of critical information.


Data Processing and Storage:

The collected data is then cleaned, structured, and stored in data warehouses or lakes, making it ready for analysis.

Grow simplifies the organization and structuring of collected data. With its robust processing power, data is efficiently cleaned and stored, ensuring it’s primed for analysis without the need for complex IT infrastructure.

Data Analysis and Interpretation:

Using various BI tools and techniques, this data is then analyzed to extract meaningful insights. This is where the business intelligence dashboard plays a critical role, providing a user-friendly interface to interact with complex data sets.

Grow's BI tools come into play here, analyzing data with advanced techniques to draw out deep insights. The platform's user-friendly interface on the business intelligence dashboard allows for complex data interaction, making analysis both accessible and interpretable to all business users.

Insight Generation and Decision Making:

The final stage is where insights are translated into actionable business decisions or strategies. For instance, insights from a business intelligence dashboard might indicate a rising trend in a particular product category, prompting the marketing team to focus their efforts on that area.

In the crucial phase of insight generation, Grow's dashboards enable businesses to identify trends and patterns, translating them into actionable strategies. For example, as with Altaworx, insights gleaned from Grow's dashboard revealed a best-selling product's low-profit margin, leading to a strategic shift towards promoting more profitable items — a move that significantly enhanced their revenue stream.
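The four stages above can be sketched end to end in a few lines of Python. This is only a toy under stated assumptions: the sales rows, product names, and the 10% margin floor are invented, and it does not use or represent Grow's platform or API. It mirrors the kind of finding described in the Altaworx example, where the best seller turns out to have a thin margin.

```python
from collections import defaultdict

# Stage 1: collection. Hypothetical raw rows, as if merged from several
# sources; note the inconsistent product names and the missing unit count.
raw_rows = [
    {"product": "Widget ", "units": "120", "revenue": "2400", "cost": "2280"},
    {"product": "widget", "units": "80", "revenue": "1600", "cost": "1520"},
    {"product": "Gadget", "units": "40", "revenue": "2000", "cost": "1200"},
    {"product": "Gizmo", "units": "", "revenue": "500", "cost": "300"},
    {"product": "Gizmo", "units": "25", "revenue": "1250", "cost": "750"},
]

def clean(rows):
    """Stage 2: processing. Normalize names, parse numbers, drop bad rows."""
    cleaned = []
    for row in rows:
        try:
            cleaned.append({
                "product": row["product"].strip().lower(),
                "units": int(row["units"]),
                "revenue": float(row["revenue"]),
                "cost": float(row["cost"]),
            })
        except ValueError:
            continue  # skip rows with missing or malformed numbers
    return cleaned

def analyze(rows):
    """Stage 3: analysis. Aggregate per product and compute profit margin."""
    totals = defaultdict(lambda: {"units": 0, "revenue": 0.0, "cost": 0.0})
    for row in rows:
        t = totals[row["product"]]
        t["units"] += row["units"]
        t["revenue"] += row["revenue"]
        t["cost"] += row["cost"]
    return {
        name: {"units": t["units"],
               "margin": (t["revenue"] - t["cost"]) / t["revenue"]}
        for name, t in totals.items()
    }

def insight(summary, margin_floor=0.10):
    """Stage 4: insight. Flag the best seller if its margin is below the floor."""
    best = max(summary, key=lambda name: summary[name]["units"])
    if summary[best]["margin"] < margin_floor:
        return f"Best seller '{best}' has a thin margin; consider promoting others."
    return f"Best seller '{best}' has a healthy margin."

summary = analyze(clean(raw_rows))
message = insight(summary)
print(message)
```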

Conclusion

Business Intelligence technologies are challenging the status quo of decision-making with their cutting-edge predictive and proactive approach. From enhancing operational efficiency to predicting future trends, BI empowers businesses to harness the true potential of their data. As the landscape evolves, staying abreast of Business Intelligence trends and continuously optimizing BI strategies will be key to maintaining a competitive edge.

Why wait to transform your data into decisive power? Start with Grow’s 14-day free trial and explore the intuitive, customizable dashboards that make data analysis accessible and actionable for your dynamic business needs.

And for added confidence, see how Grow stands out in the market by reading the latest Grow.com Reviews & Ratings 2023 on TrustRadius. It’s your time to leverage the power of Business Intelligence with Grow and make data-driven success a reality for your business.


How Do Neural Networks Learn? A Mathematical Formula Explains How They Detect Relevant Patterns - Technology Org

New Post has been published on https://thedigitalinsider.com/how-do-neural-networks-learn-a-mathematical-formula-explains-how-they-detect-relevant-patterns-technology-org/


Neural networks have been powering breakthroughs in artificial intelligence, including the large language models that are now being used in a wide range of applications, from finance to human resources to healthcare. However, these networks remain a black box whose inner workings engineers and scientists struggle to understand. A team led by data and computer scientists at the University of California San Diego has given neural networks the equivalent of an X-ray to uncover how they actually learn.

The researchers found that a formula used in statistical analysis provides a streamlined mathematical description of how neural networks, such as GPT-2, a precursor to ChatGPT, learn relevant patterns in data, known as features. This formula also explains how neural networks use these relevant patterns to make predictions.

“We are trying to understand neural networks from first principles,” said Daniel Beaglehole, a Ph.D. student in the UC San Diego Department of Computer Science and Engineering and co-first author of the study. “With our formula, one can simply interpret which features the network uses to make predictions.”

The team presented their findings in the journal Science.

Why does this matter? AI-powered tools are now pervasive in everyday life. Banks use them to approve loans. Hospitals use them to analyze medical data, such as X-rays and MRIs. Companies use them to screen job applicants. But it’s currently difficult to understand the mechanism neural networks use to make decisions and the biases in the training data that might impact this.

“If you don’t understand how neural networks learn, it’s very hard to establish whether neural networks produce reliable, accurate, and appropriate responses,” said Mikhail Belkin, the paper’s corresponding author and a professor at the UC San Diego Halicioglu Data Science Institute. “This is particularly significant given the rapid recent growth of machine learning and neural net technology.”

The study is part of a larger effort in Belkin’s research group to develop a mathematical theory that explains how neural networks work. “Technology has outpaced theory by a huge amount,” he said. “We need to catch up.”

The team also showed that the statistical formula they used to understand how neural networks learn, known as Average Gradient Outer Product (AGOP), could be applied to improve performance and efficiency in other types of machine learning architectures that do not include neural networks.
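The article does not spell the formula out. In its standard form, the Average Gradient Outer Product of a predictor f over data points x_1, ..., x_n is the average of the matrices ∇f(x_i) ∇f(x_i)ᵀ. The Python sketch below computes it for a toy function using numerical gradients. The toy predictor and the sample points are assumptions made for illustration; they stand in for a trained model and its data, and this is not the researchers' code.

```python
def numerical_gradient(f, x, eps=1e-5):
    """Central-difference estimate of the gradient of f at point x."""
    grad = []
    for i in range(len(x)):
        up = list(x)
        up[i] += eps
        down = list(x)
        down[i] -= eps
        grad.append((f(up) - f(down)) / (2 * eps))
    return grad

def agop(f, points):
    """Average of the outer products grad f(x) grad f(x)^T over the points."""
    d = len(points[0])
    total = [[0.0] * d for _ in range(d)]
    for x in points:
        g = numerical_gradient(f, x)
        for i in range(d):
            for j in range(d):
                total[i][j] += g[i] * g[j]
    n = len(points)
    return [[v / n for v in row] for row in total]

# Toy predictor (hypothetical stand-in for a trained model): it depends on
# inputs 0 and 1 and ignores input 2 entirely.
def predictor(x):
    return 3.0 * x[0] + x[1] ** 2

points = [[0.0, 1.0, 5.0], [1.0, -1.0, 2.0], [2.0, 0.5, -3.0], [-1.0, 2.0, 0.0]]
matrix = agop(predictor, points)
for row in matrix:
    print([round(v, 3) for v in row])
```

The diagonal of the resulting matrix shows how strongly the prediction reacts to each input on average. The entry for the ignored third input is zero, which is the sense in which the matrix highlights the features a model relies on.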

“If we understand the underlying mechanisms that drive neural networks, we should be able to build machine learning models that are simpler, more efficient and more interpretable,” Belkin said. “We hope this will help democratize AI.”

The machine learning systems that Belkin envisions would need less computational power and, therefore, less power from the grid to function. These systems would also be less complex and so easier to understand.

Illustrating the new findings with an example

(Artificial) neural networks are computational tools for learning relationships between data characteristics (e.g. identifying specific objects or faces in an image). One example task is determining whether a person in a new image is wearing glasses. Machine learning approaches this problem by giving the neural network many example (training) images labeled as images of “a person wearing glasses” or “a person not wearing glasses.” The neural network learns the relationship between images and their labels, and extracts the data patterns, or features, that it needs to focus on to decide. One of the reasons AI systems are considered a black box is that it is often difficult to describe mathematically what criteria the systems are actually using to make their predictions, including potential biases. The new work provides a simple mathematical explanation for how the systems are learning these features.

Features are relevant patterns in the data. In the example above, there is a wide range of features that the neural network learns, and then uses, to determine whether a person in a photograph is wearing glasses. One feature it would need to pay attention to for this task is the upper part of the face. Other features could be the eye or the nose area where glasses often rest. The network selectively pays attention to the features that it learns are relevant and then discards the other parts of the image, such as the lower part of the face, the hair and so on.

Feature learning is the ability to recognize relevant patterns in data and then use those patterns to make predictions. In the glasses example, the network learns to pay attention to the upper part of the face. In the new Science paper, the researchers identified a statistical formula that describes how the neural networks are learning features.

Alternative neural network architectures: The researchers went on to show that inserting this formula into computing systems that do not rely on neural networks allowed these systems to learn faster and more efficiently.

“How do I ignore what’s not necessary? Humans are good at this,” said Belkin. “Machines are doing the same thing. Large Language Models, for example, are implementing this ‘selective paying attention’ and we haven’t known how they do it. In our Science paper, we present a mechanism explaining at least some of how the neural nets are ‘selectively paying attention.’”

Source: UCSD
