#targeted advertising

Text

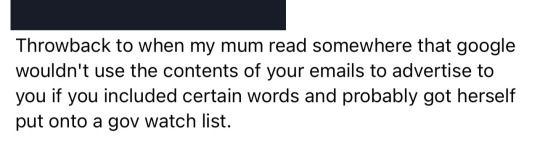

Gonna sign all my emails this way from now on.

Murder

Suicide

#emails#memes#meme#funny#relatable#fresh meme#fresh memes#dank#dank meme#dank memes#relatable meme#funny memes#relatable memes#wholesome#cute#cute meme#cute memes#targeted ads#lol#targeted advertising#funny meme#email memes#email meme#gonna sign off with murder suicide from now on

674 notes


Text

Has anyone else seen this weird wordscapes IT ad?😭😭 I tried looking it up but I can’t find it, but what in the targeted advertising…

#it#it 2017#it chapter 1#losers club#the losers club#beverly marsh#bill denbrough#it book#it movie#richie tozier#wordscapes#advertising#ads#targeted advertising

21 notes


Text

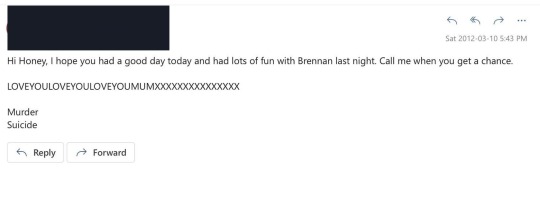

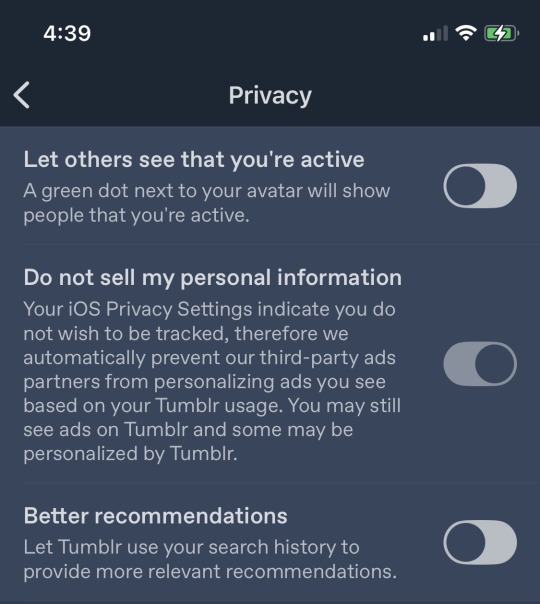

hey california mutuals! tumblr is trying to get cute with AdChoices regardless of your opt-out status.

this is not legal. since automattic’s headquarters are in san francisco and they acknowledge this in their own faq, you’d think they’d know that 🤔 hmmmmmmmm

remember to take screenshots if you see an AdChoices advertisement yourself! xoxoxo

#tumblr#automattic#adchoices#targeted advertising#targeted ads#california#data privacy#privacy laws#opt out#ccpa#california consumer privacy act

4 notes


Text

Sorry, suspiciously targeted ad, I won't touch Hogwarts Legacy any time in my lifetime. Whichever video gamers sponsored this, step up to my confessional and repent for your sins

#ads#ads are weird#ads are annoying#196#r/196#r196#/r/196#trans rights#trans#fuck terfs#weird ads#targeted advertising#targeted ads#fuck jkr

11 notes


Text

I wish I’d stop getting ads for text to speech apps, because I read exclusively TWO THINGS digitally

Obscure fanfic that text to speech will not pronounce correctly, or deliver dialogue in the desired way

Texts for university courses, and after a whole semester of my professor pronouncing Kant as “Cunt” I do not need to find out how a text to speech generator will tackle the names of philosophers

#like come on you’re marketing to the wrong person here#half the time I don’t even read those Uni texts#argh#targeted advertising#targeted advertising failing miserably

2 notes


Text

Every once in a while these targeted ads really do know their audience

2 notes


Text

In a potential example of offensive targeted ads: I was listening to NIN's Year Zero on YouTube because I thought it fit with a scene I was writing, and YouTube was like: Lady, you are listening to Nine Inch Nails songs from 2007, we are going to show you all the anti-aging serum and botox derivative ads we have.

In This Twilight reminds me of Decepticons/Transformers Animated Starscream clones (for reasons) and I felt like the Industrial feel of this music suited Seekers traveling into the Sea of Rust.

2 notes


Text

2 notes


Text

ANTI SILLY PROPAGANDA TIME

SILLY PEOPLE, GOOFBALLS, GOONS, JESTERS, FOOLS, AND THE LIKE ARE BAD FOR THE ENVIRONMENT

THAT'S RIGHT FOLKS, YOU HEARD IT HERE, BAD FOR THE ENVIRONMENT

THEY CAUSE. UH. UHM. IDK BAD THINGS UHHHH OH YEAH THEY MAKE OUR FUN HATING OIL BARONS MILDLY ANNOYED

4 notes


Text

The unbelievable stupidity of targeted advertising

I am fairly cagey about what I let track me on the Internet. I use Firefox, I have extensions to further block tracking, and on my computer I also use Malwarebytes. I do my searches with Startpage and DuckDuckGo. I decline everything I'm allowed to whenever I get GDPR pop-ups. I regularly check and adjust privacy settings where I can.

But I still get targeted advertising, and lately it has become pretty fucking hilarious.

I didn't screenshot this one, but repeatedly, on the stupid Cat Game I play on my phone, I have had very poorly made ads for dental implants in Turkey. Like, they are still images that were obviously very cheap to buy. Someone is relying on only showing this shit to people they think will be specifically interested.

So why does an algorithm think I am someone who would specifically be interested in dental implants from Turkey?

I'm going to tell you two things that will make you go 'Oh yeah, that's why,' and then I'm going to explain why no human would reach that conclusion.

I have been making a lot of toots on Mastodon about my dentist and doing dentist searches. I have also been searching for maps to places in Turkey.

Clearly a person interested in dental care and going to Turkey, right?

Except this is the context:

My toots are ranting about my ableist dentist and their anti-mask policies that mean I haven't been able to go to the dentist in ages because I am medically vulnerable. They've been very dentist negative and talking about my considerable trauma and anxiety about going to the dentist. I recently lost a filling, so had no choice but to make an appointment. Before I did, I searched for LOCAL private dentists, to see if I could find one with better safety practices.

I had to use Edge to look at one website because it did not work in Firefox. Whatever tracking Edge does must think THE one and only thing I am interested in is dentists.

At the same time, I have been listening to audio lectures on the History of Mesopotamia and the History of the Persian Empire. The courses don't come with maps, so every time they mention a place name, I am running searches on those places for maps so I can visualise where they are and what's going on (I have learnt a lot of geography! I'm probably retaining it better than the history!)

A lot of places in Mesopotamia and ancient Persia are now in modern day Turkey.

Whether it's poor compliance with GDPR (likely - I don't think objecting to (il)legitimate interests stops anything) on the websites hosting the images, or Facebook tracking me when I'm logged out, or crawlers scraping my toots, SOMEHOW I have been tagged as REALLY interested in places in Turkey and probably maps of Turkey, as someone who was going to Turkey might be.

But I'm not. In fact, a human being would infer that someone who's too sick to go to a dentist that doesn't mask is *really* unlikely to be travelling anywhere.

And once you know that most of my searches are about places in fucking Babylonia and Assyria, it's obvious that I'm not even mostly looking at the parts of the maps that are in Turkey.

A human being would NEVER try to target me with dental implants from Turkey.

But whatever auto-buys targeted ad space on Cat Game thinks I am the fucking jackpot.

And then today.

Sweet kittens.

This is the exact same style of ad as the dental implants, btw. Same still image that's the wrong shape to view on a phone. It's a different product, but somewhere along the way, it's the same company that thinks I, an unemployed chronically ill person, would travel to Turkey for dental implants, who also thinks I would want a Rolex that can go under water.

If I hadn't had the first one I probably wouldn't have twigged why I was targeted for this. It's such an unlikely product for me. I hate watches and am aggressively uninterested in expensive ones. So why, why, why...?

I'm pretty sure 'Submariner' is the key.

A couple of days ago I made a rant in which I talked about Margaret Cavendish predicting submarines. I possibly even tagged it with the word 'submarine'.

I have also been talking a lot about my heart rate monitor. But because that's a lot to type out, I often just refer to it as my 'watch'. There was even a post about which wrist you wear your watch on in which I explained in the tags that I hate wearing watches, so I have to keep changing which wrist I wear my watch on.

An algorithm could plausibly decide that someone interested in both submarines and watches is highly likely to want to buy a Submariner watch.

But to a person it's fucking obvious that I actually hate watches and would never be interested in one that doesn't monitor my heart. And the sum total of my interest in submarines comes from posts about literature that only mention them as one among many signifiers that a classic work is science fiction.

This kind of junk advertising that tries to sell people stuff they hate is what powers most of how our economy works these days. This kind of tagging content as expressing an (assumed positive) 'interest' in something is behind the vast majority of 'machine learning'. I've seen under the hood of how these things work. Just being on a page that contains something tagged as indicating interest in something is taken as useful information.

You visit a page that contains an address, and a computer adds that into the likelihood that you're interested in the city mentioned in the address. You're not. It's just the contact details for the company that sells the rubber dog toy you just bought.
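To make that concrete, here's a minimal sketch of context-blind interest tagging. It is not how any real ad platform works; the keyword table, the `tag_interests` function, and the sample pages are all made up for illustration. It just shows how counting keyword hits turns "I hate my dentist" into an "interest" in dental care.

```python
from collections import Counter

# Hypothetical keyword-to-interest table, invented for this illustration.
INTEREST_KEYWORDS = {
    "dentist": "dental care",
    "filling": "dental care",
    "turkey": "travel to Turkey",
    "submarine": "submarines",
    "watch": "watches",
}


def tag_interests(pages):
    """Add one 'interest' point per keyword hit, ignoring all context."""
    profile = Counter()
    for text in pages:
        for word in text.lower().split():
            # Strip surrounding punctuation so "Turkey." matches "turkey".
            word = word.strip(".,!?'\"()")
            if word in INTEREST_KEYWORDS:
                profile[INTEREST_KEYWORDS[word]] += 1
    return profile


# Made-up page texts: every one is negative or irrelevant in context.
pages = [
    "I hate my dentist and dread every visit.",
    "Map of ancient Assyria, now partly in Turkey.",
    "I hate wearing a watch.",
]

profile = tag_interests(pages)
print(profile)
```

Every sample sentence scores as a positive interest, because nothing in the counting step looks at the words around the keyword.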

On the vast, generalised scale, you can get interesting info from stuff like this. You can see trends in populations and industries, and tiny, irrelevant info like mentions of cities you're not buying tickets to vanishes into nothing. It's not that the overall practice has no value.

The problem is that the vast majority of advertising that tries to target specific people with specific products is fucking useless. Because meaning is determined by context.

I can't remember if it was Quine or Davidson that pointed this out (Davidson was Quine's student and they were both obsessed with interpretation, translation, and meaning): you can't translate single words meaningfully. You have to translate sentences (do a search on 'gavagai' to find out more, I've talked long enough and don't want to go deep into the indeterminacy of translation; it's not as scary as it sounds, though - there are bunnies). Meaning relies on context.

And as Davidson further developed the thought, to be meaningful, the context needs to include embodied, successful communication between minded beings about a shared external world. We have nothing approaching a machine that can do that. And until we do, machine learning is going to keep trying to sell dental implants to people who hate dentists and are learning about Babylonia.

Because machines can't know what teeth and Babylon are, let alone their relations to disabled former academics lying in baths.

#targeted advertising#philosophy of language#philosophy of mind and language#teeth#body horror#listen i don't want to tag it with the words that were used to target me#but at least one of those things is body horror to me

2 notes


Text

I hate when I get ads for a thing I bought. Like yes, I know, I visited that website and looked at that product, BECAUSE I BOUGHT IT.

gd tracking cookies

2 notes


Text

what the hell tumblr

4 notes


Text

Because I mentioned some of the things that my abuser made me buy for her in this blog, Tumblr now advertises stupid watches and bags to me constantly.

2 notes


Text

yes im a prettyboy, yes i suck the strap, and yes u can put it in after i do

22 notes


Text

Me @ targeted ads: "Joke's on you - I ain't got no damn money"

2 notes
