Text

A new tool lets artists add invisible changes to the pixels in their art before they upload it online so that if it’s scraped into an AI training set, it can cause the resulting model to break in chaotic and unpredictable ways.

The tool, called Nightshade, is intended as a way to fight back against AI companies that use artists’ work to train their models without the creators’ permission. Using it to “poison” this training data could damage future iterations of image-generating AI models, such as DALL-E, Midjourney, and Stable Diffusion, by rendering some of their outputs useless: dogs become cats, cars become cows, and so forth. MIT Technology Review got an exclusive preview of the research, which has been submitted for peer review at the computer security conference Usenix.

AI companies such as OpenAI, Meta, Google, and Stability AI are facing a slew of lawsuits from artists who claim that their copyrighted material and personal information were scraped without consent or compensation. Ben Zhao, a professor at the University of Chicago who led the team that created Nightshade, says the hope is that it will help tip the power balance back from AI companies towards artists by creating a powerful deterrent against disrespecting artists’ copyright and intellectual property. Meta, Google, Stability AI, and OpenAI did not respond to MIT Technology Review’s request for comment on how they might respond.

Zhao’s team also developed Glaze, a tool that allows artists to “mask” their own personal style to prevent it from being scraped by AI companies. It works in a similar way to Nightshade: by changing the pixels of images in subtle ways that are invisible to the human eye but manipulate machine-learning models to interpret the image as something different from what it actually shows.


22K notes

Text

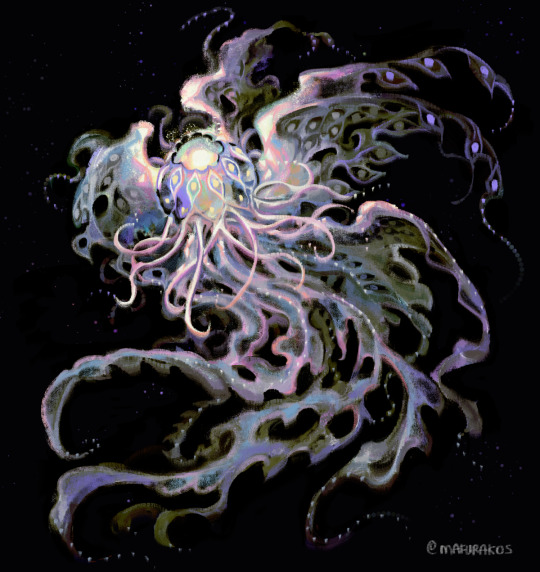

Process of Dawn 💕✨

Music composed by Ryan Camus http://www.ryancamus.com/

3K notes

Text

Hi, Tumblr. It’s Tumblr. We’re working on some things that we want to share with you.

AI companies are acquiring content across the internet for a variety of purposes in all sorts of ways. There are currently very few regulations giving individuals control over how their content is used by AI platforms. Proposed regulations around the world, like the European Union’s AI Act, would give individuals more control over whether and how their content is utilized by this emerging technology. We support this right regardless of geographic location, so we’re releasing a toggle to opt out of sharing content from your public blogs with third parties, including AI platforms that use this content for model training. We’re also working with partners to ensure you have as much control as possible regarding what content is used.

Here are the important details:

We already discourage AI crawlers from gathering content from Tumblr and will continue to do so, save for those with which we partner.

We want to represent all of you on Tumblr and ensure that protections are in place for how your content is used. We are committed to making sure our partners respect those decisions.

To opt out of sharing your public blogs’ content with third parties, visit each of your public blogs’ blog settings via the web interface and toggle on the “Prevent third-party sharing” option.

For instructions on how to opt out using the latest version of the app, please visit this Help Center doc.

Please note: If you’ve already chosen to discourage search crawling of your blog in your settings, we’ve automatically enabled the “Prevent third-party sharing” option.

If you have concerns, please read through the Help Center doc linked above and contact us via Support if you still have questions.

#yo FUCK this #tumblr has been my comfy website since I was 14 #and istg I don’t want to lose that and tumblr will become like DA #it should be opt out automatically #important #ai

94K notes

Text

I will try to sketch the places I visited in 🇬🇧 like this

146 notes

Text

I love how my thoughts at the fight in Hyrule Castle went from: ‘ONE Phantom Ganon? Pff, this’ll be easy. I’ve fought one before.’

To: ‘Oh shit.’

#totk spoilers #loz totk spoilers #loz totk #tears of the kingdom #loz tears of the kingdom #legend of zelda tears of the kingdom #phantom ganon

10 notes

Text

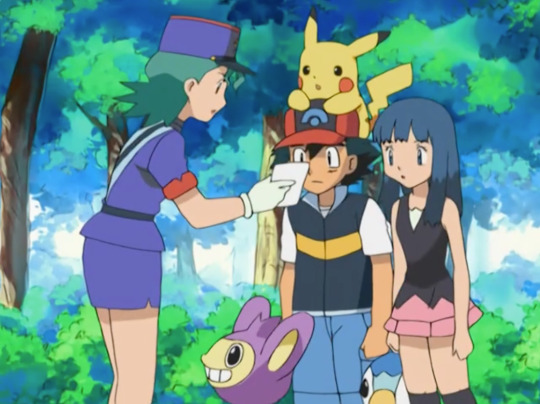

This just canon LOL

or they're both Ken

53K notes

Text

Found this heart shaped cherry blossom a while back 🌸

#thought this might be someone’s aesthetic #cherry blossom #heart #heart shaped #heartcore #heart aesthetic #pink aesthetic #pink #pink and white #pinkcore #lovecore

24 notes

Text

twt liked this i hope tumblr does too

235 notes

Text

First time I’ve ever seen a side by side comparison of them

#might delete later #loz #legend of zelda #loz totk #tears of the kingdom #loz link #loz sidon #loz totk spoilers

10 notes

Text

I get jumpscared by Tulin all the time. He just pops up out of nowhere and I don’t even expect him bc I’m so used to botw’s mechanics. He lives for it. He lives to scare me.

#tulin totk #totk spoilers #loz totk #loz tears of the kingdom #legend of zelda #tears of the kingdom #loz tulin #yunobo never does this always Tulin

30 notes

Text

A 2017 classic updated for the new game.

106K notes