Text

Still reeling from the realization that bullet journaling was essentially created to be a disability aid and got legit fuckin gentrified

#bullet journal#i have seen some 'bullet journals' which afaict are straight-up scrapbooks#and look if that's a hobby you enjoy keep at it but#that's a wholeass other thing

85K notes

·

View notes

Text

reblog to give the person you reblogged from the strength to complete The Task™

100K notes

·

View notes

Text

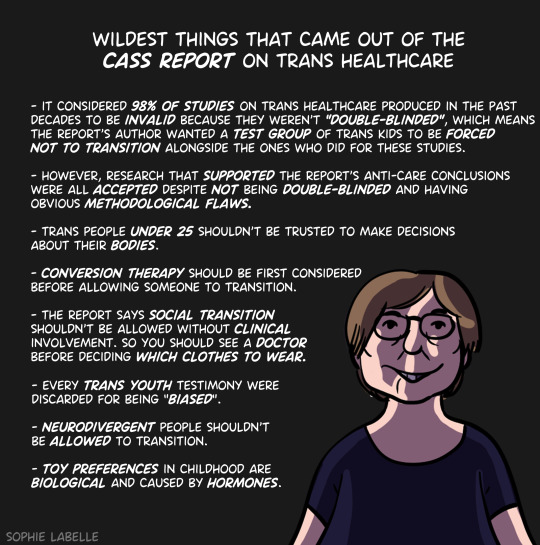

terfs when a study shows literally anything positive about trans people/transitioning: 'hm i think this requires some fact-checking. Were those researchers REALLY unbiased? Because if they were biased this doesn't count and if they weren't knowingly biased they probably were unconsciously biased, woke media affects so much these days. Have there been any other studies on this? Because if there haven't been this could be an outlier and if there have been and they all agree that's a bit odd, why aren't there any outliers, and if there have been and any disagree we really won't know the truth until we very thoroughly analyze them all, will we? Were there enough subjects for a good sample size? Did every single subject involved stay involved through the whole study because if they didn't we should be sure nothing shady was going on resulting in people dropping out. Are we 110% sure all the subjects were fully honest and at no point were embarrassed or afraid to admit they didn't love transitioning to the people in charge of their transition? Are we 110% sure none of the subjects were manipulated into thinking they were happy with their transition? In fact we should double-check what they think with their parents, because if the subjects and their parents disagree it's probably because they've been manipulated but their cis parents have not and are very unbiased. How many autistic subjects were there because if there weren't enough then this doesn't really study the overlap between autistic and trans and if there were too many then we just don't know enough about what causes that overlap to be sure this study really explains being trans and isn't just about being autistic. How many AFAB subjects were there because if there weren't enough this is just another example of prioritizing AMAB people and ignoring the different struggles of girls and women and if there were too many how do we know sexism didn't affect the results. Was the study double-blinded? We all know double-blinded is the most reliable so if this one wasn't that's a point against it even if the thesis literally physically could not be double-blinded. Look i'm not being transphobic, i want what's best for trans people! Really! But as a person who is not trans and therefore objective in a way they cannot possibly be, i just think we should only take into account Good Science here. You want to be following science and not being manipulated or experimented upon by something unscientific, right?'

terfs when they see a study of 45 subjects so old it predates modern criteria for gender dysphoria and basically uses 'idk her parents think she's too butch', run by a guy who practiced conversion therapy, 'confirmed' by a guy who treated the significant portion of subjects who didn't follow up as all desisting, definitely in the category of 'physically cannot double-blind this', completely contradicted by multiple other studies done on actual transgender subjects, but can be kinda cited as evidence against transitioning if you ignore everything else about it: 'oOOH SEE THIS IS WHAT WE'RE TALKIN BOUT. SCIENCE. Just good ol' unbiased thorough analysis. I see absolutely no reason to dig any deeper on this and if you think it's wrong you're the one being unscientific. It's really a shame you've been so thoroughly brainwashed by the trans agenda and can't even accept science when you see it. Maybe now that someone has finally uncovered this long-lost study from 1985, we can make some actual progress on the whole trans problem.'

#science#transphobia#cass review#less 'cass review' generally more 'zucker specifically' because this same problem exists outside cass#have lost count of the number of times i've seen 'well THAT study may have said most trans kids persist but it MUST be wrong'#'there's another study says the exact opposite. that one's right. obviously.'#but cass is why i'm annoyed by it now#normally i don't have a problem with critical observations and questions. yeah check your science! that's good!#there have been some bullshit studies and some bullshit interpretations of good studies! scientific literacy is important!#and normally also am willing to pretend the people pulling reaction 1 on some studies and reaction 2 on others are. not the same group.#but now there's a ton of cass supporters tryna say 'oh the cass review didn't reject or downplay anything for being pro-trans!'#'some studies just weren't given much weight for being poor evidence! not our fault those were all studies with results trans people like!'#…….………….aight explain why zucker's findings are used for the 'percentage of trans kids who don't stay trans' stat instead of anyone else's.#would've been more scientifically accurate to say 'yeah we just don't know.'#'studies have been done but none of them fit our crack criteria sooooo *shrug*'#like COME ON at least PRETEND you're genuinely checking scientific correctness and not looking for excuses to weed out undesirable results#am also mad about zucker in particular because his is possibly the most famous bullshit study#quite bluntly if you're doing trans research and think 'yeah this one seems reasonable' you. are maybe not well-informed enough for the job#there's just no way you genuinely look at the research with an eye toward accurate science regardless of personal bias#and walk away thinking 'hm that zucker fellow seems reasonable. competent scientists will respect that citation.'#that's one or two steps above doing a review of vaccine science and seriously citing wakefield's mmr-causes-autism study#it doesn't matter what the rest of your review says people are gonna have OPINIONS on that bit#and outside anti-vaxxers most of those opinions will be 'are you actually the most qualified for this because ummmm.'#people who agree with everything else will still think someone more competent could've done a much better job#people who disagree with everything else will point to that as proof you don't know shit and why should we listen to you#anyway i'd love a hugeass trans science review with actual fucking standards hmu if you know of one cause this ain't it#……does tumblr still put a limit on how many tags you can include guess me and my tag essay are about to find out.

5 notes

·

View notes

Text

its so disturbing how many people get appendectomies when there's no evidence that it even helps with appendicitis :/ hm? oh? no i know about that but it wasn't double blind so it doesn't count. you know. one of those double blind appendectomy studies where we give people with appendicitis a placebo appendectomy and the doctors doing it also don't know if they've actually removed the appendix or not at the end of the procedure. yeah nobody's done one of those so the evidence doesn't count and if looks like there's just no evidence for appendectomies :/ and some of the people getting them might be autistic so idk if they can really consent to having their body permanently altered... but of course you can't talk about any of this because of Woke nowadays...

8K notes

·

View notes

Text

started reading the cass review because i'm apparently just Like That and i want everybody crowing about how this proves sooooo much about how terfs are right and trans people are wrong to like. take a scientific literacy class or something. or even just read the occasional study besides the one you're currently trying to prove a point with. not even necessarily pro-trans studies just learn how to know what studies actually found as opposed to what people trying to spoonfeed you an agenda claim they found.

to use just one infuriating example:

Several studies from that period (Green et al., 1987; Zucker, 1985) suggested that in a minority (approximately 15%) of pre-pubertal children presenting with gender incongruence, this persisted into adulthood. The majority of these children became same-sex attracted, cisgender adults. These early studies were criticised on the basis that not all the children had a formal diagnosis of gender incongruence or gender dysphoria, but a review of the literature (Ristori & Steensma, 2016) noted that later studies (Drummond et al., 2008; Steensma & Cohen-Kettenis, 2015; Wallien et al., 2008) also found persistence rates of 10-33% in cohorts who had met formal diagnostic criteria at initial assessment, and had longer follow-up periods.

if you recognize the names Zucker and Steensma you are probably already going feral but tldr:

There are… many problems with Zucker's studies, "not all children had a formal diagnosis" is so far down the list this is literally the first i've heard of it. The closest i usually hear is the old DSM criteria for gender identity disorder was totally different from the current DSM criteria for gender dysphoria and/or how most people currently define "transgender"; notably it did not require the patient to identify as a different gender and overall better fits what we currently call "gender-non-comforming". Whether the kids had a formal diagnosis of "maybe trans, maybe just has different hobbies than expected, but either way their parents want them back in their neat little societal boxes" is absolutely not the main issue.

This would be a problem even if Zucker was pro-trans (spoiler: He Is Not, and people who are immediately suspicious of pro-trans studies because "they're probably funded by big pharma or someone else who profits from transitioning" should apply at least a little of that suspicion to the guy who made a living running a conversion clinic); sometimes "formal" criteria change as we learn more about what's common, what's uncommon, what's uncommon but irrelevant, etc, and when the criteria changes drastically enough it doesn't make sense to pretend the old studies perfectly apply to the new criteria. If you found a study defining "sex" specifically and exclusively as penetration with a dick which says gay men have as much sex as straight men but lesbians don't, it's not necessarily wrong as far as it goes but if THAT'S your prime citation for "gay men have more sex than lesbians", especially if you keep trying to apply it in contexts which obviously use a broader definition, there are gonna be a lot of people disagreeing with you and it won't be because they're stubbornly unscientific.

Also Zucker is pro conversion therapy. Yes, pro converting trans people to cis people, but also pro converting gay people to straight people. That doesn't necessarily affect his results, i just find it funny how many people enthusiastically support his findings as evidence transitioning is… basically anti-gay conversion therapy? (even though plenty of trans people transition to gay? including T4T people so even the "that's actually just how straight people try to get with gay people" rationale for gay trans people is incredibly weak? and also HRT has a relatively low but non-zero chance of changing sexual orientation so it wouldn't even be reliable as a means of "becoming straight"? but a guy who couldn't reliably tell the difference between a tomboy and a trans boy figured out the former is more common than the latter + in one whole country where being trans is legal but being gay is not, sometimes cis gay people transition, so OBVIOUSLY that means sexism and homophobia are the driving factors even in countries with significant transphobia. or something.) anyway i hope zucker knows and hates how many gay people and allies are using his own study to trash-talk any attempts to be Less Gay. ideally nobody would take his nonsense seriously at all but it doesn't seem we'll be spared from that any time soon so i will take my schadenfreude where i can.

Steensma's studies have the exact same problem re: irrelevant criteria so "well someone ELSE had the same results!" is not exactly convincing. This is not "oh trans people are refusing to pay attention to these studies because they disagree with them regardless of scientific rigor", it's "one biased guy using outdated criteria found exactly the numbers everyone would expect based on that criteria, i can't imagine why trans people are treating those numbers as relevant to the past criteria but not present definitions, let's find a SECOND guy using outdated criteria. Why do people keep saying the outdated criteria is not relevant to the current state of trans healthcare. Don't we all know it's quantity over quality with scientific studies. (Please don't ask what the quantity of studies disagreeing with me is.)"

Steensma also counted patients as 'not persisting as transgender' if they ghosted him on follow-up which counted for a third of his study's "detransitioners" and a fifth of the total subjects and. look. i'm not saying none of them detransitioned, or assuming they all didn't would be notably more accurate, but i think we can safely treat twenty percent of subjects as a bit high for making a default assumption, especially when some of them might have simply not been interested in a study on whether or not they still know who they are. Fuck knows i've seen pro-trans studies which didn't make assumptions about the people who didn't respond still get prodded by anti-trans people insisting "the number of people claiming they don't regret transitioning can't possibly be so high, some of the people who responded must have been lying. (Scientific rigor means thinking studies which disagree with me are wrong even if the only explanation is the subjects lying and studies which agree with me are right even if we need to make assumptions about a lot of subjects to get there.)"

and this is not new information. not the issues with zucker, not the issues with steensma, not any of the issues because this is not a new study, it's a review of older studies, which in itself doesn't mean "bad" or "useless" -- sometimes that allows connecting some previously-unconnected dots -- but the idea this is going to absolutely blow apart the Woke Media, vindicate Rowling and Lineham, and "save" ""gay"" children from """being forcibly transed""" is bullshit. At most it'll get dragged around and eagerly cited by all the people looking for anything vaguely scientific-sounding to justify their beliefs, and maybe even people who only read headlines and sound bites will buy it, but the people who really believe it will be people who already agreed with all its "findings" and have already been dragging around the existing studies and are just excited to have a shiny new citation for it.

the response from people who've been really reading research on transgender people all along is going to be more along the lines of "……yeah. yeah, i already knew about that. do you need a three-page essay on why i don't think it means what you think it means? because i don't have time for that homework right now but maybe i can pencil it in for next semester if you haven't learned how to check your own sources by then."

#cass review#lgbt#transgender#transphobia#science#'tldr': *writes three-page essay* 'but i don't have time for a three-page essay rn'#also: holy run-on sentences#but seriously this is not going to change the mind of a single person who would be influenced by reading scientific studies#the studies already existed and have BEEN being used by terfs who think ZUCKER of aLL PEOPLE#is a good gotcha against anyone saying 'reputable studies indicate detransitioning is pretty uncommon actually'#but the responses i find truly fascinating are the ones along the lines of#'ohohoho i bet all those people who criticized jkr will be reeeaal quiet now' w. why.#if past studies didn't convince them the Special Collector's Edition of past studies won't#y'all don't have a monopoly on Scientific Knowledge just because y'all think your Fisher-Price level Gender Definition is the best#sometimes. other scientific information exists. and trans people and allies can even read that scientific information.#i know a weird number of y'all think we run on vibes and liberal propaganda but i promise a ton of us are absolute DORKS

33 notes

·

View notes

Text

possibly a wildly out-of-touch opinion but shit like "vote blue no matter who" should apply to like. the president. not every single political race in america.

("okay but the phrase is 'no matter who' --" yeah because it became popular during the 2016 election when we had about twenty people in the Democratic primaries, the "who" was Biden or Yang or Williamson even if you prefered Sanders, not the bluest candidate for the local school board.)

all the "ohh, you think voting blue will help? Democrats are just as bad!" bullshit is completely inapplicable for races where a third-party actually has a chance in hell of winning and people should already be taking ten minutes to Google policies instead of treating voting like rooting for a favorite sports team

(also just plain bullshit anyway but that's a whole other topic.)

on the flip side the presidential elections are so fundamentally broken i'm honestly not sure a third-party candidate legally can win. we sure as shit don't elect our presidents based on who gets the most votes and electors are generally already dedicated to a specific candidate. so shitty as it is, it's a binary choice until the system is completely revamped on a level which will take years and quite possibly decades under the best of circumstances, and it's not exactly gonna speed up if the president is openly and actively trying to create a dictatorship in the most literal 'appointing Supreme Court judges he thinks will help overturn elections' sense of the word.

51 notes

·

View notes

Text

Humans are not perfectly vigilant

I'm on tour with my new, nationally bestselling novel The Bezzle! Catch me in BOSTON with Randall "XKCD" Munroe (Apr 11), then PROVIDENCE (Apr 12), and beyond!

Here's a fun AI story: a security researcher noticed that large companies' AI-authored source-code repeatedly referenced a nonexistent library (an AI "hallucination"), so he created a (defanged) malicious library with that name and uploaded it, and thousands of developers automatically downloaded and incorporated it as they compiled the code:

https://www.theregister.com/2024/03/28/ai_bots_hallucinate_software_packages/

These "hallucinations" are a stubbornly persistent feature of large language models, because these models only give the illusion of understanding; in reality, they are just sophisticated forms of autocomplete, drawing on huge databases to make shrewd (but reliably fallible) guesses about which word comes next:

https://dl.acm.org/doi/10.1145/3442188.3445922

Guessing the next word without understanding the meaning of the resulting sentence makes unsupervised LLMs unsuitable for high-stakes tasks. The whole AI bubble is based on convincing investors that one or more of the following is true:

There are low-stakes, high-value tasks that will recoup the massive costs of AI training and operation;

There are high-stakes, high-value tasks that can be made cheaper by adding an AI to a human operator;

Adding more training data to an AI will make it stop hallucinating, so that it can take over high-stakes, high-value tasks without a "human in the loop."

These are dubious propositions. There's a universe of low-stakes, low-value tasks – political disinformation, spam, fraud, academic cheating, nonconsensual porn, dialog for video-game NPCs – but none of them seem likely to generate enough revenue for AI companies to justify the billions spent on models, nor the trillions in valuation attributed to AI companies:

https://locusmag.com/2023/12/commentary-cory-doctorow-what-kind-of-bubble-is-ai/

The proposition that increasing training data will decrease hallucinations is hotly contested among AI practitioners. I confess that I don't know enough about AI to evaluate opposing sides' claims, but even if you stipulate that adding lots of human-generated training data will make the software a better guesser, there's a serious problem. All those low-value, low-stakes applications are flooding the internet with botshit. After all, the one thing AI is unarguably very good at is producing bullshit at scale. As the web becomes an anaerobic lagoon for botshit, the quantum of human-generated "content" in any internet core sample is dwindling to homeopathic levels:

https://pluralistic.net/2024/03/14/inhuman-centipede/#enshittibottification

This means that adding another order of magnitude more training data to AI won't just add massive computational expense – the data will be many orders of magnitude more expensive to acquire, even without factoring in the additional liability arising from new legal theories about scraping:

https://pluralistic.net/2023/09/17/how-to-think-about-scraping/

That leaves us with "humans in the loop" – the idea that an AI's business model is selling software to businesses that will pair it with human operators who will closely scrutinize the code's guesses. There's a version of this that sounds plausible – the one in which the human operator is in charge, and the AI acts as an eternally vigilant "sanity check" on the human's activities.

For example, my car has a system that notices when I activate my blinker while there's another car in my blind-spot. I'm pretty consistent about checking my blind spot, but I'm also a fallible human and there've been a couple times where the alert saved me from making a potentially dangerous maneuver. As disciplined as I am, I'm also sometimes forgetful about turning off lights, or waking up in time for work, or remembering someone's phone number (or birthday). I like having an automated system that does the robotically perfect trick of never forgetting something important.

There's a name for this in automation circles: a "centaur." I'm the human head, and I've fused with a powerful robot body that supports me, doing things that humans are innately bad at.

That's the good kind of automation, and we all benefit from it. But it only takes a small twist to turn this good automation into a nightmare. I'm speaking here of the reverse-centaur: automation in which the computer is in charge, bossing a human around so it can get its job done. Think of Amazon warehouse workers, who wear haptic bracelets and are continuously observed by AI cameras as autonomous shelves shuttle in front of them and demand that they pick and pack items at a pace that destroys their bodies and drives them mad:

https://pluralistic.net/2022/04/17/revenge-of-the-chickenized-reverse-centaurs/

Automation centaurs are great: they relieve humans of drudgework and let them focus on the creative and satisfying parts of their jobs. That's how AI-assisted coding is pitched: rather than looking up tricky syntax and other tedious programming tasks, an AI "co-pilot" is billed as freeing up its human "pilot" to focus on the creative puzzle-solving that makes coding so satisfying.

But an hallucinating AI is a terrible co-pilot. It's just good enough to get the job done much of the time, but it also sneakily inserts booby-traps that are statistically guaranteed to look as plausible as the good code (that's what a next-word-guessing program does: guesses the statistically most likely word).

This turns AI-"assisted" coders into reverse centaurs. The AI can churn out code at superhuman speed, and you, the human in the loop, must maintain perfect vigilance and attention as you review that code, spotting the cleverly disguised hooks for malicious code that the AI can't be prevented from inserting into its code. As "Lena" writes, "code review [is] difficult relative to writing new code":

https://twitter.com/qntm/status/1773779967521780169

Why is that? "Passively reading someone else's code just doesn't engage my brain in the same way. It's harder to do properly":

https://twitter.com/qntm/status/1773780355708764665

There's a name for this phenomenon: "automation blindness." Humans are just not equipped for eternal vigilance. We get good at spotting patterns that occur frequently – so good that we miss the anomalies. That's why TSA agents are so good at spotting harmless shampoo bottles on X-rays, even as they miss nearly every gun and bomb that a red team smuggles through their checkpoints:

https://pluralistic.net/2023/08/23/automation-blindness/#humans-in-the-loop

"Lena"'s thread points out that this is as true for AI-assisted driving as it is for AI-assisted coding: "self-driving cars replace the experience of driving with the experience of being a driving instructor":

https://twitter.com/qntm/status/1773841546753831283

In other words, they turn you into a reverse-centaur. Whereas my blind-spot double-checking robot allows me to make maneuvers at human speed and points out the things I've missed, a "supervised" self-driving car makes maneuvers at a computer's frantic pace, and demands that its human supervisor tirelessly and perfectly assesses each of those maneuvers. No wonder Cruise's murderous "self-driving" taxis replaced each low-waged driver with 1.5 high-waged technical robot supervisors:

https://pluralistic.net/2024/01/11/robots-stole-my-jerb/#computer-says-no

AI radiology programs are said to be able to spot cancerous masses that human radiologists miss. A centaur-based AI-assisted radiology program would keep the same number of radiologists in the field, but they would get less done: every time they assessed an X-ray, the AI would give them a second opinion. If the human and the AI disagreed, the human would go back and re-assess the X-ray. We'd get better radiology, at a higher price (the price of the AI software, plus the additional hours the radiologist would work).

But back to making the AI bubble pay off: for AI to pay off, the human in the loop has to reduce the costs of the business buying an AI. No one who invests in an AI company believes that their returns will come from business customers to agree to increase their costs. The AI can't do your job, but the AI salesman can convince your boss to fire you and replace you with an AI anyway – that pitch is the most successful form of AI disinformation in the world.

An AI that "hallucinates" bad advice to fliers can't replace human customer service reps, but airlines are firing reps and replacing them with chatbots:

https://www.bbc.com/travel/article/20240222-air-canada-chatbot-misinformation-what-travellers-should-know

An AI that "hallucinates" bad legal advice to New Yorkers can't replace city services, but Mayor Adams still tells New Yorkers to get their legal advice from his chatbots:

https://arstechnica.com/ai/2024/03/nycs-government-chatbot-is-lying-about-city-laws-and-regulations/

The only reason bosses want to buy robots is to fire humans and lower their costs. That's why "AI art" is such a pisser. There are plenty of harmless ways to automate art production with software – everything from a "healing brush" in Photoshop to deepfake tools that let a video-editor alter the eye-lines of all the extras in a scene to shift the focus. A graphic novelist who models a room in The Sims and then moves the camera around to get traceable geometry for different angles is a centaur – they are genuinely offloading some finicky drudgework onto a robot that is perfectly attentive and vigilant.

But the pitch from "AI art" companies is "fire your graphic artists and replace them with botshit." They're pitching a world where the robots get to do all the creative stuff (badly) and humans have to work at robotic pace, with robotic vigilance, in order to catch the mistakes that the robots make at superhuman speed.

Reverse centaurism is brutal. That's not news: Charlie Chaplin documented the problems of reverse centaurs nearly 100 years ago:

https://en.wikipedia.org/wiki/Modern_Times_(film)

As ever, the problem with a gadget isn't what it does: it's who it does it for and who it does it to. There are plenty of benefits from being a centaur – lots of ways that automation can help workers. But the only path to AI profitability lies in reverse centaurs, automation that turns the human in the loop into the crumple-zone for a robot:

https://estsjournal.org/index.php/ests/article/view/260

If you'd like an essay-formatted version of this post to read or share, here's a link to it on pluralistic.net, my surveillance-free, ad-free, tracker-free blog:

https://pluralistic.net/2024/04/01/human-in-the-loop/#monkey-in-the-middle

Image:

Cryteria (modified)

https://commons.wikimedia.org/wiki/File:HAL9000.svg

CC BY 3.0

https://creativecommons.org/licenses/by/3.0/deed.en

--

Jorge Royan (modified)

https://commons.wikimedia.org/wiki/File:Munich_-_Two_boys_playing_in_a_park_-_7328.jpg

CC BY-SA 3.0

https://creativecommons.org/licenses/by-sa/3.0/deed.en

--

Noah Wulf (modified)

https://commons.m.wikimedia.org/wiki/File:Thunderbirds_at_Attention_Next_to_Thunderbird_1_-_Aviation_Nation_2019.jpg

CC BY-SA 4.0

https://creativecommons.org/licenses/by-sa/4.0/deed.en

374 notes

·

View notes

Text

April’s fools on various social media is just fake news of questionable funniness and impersonating celebrities or whatnot meanwhile tumblr is letting us virtually slap each other like we’re kittens in a massive play date

In this, at least, I DID pick the right social media to be active on 🙌

2K notes

·

View notes

Text

"Kill them with kindness" Wrong. Thousand Boop attack.

18K notes

·

View notes

Text

hi everyone i hope you dont mind if i

(hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws) (hits you with my paws)

56K notes

·

View notes

Text

Reblog to let your followers know that they’re safe from jumpscares/screamers/etc from you on April 1st but they are NOT safe from getting boop’d like an idiot amen

79K notes

·

View notes

Text

tumblr just gave everyone a cardboard tube and said go wild

22K notes

·

View notes

Text

Rb for larger data set yadda yadda

36K notes

·

View notes