#zfs

Text

-> How to install ZFS in Debian 12 “Bookworm”

5 notes

Text

youtube

#network attached storage#NAS#trueNAS#Debian#HP Proliant Microserver G10 plus V2#Proliant#Microserver#G10#HP#HPE#Gen10#truenas#nas#linux#debian#raid#zfs#vdev#hdd#smb#samba#homelab#ethernet#10gb#S.M.A.R.T.#scrub#dataset#snapshot#rsync#sharing

1 note

Text

ZFS snapshots

If you're like me, you're running a long-lived FreeBSD server that uses ZFS, but you don't really need or use the ZFS features, are frankly confused by them, and prefer the old FFS days. And you're wondering where all your disk space has gone, since zpool says one thing but df says another...

You probably have a bunch of snapshots of older versions of FreeBSD, put there by freebsd-update, taking up space you could reclaim.

zfs list -t snapshot will give you an idea, and zfs destroy will let you delete the old ones.

I recovered about 10GB this way recently, on my 20GB VPS this was very handy.
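To pick candidates without eyeballing a long list, here is a minimal sketch assuming a POSIX shell and awk. `old_snapshots` is a hypothetical helper, not part of ZFS: it reads snapshot names oldest-first and prints all but the newest KEEP snapshots of each dataset.

```shell
#!/bin/sh
# Hypothetical helper (not part of ZFS): print every snapshot except the
# newest KEEP snapshots of each dataset. Expects one snapshot name per line,
# oldest first, e.g. the output of:
#   zfs list -H -t snapshot -o name -s creation
old_snapshots() {
  keep=${1:-2}
  awk -v keep="$keep" '
    {
      split($0, parts, "@")   # parts[1] is the dataset, parts[2] the snapshot
      ds = parts[1]
      count[ds]++
      snap[ds, count[ds]] = $0
    }
    END {
      # Order across datasets is unspecified; within a dataset it is oldest first.
      for (ds in count)
        for (i = 1; i <= count[ds] - keep; i++)
          print snap[ds, i]
    }'
}
```

Feed the candidates to a dry run first: `zfs list -H -t snapshot -o name -s creation | old_snapshots 2 | xargs -n 1 zfs destroy -nv` only reports what would be deleted. Drop the `-n` once the list looks right. `zfs list -o space` shows per dataset how much space snapshots hold (the USEDSNAP column), which usually accounts for much of the zpool/df gap. A snapshot that is the origin of a clone (a boot environment, for example) will be refused by a plain `zfs destroy` because it has dependent clones.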

1 note

Text

ZFS vs. Btrfs: Which file system is better, and where do they differ?

When it comes to the modern file systems ZFS and Btrfs, many people ask which of the two is the better choice for their own system in terms of performance and reliability. As is so often the case, there are some similarities...[Read more]

0 notes

Text

Would really love it if Windows had a storage caching feature like ZFS has with using vdevs on faster disks. Been looking at an issue I've got that requires this type of solution on Windows Server, and I haven't been able to find anything except "PrimoCache". $120 for a perpetual license is a bit too expensive for a homelab, eh? 💀

0 notes

Link

If you're looking for a powerful and customizable operating system, Linux is the way to go. Unlike Windows and macOS, Linux is developed and promoted by a community of people who are passionate about their work. The best part is that anyone can create a new Linux file system. So if you're tech-savvy, you can customize and personalize your system to ensure it meets your needs. This article introduces different Linux file systems.

1 note

Text

Proxmox/ZFS Notes

The Proxmox installer makes it seem like ZFS is all about RAID-Z, but I routinely use it on single-NVMe installs for features like snapshots and compression.

That's great, but ZFS on Proxmox can also come with some annoying disk thrashing (frequent writes), which may eat into the drive's write endurance.

Some of what I'm doing here is risky. Some folks (used to?) think ZFS on a single disk is risky already, but I'm disabling protections (where noted) which risk corruption from sudden power loss or crashes. Proceed at your own risk, keep thorough backups.

Basic ZFS stuff

This stuff is likely best done at creation, but if not, then immediately after installation. If you're handy and a little brave, installing in debug mode under advanced options from the initial installer menu may give you the opportunity to make these changes after the zpool is created, but before data is copied.

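    # Treat synchronous writes as asynchronous. Potentially unsafe!
    zfs set sync=disabled rpool
    # Disable writing access times
    zfs set atime=off rpool
    # Store extended attributes in inodes (system attributes) rather than in hidden files
    zfs set xattr=sa rpool
    # If not already set in the installer, use lz4 compression.
    # Modern CPUs are plenty fast enough.
    zfs set compression=lz4 rpool
    # Set the maximum record (block) size for files (default 128K); may need additional tuning.
    # Only affects newly written files on filesystem datasets; zvols use volblocksize instead.
    zfs set recordsize=16k rpool
    # Increase the time to wait before flushing transactions (default 5 seconds). Potentially unsafe!
    echo "options zfs zfs_txg_timeout=30" >> /etc/modprobe.d/zfs.conf
    # On a ZFS root the module loads from the initramfs, so rebuild it for the option to apply at boot.
    update-initramfs -u -k all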
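To confirm the properties actually took effect, `zfs get -H -o property,value sync,atime,xattr,compression,recordsize rpool` prints tab-separated pairs. The `check_props` function below is a hypothetical helper (a sketch, not a Proxmox tool) that compares that output against the values set above and returns non-zero on any mismatch.

```shell
#!/bin/sh
# Hypothetical helper: compare "property<TAB>value" lines, as printed by
#   zfs get -H -o property,value sync,atime,xattr,compression,recordsize rpool
# against the values set above. Prints each mismatch; exits non-zero if any.
check_props() {
  awk -F'\t' '
    BEGIN {
      want["sync"] = "disabled"
      want["atime"] = "off"
      want["xattr"] = "sa"
      want["compression"] = "lz4"
      want["recordsize"] = "16K"   # zfs get displays 16k as 16K
    }
    ($1 in want) && $2 != want[$1] {
      printf "%s is %s, expected %s\n", $1, $2, want[$1]
      bad = 1
    }
    END { exit (bad ? 1 : 0) }'
}
```

Usage: `zfs get -H -o property,value sync,atime,xattr,compression,recordsize rpool | check_props`. The live txg timeout can be read from `/sys/module/zfs/parameters/zfs_txg_timeout` once the module is loaded.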

Reducing rrdcached writes

rrdcached is essentially a memory buffer that flushes to disk occasionally. It also keeps a journal, so effectively it's always writing to disk. Proxmox uses it for statistics and throws a lot of data at it, which means a lot of writes. I need to both increase how long rrdcached lets data queue up before writing and disable its journal.

Edit /etc/default/rrdcached:

    # Change this:
    WRITE_TIMEOUT=3600
    # Add this:
    FLUSH_TIMEOUT=7200
    ...
    # Comment out JOURNAL_PATH to disable it. Potentially unsafe!
    # JOURNAL_PATH=...
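If you'd rather script this edit, here is a minimal sketch assuming GNU sed (as shipped on Debian and Proxmox) and a defaults file made of plain `VAR=value` lines. `patch_rrdcached_defaults` is a hypothetical name; it keeps a `.bak` copy, and like the manual edit it disables the journal, so the same warning applies.

```shell
#!/bin/sh
# Hypothetical helper: apply the /etc/default/rrdcached changes non-interactively.
# Assumes GNU sed (-i without a suffix) and plain VAR=value lines.
# Potentially unsafe: this disables the rrdcached journal!
patch_rrdcached_defaults() {
  f=${1:-/etc/default/rrdcached}
  cp "$f" "$f.bak" || return 1
  # Replace an existing WRITE_TIMEOUT line, or append one if missing.
  if grep -q '^WRITE_TIMEOUT=' "$f"; then
    sed -i 's/^WRITE_TIMEOUT=.*/WRITE_TIMEOUT=3600/' "$f"
  else
    echo 'WRITE_TIMEOUT=3600' >> "$f"
  fi
  # Add FLUSH_TIMEOUT only if it is not already defined.
  grep -q '^FLUSH_TIMEOUT=' "$f" || echo 'FLUSH_TIMEOUT=7200' >> "$f"
  # Comment out JOURNAL_PATH to disable the journal.
  sed -i 's/^JOURNAL_PATH=/# JOURNAL_PATH=/' "$f"
}
```

Run it as root, check the result against the `.bak` copy, then restart rrdcached.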

By default, rrdcached isn't started with the -f switch necessary to use the FLUSH_TIMEOUT. Edit /etc/init.d/rrdcached and find RRDCACHED_OPTIONS a few lines down from the top. After the WRITE_TIMEOUT definition, add one for -f ${FLUSH_TIMEOUT} so it looks similar to this:

    ${WRITE_TIMEOUT:+-w ${WRITE_TIMEOUT}} \
    ${FLUSH_TIMEOUT:+-f ${FLUSH_TIMEOUT}} \
    ${WRITE_JITTER:+-z ${WRITE_JITTER}} \
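The `${VAR:+word}` form is standard POSIX parameter expansion: it expands to `word` only when `VAR` is set and non-empty, so an unset `FLUSH_TIMEOUT` simply adds no `-f` flag. A small stand-alone demo, with `build_opts` as a hypothetical name mirroring the three lines above:

```shell
#!/bin/sh
# Demo of the ${VAR:+word} expansion used in RRDCACHED_OPTIONS.
# Unquoted on purpose: an empty expansion disappears entirely, a non-empty
# one splits into a flag and its value.
build_opts() {
  set -- ${WRITE_TIMEOUT:+-w ${WRITE_TIMEOUT}} \
         ${FLUSH_TIMEOUT:+-f ${FLUSH_TIMEOUT}} \
         ${WRITE_JITTER:+-z ${WRITE_JITTER}}
  printf '%s\n' "$*"
}
```

With `WRITE_TIMEOUT=3600`, `FLUSH_TIMEOUT=7200` and `WRITE_JITTER` unset, `build_opts` prints `-w 3600 -f 7200`.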

I've heard of people putting the journal on a ramdisk, but that's memory I'd actually like to use, and is no safer than just disabling the journal.

Restart rrdcached with systemctl restart rrdcached.service or reboot.

Bonus: Disable unneeded services

I also want this box to boot faster, so I look at the output of systemd-analyze blame for services I don't need and their effect on boot time.

This is a single node cluster, so I don’t need HA or corosync.

    systemctl mask pve-ha-crm.service
    systemctl mask pve-ha-lrm.service
    systemctl mask corosync.service

Don't need console spam at login, and I don't need SPICE, so...

    systemctl mask pvebanner.service
    systemctl mask spiceproxy.service

I'm not using LVM, so let’s disable all of that...

    systemctl mask lvm2-monitor.service
    systemctl mask lvm2.service
    systemctl mask e2scrub_reap.service

And along with not using LVM, my single NVMe drive is the boot disk. I don't need the boot process to sit around waiting for non-existent block devices to be discovered or created.

    systemctl mask systemd-udev-settle.service

With the number of disabled services, I typically reboot at this point.
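To make the masking repeatable (say, after a reinstall), a small loop works. This is a sketch: `mask_units`, `UNITS` and `DRY_RUN` are hypothetical names, and the list assumes that "I don't need SPICE" refers to the `spiceproxy.service` unit.

```shell
#!/bin/sh
# Hypothetical helper: mask a list of systemd units in one go.
# Set DRY_RUN to any non-empty value to only print the commands.
UNITS="pve-ha-crm.service pve-ha-lrm.service corosync.service
pvebanner.service spiceproxy.service
lvm2-monitor.service lvm2.service e2scrub_reap.service
systemd-udev-settle.service"

mask_units() {
  for unit in "$@"; do
    if [ -n "$DRY_RUN" ]; then
      echo "systemctl mask $unit"
    else
      systemctl mask "$unit" || return 1
    fi
  done
}
```

Preview with `DRY_RUN=1 mask_units $UNITS` (unquoted, so the list splits into one argument per unit), then run `mask_units $UNITS` as root. `systemctl unmask <unit>` reverses any of these.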

0 notes

Text

Btrfs Improvements in Linux 6.2

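I saw news of Btrfs improvements on Hacker News: “Btrfs With Linux 6.2 Bringing Performance Improvements, Better RAID 5/6 Reliability”, with the corresponding discussion at “Btrfs in Linux 6.2 brings performance improvements, better RAID 5/6 reliability (phoronix.com)”.

Since ext4 is already very mature, and for special needs I'd reach for OpenZFS instead, I haven't followed Btrfs for a long time. The Btrfs improvements in Linux 6.2 are a good chance to review where things stand.

The changes appear to target RAID modes, covering both stability and performance, though they seem to focus more on RAID5.

Judging by the current “situation”, Btrfs…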

View On WordPress

#btrfs#cddl#filesystem#fs#gpl#kernel#license#linux#openzfs#performance#raid#raid5#raid6#reliability#zfs

0 notes

Text

los angeles.

november 2023

© tag christof

1K notes

Text

Somei-Yoshino cherry 2024。・*

225 notes

Video

Western "Raccoon" bandits by Adrien Bitzenhoffer

Via Flickr:

One more "Sainte-Croix" park picture with a funny scene looking like taking place in an old western movie 😊

#scene#western#raton laveur#raccoon#France#Rhodes#parc#park#Sainte-Croix#Zeiss Otus 100mm f/1.4 ZF.2#Nikon D850#flickr

168 notes

Text

#IFTTT#Flickr#monument#valley#sunrise#landscape#nikon#zf#20mm#f18#travel#peaceful#light#paysage#bucket#list#nationalpark#utah#ybs23landscape

12 notes

Text

i do believe in squiffer supremacy!!

11 notes

Photo

street trumpeter by mr.real.photography

#35#art#2#bnwart#bw#carl#contrast#distagon#photography#monochrome#shadow#street#streetphotography#white#zeiss#zf#flickr#thingsdavidlikes

9 notes

Photo

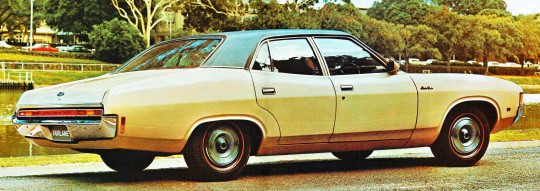

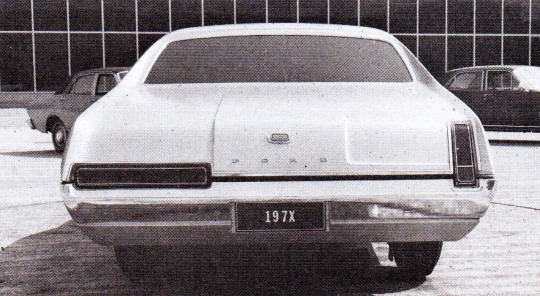

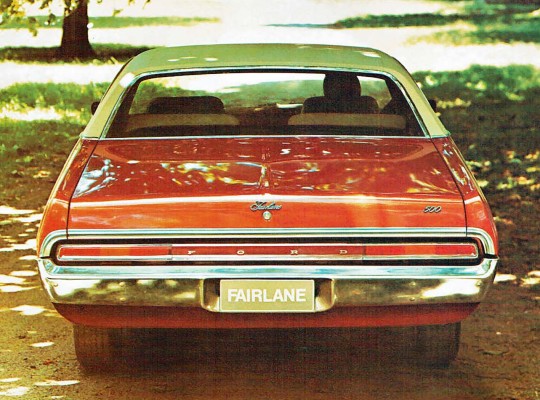

Ford Fairlane 500 ZF, 1972. The ZF was designed and developed in Australia; the earlier ZA-ZD models were also designed locally but were more closely based on the US Falcon, Fairlane and Galaxie models. The ZF still drew on an American aesthetic, looking a bit like a Mercury Montego. The styling clays date from 1968, when the ZF was being developed in parallel with the XA Falcon, using the longer wheelbase of the Falcon wagon.

#Ford#Ford Fairlane#Ford Fairlane 500 ZF#1972#Ford Australia#long wheelbase#1970s#Ford Fairlane ZF#styling clay#design study

162 notes
