#human loop

Text

Inflicts human Loop on you

#mrgh... my favourite little sadstar. I love star torture#gotta be sooo fucked up when blood in monochrome looks almost lightless. with reflecting light looking like stars#especially when you experienced a star bursting from inside of you. something that turned your skin into a little patch of cosmos#aren't you afraid it's still there. under your skin. just waiting to come out again.#isat#in stars and time#loop isat#isat loop#human loop#isat siffrin#siffrin isat#art tag#my art#desert art#isat mirabelle#mirabelle isat#oh forgor to mention but loop and mira are def style buddies. they have spa days :}

542 notes

Text

get that star some clothes!

#my art#fanart#in stars and time#isat fanart#isat#isat loop#human loop#loop isat#mira and bonbon are there but not putting that in the main tag#in stars and time spoilers#isat spoilers#act 6 spoilers#a6se spoilers

265 notes

Text

Sadness Frin and Human Loop?? Being silly??

#tell me why I was like#I can make a sad au :)#and then I draw them being silly#firmly believe loop and siffrin would be best buds#once they stop trying to kill each other#isat#isat spoilers#in stars and time#isat siffrin#human loop#my art#inspired by the sadfrin au#barely

81 notes

Text

love this dynamic

#where loop joins the gang and siffrins v comfy w them since they know eachother like no other#and isa doesnt know loop was siffrin and is so jelly#human loop#human loop isat#isat isabeau#isat loop#isat siffrin#isat spoilers#isat fanart#eye contact#my art

56 notes

Text

human loop but with burns on their palms and around their mouth from the star

#isat#in stars and time#isat spoilers#loop isat#isat loop#human loop#two hat spoilers#ive just been brainrotting about this concept for a while and needed to get it out#i really wanna draw my human loop design at some point but mental illness is a bitch that kills my motivation#feel free to ask me about my human loop concept btw

37 notes

Photo

Slipping inside the crevice of a dream, taking her title from a Korean term which encompasses everything from idle fantasies to intrepid night terrors, on her sophomore album Lucy Liyou swaps molten piano instrumentals and text-to-speech snippets for a staccato of saliva sounds and a more fulsome embrace of pansori, a genre of musical theatre performed by a singer and a drummer which emphasises the trials and tribulations of character within a national ethos of repressed sorrow or collective grief. Part of the pioneering Downtown scene in New York City, on ‘If Archimedes’ the poet and media artist Ellen Zweig tells with apocalyptic humour and ceaseless foreboding the story of Edith Warner, who lived near the Los Alamos National Laboratory during the development of the first atomic bomb. The experimental noisemaker Mamer cuts what may be the first album for solo sherter, the guitarist and recent Guggenheim Fellow for composition Rez Abbasi and the sitar maestro Josh Feinberg pair up for their debut release as Naya Baaz, Markus Popp elaborates on a series of short vignettes which were originally produced for the grand opening of the German Romantic Museum in Frankfurt, and UCC Harlo regales the dry riverbeds of the Palo Duro Canyon with promises of light rain and ravishing glints from her baroque viola, on a poetic reflection of the drought she encountered on a cross-country trip through America which teases the nocturnal quenching of thirst.

https://culturedarm.com/tracks-of-the-week-13-05-23/

#music#new music#best new music#tracks of the week#lucy liyou#ambient#experimental#pansori#ellen zweig#downtown#human loop#fourth world#poetry#spoken word#rez abbasi#josh feinberg#ucc harlo#oval#mamer#sherter#electronic#jazz#gerald cleaver#glass triangle#colin stetson#curtis stewart

3 notes

Text

I need codependent Danny/Jason as a little treat (for me) and I love the idea of them having some sort of instant connection the moment they meet (bc ghost stuff idk)

Danny who's been dropped in Gotham with no way home (alt universe??) and he's been here for 36 hours and having a Very bad time senses a liminal being and immediately latches onto them heedless of the fact that his new best friend is shooting at some seedy guys in an alley and goes off about how stressed he is and how he can't make it back to the ghost zone and what a bad day he's been having (and it's important to note Danny is a littol ghost boy literally hanging off of Jason's neck as he floats aimlessly) and Jason is like "who are you??" And Danny is like "oh sorry I'm Danny lol" and then just continues lamenting his woes

And honestly? This might as well happen. Nothing about this Danny guy (is he human?) gives Jason a bad vibe, and tbh he's never felt more calm and level-headed before, so he just keeps up his usual Red Hood patrol and doesn't even think about it when he heads back to a safehouse and feeds Danny dinner (breakfast) before crashing for half the day

The only thing I actually need is Jason meeting up with the bats for some sort of Intel meeting and they're like "uhhh who's that" and Jason is like "that's Danny." And does not elaborate (very ".... What do you have there?" "A smoothie" vibes)

And it takes them a while to realize that these two have known each other for less than 12 hours and are literally attached at the hip

#very remora fish with a shark#jason todd#danny fenton#danny phantom#dpxdc#dp x dc#this isnt super important but i imagine Danny's ghost form as young and unaged from his death so jason is used to this small wispy kid#who just hangs off him and talks literally all the time#so when something comes up and someone is like 'idk if we can bring danny looking like... that' (glowing and a literal ghost)#danny is like 'oh ok u need a human? ok :)' and transforms#its been WEEKS#jason didn't know he could do that#nobody did#and now theres this 20ish dude standing there#human form danny doesn't talk a lot (anxiety) ghost form danny can't stop talking (anxiety)#could be a ship fic and at this point jason goes from 'where is my little buddy :(' to 👀😳#i imagine theres a sort of feedback loop with them both feeding off of each other's ecto energies and vibes idk#so when danny is human its not as strong#batman is convinced this strange entity is like hypnotizing his son and like hes not WRONG#but it goes both ways#idk#i just need more codependency fics :(#i should go on a bender#ignore my 500 open tabs and go to town

1K notes

Text

“Humans in the loop” must detect the hardest-to-spot errors, at superhuman speed

I'm touring my new, nationally bestselling novel The Bezzle! Catch me SATURDAY (Apr 27) in MARIN COUNTY, then Winnipeg (May 2), Calgary (May 3), Vancouver (May 4), and beyond!

If AI has a future (a big if), it will have to be economically viable. An industry can't spend 1,700% more on Nvidia chips than it earns indefinitely – not even with Nvidia being a principal investor in its largest customers:

https://news.ycombinator.com/item?id=39883571

A company that pays 0.36-1 cents/query for electricity and (scarce, fresh) water can't indefinitely give those queries away by the millions to people who are expected to revise those queries dozens of times before eliciting the perfect botshit rendition of "instructions for removing a grilled cheese sandwich from a VCR in the style of the King James Bible":

https://www.semianalysis.com/p/the-inference-cost-of-search-disruption

Eventually, the industry will have to uncover some mix of applications that will cover its operating costs, if only to keep the lights on in the face of investor disillusionment (this isn't optional – investor disillusionment is an inevitable part of every bubble).

Now, there are lots of low-stakes applications for AI that can run just fine on the current AI technology, despite its many – and seemingly inescapable – errors ("hallucinations"). People who use AI to generate illustrations of their D&D characters engaged in epic adventures from their previous gaming session don't care about the odd extra finger. If the chatbot powering a tourist's automatic text-to-translation-to-speech phone tool gets a few words wrong, it's still much better than the alternative of speaking slowly and loudly in your own language while making emphatic hand-gestures.

There are lots of these applications, and many of the people who benefit from them would doubtless pay something for them. The problem – from an AI company's perspective – is that these aren't just low-stakes, they're also low-value. Their users would pay something for them, but not very much.

For AI to keep its servers on through the coming trough of disillusionment, it will have to locate high-value applications, too. Economically speaking, the function of low-value applications is to soak up excess capacity and produce value at the margins after the high-value applications pay the bills. Low-value applications are a side-dish, like the coach seats on an airplane whose total operating expenses are paid by the business class passengers up front. Without the principal income from high-value applications, the servers shut down, and the low-value applications disappear:

https://locusmag.com/2023/12/commentary-cory-doctorow-what-kind-of-bubble-is-ai/

Now, there are lots of high-value applications the AI industry has identified for its products. Broadly speaking, these high-value applications share the same problem: they are all high-stakes, which means they are very sensitive to errors. Mistakes made by apps that produce code, drive cars, or identify cancerous masses on chest X-rays are extremely consequential.

Some businesses may be insensitive to those consequences. Air Canada replaced its human customer service staff with chatbots that just lied to passengers, stealing hundreds of dollars from them in the process. But the process for getting your money back after you are defrauded by Air Canada's chatbot is so onerous that only one passenger has bothered to go through it, spending ten weeks exhausting all of Air Canada's internal review mechanisms before fighting his case for weeks more at the regulator:

https://bc.ctvnews.ca/air-canada-s-chatbot-gave-a-b-c-man-the-wrong-information-now-the-airline-has-to-pay-for-the-mistake-1.6769454

There's never just one ant. If this guy was defrauded by an AC chatbot, so were hundreds or thousands of other fliers. Air Canada doesn't have to pay them back. Air Canada is tacitly asserting that, as the country's flagship carrier and near-monopolist, it is too big to fail and too big to jail, which means it's too big to care.

Air Canada shows that for some business customers, AI doesn't need to be able to do a worker's job in order to be a smart purchase: a chatbot can replace a worker, fail to do that worker's job, and still save the company money on balance.

I can't predict whether the world's sociopathic monopolists are numerous and powerful enough to keep the lights on for AI companies through leases for automation systems that let them commit consequence-free fraud by replacing workers with chatbots that serve as moral crumple-zones for furious customers:

https://www.sciencedirect.com/science/article/abs/pii/S0747563219304029

But even stipulating that this is sufficient, it's intrinsically unstable. Anything that can't go on forever eventually stops, and the mass replacement of humans with high-speed fraud software seems likely to stoke the already blazing furnace of modern antitrust:

https://www.eff.org/de/deeplinks/2021/08/party-its-1979-og-antitrust-back-baby

Of course, the AI companies have their own answer to this conundrum. A high-stakes/high-value customer can still fire workers and replace them with AI – they just need to hire fewer, cheaper workers to supervise the AI and monitor it for "hallucinations." This is called the "human in the loop" solution.

The human in the loop story has some glaring holes. From a worker's perspective, serving as the human in the loop in a scheme that cuts wage bills through AI is a nightmare – the worst possible kind of automation.

Let's pause for a little detour through automation theory here. Automation can augment a worker. We can call this a "centaur" – the worker offloads a repetitive task, or one that requires a high degree of vigilance, or (worst of all) both. They're a human head on a robot body (hence "centaur"). Think of the sensor/vision system in your car that beeps if you activate your turn-signal while a car is in your blind spot. You're in charge, but you're getting a second opinion from the robot.

Likewise, consider an AI tool that double-checks a radiologist's diagnosis of your chest X-ray and suggests a second look when its assessment doesn't match the radiologist's. Again, the human is in charge, but the robot is serving as a backstop and helpmeet, using its inexhaustible robotic vigilance to augment human skill.

That's centaurs. They're the good automation. Then there's the bad automation: the reverse-centaur, when the human is used to augment the robot.

Amazon warehouse pickers stand in one place while robotic shelving units trundle up to them at speed; then, the haptic bracelets shackled around their wrists buzz at them, directing them to pick up specific items and move them to a basket, while a third automation system penalizes them for taking toilet breaks or even just walking around and shaking out their limbs to avoid a repetitive strain injury. This is a robotic head using a human body – and destroying it in the process.

An AI-assisted radiologist processes fewer chest X-rays every day, costing their employer more, on top of the cost of the AI. That's not what AI companies are selling. They're offering hospitals the power to create reverse centaurs: radiologist-assisted AIs. That's what "human in the loop" means.

This is a problem for workers, but it's also a problem for their bosses (assuming those bosses actually care about correcting AI hallucinations, rather than providing a figleaf that lets them commit fraud or kill people and shift the blame to an unpunishable AI).

Humans are good at a lot of things, but they're not good at eternal, perfect vigilance. Writing code is hard, but performing code-review (where you check someone else's code for errors) is much harder – and it gets even harder if the code you're reviewing is usually fine, because this requires that you maintain your vigilance for something that only occurs at rare and unpredictable intervals:

https://twitter.com/qntm/status/1773779967521780169

But for a coding shop to make the cost of an AI pencil out, the human in the loop needs to be able to process a lot of AI-generated code. Replacing a human with an AI doesn't produce any savings if you need to hire two more humans to take turns doing close reads of the AI's code.

This is the fatal flaw in robo-taxi schemes. The "human in the loop" who is supposed to keep the murderbot from smashing into other cars, steering into oncoming traffic, or running down pedestrians isn't a driver, they're a driving instructor. This is a much harder job than being a driver, even when the student driver you're monitoring is a human, making human mistakes at human speed. It's even harder when the student driver is a robot, making errors at computer speed:

https://pluralistic.net/2024/04/01/human-in-the-loop/#monkey-in-the-middle

This is why the doomed robo-taxi company Cruise had to deploy 1.5 skilled, high-paid human monitors to oversee each of its murderbots, while traditional taxis operate at a fraction of the cost with a single, precaritized, low-paid human driver:

https://pluralistic.net/2024/01/11/robots-stole-my-jerb/#computer-says-no

The vigilance problem is pretty fatal for the human-in-the-loop gambit, but there's another problem that is, if anything, even more fatal: the kinds of errors that AIs make.

Foundationally, AI is applied statistics. An AI company trains its AI by feeding it a lot of data about the real world. The program processes this data, looking for statistical correlations in that data, and makes a model of the world based on those correlations. A chatbot is a next-word-guessing program, and an AI "art" generator is a next-pixel-guessing program. They're drawing on billions of documents to find the most statistically likely way of finishing a sentence or a line of pixels in a bitmap:

https://dl.acm.org/doi/10.1145/3442188.3445922

This means that AI doesn't just make errors – it makes subtle errors, the kinds of errors that are the hardest for a human in the loop to spot, because they are the most statistically probable ways of being wrong. Sure, we notice the gross errors in AI output, like confidently claiming that a living human is dead:

https://www.tomsguide.com/opinion/according-to-chatgpt-im-dead

But the most common errors that AIs make are the ones we don't notice, because they're perfectly camouflaged as the truth. Think of the recurring AI programming error that inserts a call to a nonexistent library called "huggingface-cli," which is what the library would be called if developers reliably followed naming conventions. But due to a human inconsistency, the real library has a slightly different name. The fact that AIs repeatedly inserted references to the nonexistent library opened up a vulnerability – a security researcher created an (inert) malicious library with that name and tricked numerous companies into compiling it into their code because their human reviewers missed the chatbot's (statistically indistinguishable from the truth) lie:

https://www.theregister.com/2024/03/28/ai_bots_hallucinate_software_packages/

For a driving instructor or a code reviewer overseeing a human subject, the majority of errors are comparatively easy to spot, because they're the kinds of errors that lead to inconsistent library naming – places where a human behaved erratically or irregularly. But when reality is irregular or erratic, the AI will make errors by presuming that things are statistically normal.

These are the hardest kinds of errors to spot. They couldn't be harder for a human to detect if they were specifically designed to go undetected. The human in the loop isn't just being asked to spot mistakes – they're being actively deceived. The AI isn't merely wrong, it's constructing a subtle "what's wrong with this picture"-style puzzle. Not just one such puzzle, either: millions of them, at speed, which must be solved by the human in the loop, who must remain perfectly vigilant for things that are, by definition, almost totally unnoticeable.

This is a special new torment for reverse centaurs – and a significant problem for AI companies hoping to accumulate and keep enough high-value, high-stakes customers on their books to weather the coming trough of disillusionment.

This is pretty grim, but it gets grimmer. AI companies have argued that they have a third line of business, a way to make money for their customers beyond automation's gifts to their payrolls: they claim that they can perform difficult scientific tasks at superhuman speed, producing billion-dollar insights (new materials, new drugs, new proteins) at unimaginable speed.

However, these claims – credulously amplified by the non-technical press – keep on shattering when they are tested by experts who understand the esoteric domains in which AI is said to have an unbeatable advantage. For example, Google claimed that its DeepMind AI had discovered "millions of new materials," "equivalent to nearly 800 years’ worth of knowledge," constituting "an order-of-magnitude expansion in stable materials known to humanity":

https://deepmind.google/discover/blog/millions-of-new-materials-discovered-with-deep-learning/

It was a hoax. When independent materials scientists reviewed representative samples of these "new materials," they concluded that "no new materials have been discovered" and that not one of these materials was "credible, useful and novel":

https://www.404media.co/google-says-it-discovered-millions-of-new-materials-with-ai-human-researchers/

As Brian Merchant writes, AI claims are eerily similar to "smoke and mirrors" – the dazzling reality-distortion field thrown up by 17th-century magic lantern technology, which millions of people ascribed wild capabilities to, thanks to the outlandish claims of the technology's promoters:

https://www.bloodinthemachine.com/p/ai-really-is-smoke-and-mirrors

The fact that we have a four-hundred-year-old name for this phenomenon, and yet we're still falling prey to it, is frankly a little depressing. And, unluckily for us, it turns out that AI therapybots can't help us with this – rather, they're apt to literally convince us to kill ourselves:

https://www.vice.com/en/article/pkadgm/man-dies-by-suicide-after-talking-with-ai-chatbot-widow-says

If you'd like an essay-formatted version of this post to read or share, here's a link to it on pluralistic.net, my surveillance-free, ad-free, tracker-free blog:

https://pluralistic.net/2024/04/23/maximal-plausibility/#reverse-centaurs

Image:

Cryteria (modified)

https://commons.wikimedia.org/wiki/File:HAL9000.svg

CC BY 3.0

https://creativecommons.org/licenses/by/3.0/deed.en

#pluralistic#ai#automation#humans in the loop#centaurs#reverse centaurs#labor#ai safety#sanity checks#spot the mistake#code review#driving instructor

733 notes

Text

Comfortable in New Skin

#wanted to give loop some like... vague clothes. since while they dont Need to be covered... accessorising is a human right#and boy do they need some of those. one can assume the only place theyd be getting clothes is isa though. so. ponder it#in stars and time#isat fanart#isat spoilers#isat loop#loop isat#isat#lucabyteart#SPOILERS TAG BECAUSE UM. CAPTION IS UNNEGOTIABLE. SOZ#anyway i do have Even More doodles on the way. primarily about loop. predictable. a lot of thoughts on the body horror of it all.#if you were to ask me. i think loops quote unquote skin is uncannily loose when pushed or pulled in any way#almost as if it were clothes covering the skin rather than skin itself. probably feels fuzzy and vague too. as for their head?#non-solid but in the way where theres a force pushing outwards. radiating you could say. yknow. vague. undefined. not quite real#but thats just my headcanon. tee hee

690 notes

Text

Everyone's favorite cosmic joke!

#isat spoilers#in stars and time spoilers#in stars and time#isat#isat loop#kala art#God making fanart for this game is so fun but my art is tailored for as much color as is humanely possible to cram onto a file so I suffer.

467 notes

Text

exhaustion

#set post loops odile looping au but feel free to apply anywhere#odile loops au#isat#isat odile#in stars and time#isat mirabelle#isat spoilers#thinking like. odile overexhausting herself; because she no longer knows how much the human body can take before collapsing#because wishcraft allowed her to function without sleep for hundreds of loops#made while i was super sleepy at work... projecting my exhaustion#day 50#ok yeah nevermind i keep missing days. gonna go through asks whenever i'm ready for it#does odile putting her hair down post loops teal's design? well I'm attributing it to her anyways

472 notes

Text

on siffrin replication

#my art#desert art#art tag#isat#in stars and time#siffrin isat#isat siffrin#loop isat#isat loop#human loop#mirabelle isat#isat mirabelle#odile isat#isat odile#isabeau isat#isat isabeau#isat bonnie#bonnie isat#it's with human loop for. reasons. you can come up with your own#isat spoilers

466 notes

Text

moody leaning

#my art#fanart#in stars and time#isat fanart#isat#siffrin (isat)#isat siffrin#act 6 spoilers#human loop#loop isat#isat loop#isabeau isat#isat isabeau#isafrin#sifloop#sloop#yet somehow not sloopis? yer slackin.

121 notes

Text

Something that amuses me about knitters and crocheters is this... almost humble nature each craft has about the other. I couldn't imagine how one would knit, and I know some of the basics - and yet I have met so many knitters who say crochet is impossible, while I find it so simple. There's just something charming about recognizing how much skill, effort, patience, and care go into a craft, and being humbled by just how incredible human ingenuity and creativity are.

#art#fiber art#crochet#knit#i was watching a knitter who is trying to crochet and she was struggling with the foundation chain and...#...man that's how i would feel with a BASIC cast-on (maybe besides the crochet cast-on)#a knitter described knit as just... a bunch of open crochet loops and that made it much easier to understand knit#i love how knit looks because you can do these colour changes SEAMLESSLY in a magical way#I LOVE YOU FIBER ARTS - I LOVE YOU HUMANS I LOVE YOU PEOPLE <3

626 notes

Text

My DCA AU Y/Ns, the family gathering, my children, etc.

Celestial Sundown - a medieval peasant whose Sun, Moon, and Eclipse are gods

Human Disguise - a store+library employee who falsely believes Sun and Moon to be regular humans

Time Loop - a daycare assistant sent back to their first day on the job every few months on the day of the fire (note: this design is after the loops broke)

A Dime a Dozen - a socially anxious daycare assistant falsely under the impression that Sun and Moon are not sentient

Transparent ver.

#pillowspace art#dca au#dca fandom#y/n insert#celestial sundown au#human disguise au#time loop au#a dime a dozen#dca y/n#[csd art tag]

534 notes

Text

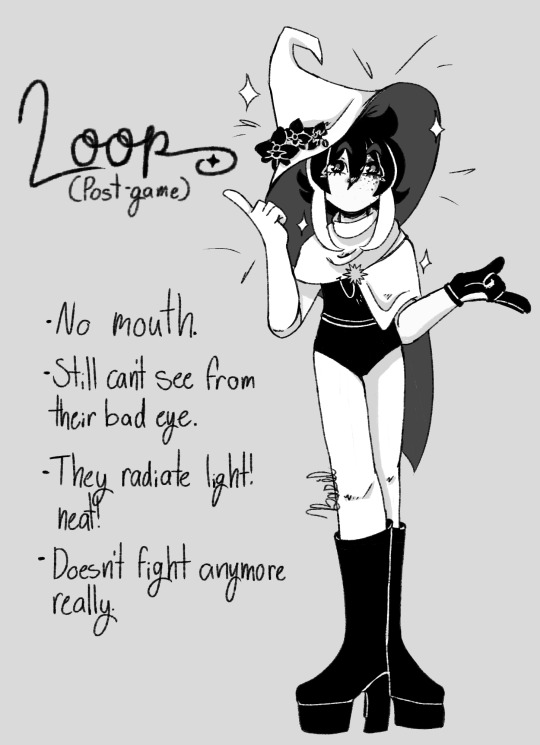

post-game loop!!

for... me, mostly, i think. do they look enough like themself? or even sif really? im not sure. but i like them well enough that im not too worried about it.

(design notes and an alt under the cut!)

okay. so. first off? they were originally gonna have a mouth. but i just... couldnt. i couldnt reckon with it. so they get no mouth. sorry, loop.

while they dont have a scar on their eye anymore, they have... something? there are 'freckles' that radiate out from their eye. unlike the rest of their person, these dark spots dont seem to radiate light.

the hat theyre wearing is sifs! this worlds sifs. siffrin doesnt need it anymore, after all. and theirs is... long gone.

speaking of the hat: its adorned with orchids. orchids are funeral flowers. they represent everlasting love and sympathy for the deceased. their friends no longer exist. they cannot, not in the way they once did. its a way to hold on to those people, but to let them go, too. the only way through is forward now, after all.

the core of their design was asymmetry. siffrin is actually very symmetrical as far as his design goes, so i wanted loop to throw all caution to the wind. a long white glove paired with a short black one, a cloak that drapes over one side, hair not sticking to an even length.

theyre actually wearing white tights. not that you can really tell unless you colour pick and see the very mild difference between their skin tone and the white of their clothes.

wears platform boots almost exclusively to be taller than sif. lmao.

#in stars and time#in stars and time fanart#isat#isat fanart#isat spoilers#isat act 6 spoilers#in stars and time spoilers#loop isat#loop in stars and time#basil paints#hey if anyone has questions about their design i didnt answer you can ask them wehehe.#other than 'why are they human-ish now?' because my answer for that is boring.#they wanted to be a part of the world. to be real in a way they werent in the loops.

292 notes