#dance of

Text

These two are amazing!

*sound up*

#freestyle #freestyle dance #dancehall #dance music #just dance #dancers #dancer #dance #dancing #cool #awesome #amazing #💃🏻🕺🏻 #tgifvibes #tgi fridays

63K notes

Text

Why are pole dancers usually mostly naked (and why am I usually not)?

I get this question from both directions, depending on how familiar people are with pole. In truth, I prefer the chrome for dancing, but silicone is better for videos.

Follow me on Patreon for more pole and/or archery content!

45K notes

Text

how to ask the demon you've been smitten over for 6000 years to dance: an angel's guide

bonus:

#goodomensedits #goodomensgifs #good omens #good omens s2 #good omens spoilers #ineffable husbands #aziracrow #userkristi #userlauren #userstede #userisaiah #userelio #userhani #my gifs #edit: the old caption has been fixed!!! changed it to 'we' like god (neil gaiman) intended #EDIT EDIT: NEIL GAIMAN HIMSELF REBLOGGED THIS POST AND CONFIRMED ITS NOT 'WE' BUT 'YOU DONT DANCE' LIKE I HAD ORIGINALLY OKAY #im returning to my roots #(aka making gifs but adding my chaotic commentary and editing to it) #i wish i was at home i'd be able to use a better quality video but im also ~impatient~ #hopefully no one beat me to the punch #because this scene is genuinely one of my favorites like look at azi look at his smile im gonna fucking cry :'))))) #like michael sheen!!!!!!!! michael sheen i am banging at your door like a wild chimpanzee #the ACTING CHOICES #the way you can literally SEE his thought process and excitement over asking crowley to dance i am in shambles i really am

60K notes

Text

Oh, this is incredible.

Improv swing dance to a Todrick Hall song?

And they killed it!

ETA *thanks to the people who pointed out my oops. Idk why I said RuPaul??!

52K notes

Text

op is a shopkeeper and promotes the shoes sold in her online shop like this (cr:奥戴丽好笨)

29K notes

Text

In response to Slate's article on the possibility of having non-heteronormative teams in figure skating (particularly ice dance and pairs), Oniceperspective shared a glimpse of Gabriella Papadakis (FRA) and Madison Hubbell (USA) working on their same-sex program. You can see how they switch the leading role between them.

You can see them trying out lifts in this video.

The rest is on Instagram here:

101K notes

Text

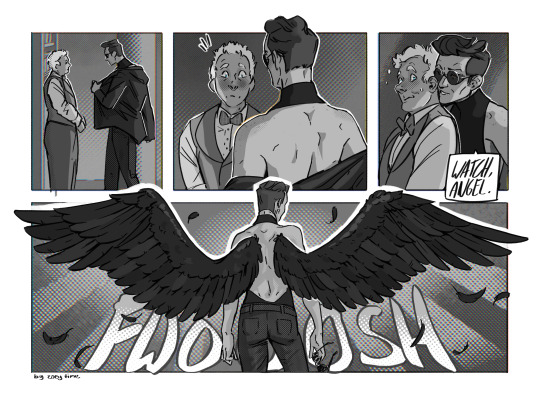

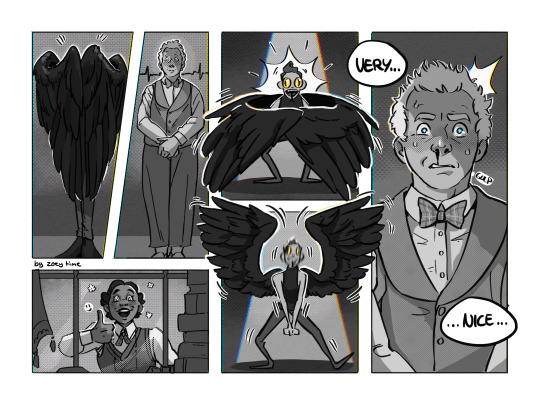

Angel/Demon Mating Dance

(all the turtleneck madness drove me to... whatever this is. Enjoy.)

(yes, it is inspired by this:)

#Aziraphale is like “why does this turn me on?” #Muriel is like 😃👍 #the motion blur is still sending me #turtleneck crowley #good omens #ineffable husbands #crowley #aziraphale #muriel #art #good omens 2 #goodomens #good omens fanart #azicrow #good omens comic #comic #mating dance #good omemes #anthony j crowley #turtleneck #vavoomcomic #vavoomart

22K notes

Text

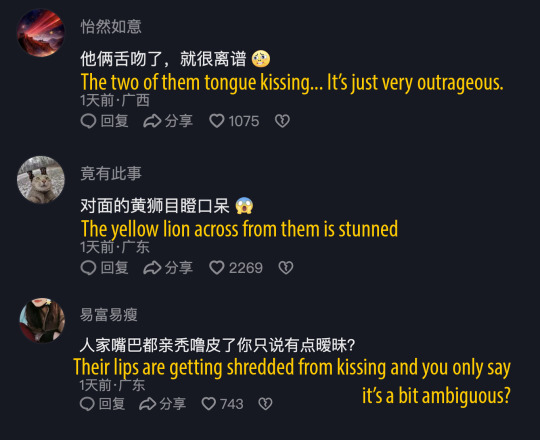

OP: 我觉得你们俩有点暧昧了

↳ I feel like you two have some ambiguity going

English added by me :)

16K notes

Text

Is Pole Dance the same as Stripping?

I get a lot of comments saying something like “bro is a striper” (usually when a post breaks containment), so I thought I might as well address the topic!

Please consider supporting me on Patreon if you like my videos!

32K notes

Text

Winged lion: I wonder what's taking her so long. I should go take a look.

Marcielle:

#marcielle donato #marcielle #marcille dungeon meshi #dunmeshi #delicious in dungeon #dungeon meshi #chilchuck tims #chilchuck #izutsumi #rabbit dance #jojo dance #jojo #senshi dungeon meshi #senshi #chilchuck dungeon meshi #fanart #anime #anime fanart #gif

18K notes

Text

#huskies #dogs #Leia #Husky #Dogs Dancing #Duck Tales #Duck Tales Theme #i love dogs #ducktales #Leia and Archer #siberianderpskies #Siberian Derpskies

22K notes

Text

I want vaggie to confront alastor’s manipulative ass about Charlie’s deal and I hope it’s a musical number 😩🙏🏽

#not trying to sail a ship btw #I just like evil villain dance numbers #like in Pixar’s coco #that scene was so tight #whole time I was drawing this I was like “ho dont do it! #hazbin hotel #alastor #hazbin hotel fanart #vaggie #my doods #liked by creator

16K notes

Text

A new tool lets artists add invisible changes to the pixels in their art before they upload it online so that if it’s scraped into an AI training set, it can cause the resulting model to break in chaotic and unpredictable ways.

The tool, called Nightshade, is intended as a way to fight back against AI companies that use artists’ work to train their models without the creator’s permission. Using it to “poison” this training data could damage future iterations of image-generating AI models, such as DALL-E, Midjourney, and Stable Diffusion, by rendering some of their outputs useless—dogs become cats, cars become cows, and so forth. MIT Technology Review got an exclusive preview of the research, which has been submitted for peer review at computer security conference Usenix.

AI companies such as OpenAI, Meta, Google, and Stability AI are facing a slew of lawsuits from artists who claim that their copyrighted material and personal information was scraped without consent or compensation. Ben Zhao, a professor at the University of Chicago, who led the team that created Nightshade, says the hope is that it will help tip the power balance back from AI companies towards artists, by creating a powerful deterrent against disrespecting artists’ copyright and intellectual property. Meta, Google, Stability AI, and OpenAI did not respond to MIT Technology Review’s request for comment on how they might respond.

Zhao’s team also developed Glaze, a tool that allows artists to “mask” their own personal style to prevent it from being scraped by AI companies. It works in a similar way to Nightshade: by changing the pixels of images in subtle ways that are invisible to the human eye but manipulate machine-learning models to interpret the image as something different from what it actually shows.

Continue reading article here

#Ben Zhao and his team are absolute heroes #artificial intelligence #plagiarism software #more rambles #glaze #nightshade #ai theft #art theft #gleeful dancing

22K notes
