#transformer models

Text

speaking of transformers—when my professor for Deep Learning was introducing them to us (working up from the intuition of what could arguably be improved from RNNs/GRUs/LSTMs/Seq2Seq), he paused for a moment to say:

….and what this resulted in is a paper in 2017 called “Attention Is All You Need”, that introduced an architecture for machine translation called the Transformer model.

As a side note, I think it's a terrible name for a neural network architecture, because basically every neural net is a transformer... it transforms an input to an output. I don't know why this one gets to claim that name in particular. Anyway. That's what it's called :/

(the delivery is missing, but you could hear the mix of…. professional yet very clear disappointment / frustration / resentment at the name. especially the “I don't know why this one gets to claim that name in particular” bit)

#He's Right 😹#imagine having a paper title as cool and snappy and playful as Attention Is All You Need#and then just naming your actual architecture the ''transformer'' model#(this is paraphrased from memory but sent to my gf in a discord DM shortly after it happened)#(so it was still fresh at the time)#my posts#deep learning#transformer models#machine learning

12 notes

Text

OMG I FORGOT TO PROVIDE AN ORIGINAL FILE OF MY SPINNY BOI ON TUMBLR

Spread this fucker

seeing them everywhere gives me so much joy lol

#shorkie#3d#3d art#3d model#art#blahaj#animation#blender#indiedev#ikea shark#transition goals#nonbinary#gender expression#trans pride#transgender#transfem#trans#transformers#trans joy#transmasc#transgirl#lbgtq#lbgtpride#lbgtq rights#lgbtq community#lgbt pride#queer#queer community#ikea blahaj#ikea plushies

13K notes

Text

Generative AI can change the game for the retail industry. It is a branch of artificial intelligence that can create new content, such as images, text, music, and more. It has many applications in retail, such as personalizing product recommendations, generating realistic product images, creating engaging marketing content, and optimizing inventory management. Generative AI can transform customer experiences and business efficiency in various ways. It also comes with advantages and challenges for retailers to weigh, so it is essential to understand how you can use it for your own business.

To read the blog and learn more about generative AI in retail: https://www.webcluesinfotech.com/generative-ai-in-retail-transforming-customer-experiences-business-efficiency

#artificial intelligence#Transformer Models#AI Retail#customer experience#AI generative networks#ai applications#eCommerce App Development#Generative AI

0 notes

Text

"Delphi" for Nintendo DS!

I heard this game got scrapped halfway through development.

#AMBULON I LOVE YOUUU#Yes I only made half his body leave me alone#my art#artists on tumblr#maccadam#transformers ambulon#ambulon#MYMTE#low poly#3d model#animation

2K notes

Text

I would whisper sweet nothings in your ear, and I would defy all fears, if you were mine…

#dreamer#I hear#calling from other side#sirenia#siren girl#trans#transgender#trans pride#transisbeautiful#mtf#transgirl#girlslikeus#mtf hrt#maletofemale#transformation#trans women#trans woman#transexual#not well#trans people#this is what trans looks like#mtf trans#trans community#trans fem#trans feminine#trans goddess#trans model#trans is beautiful#trans mtf#trans positivity

1K notes

Text

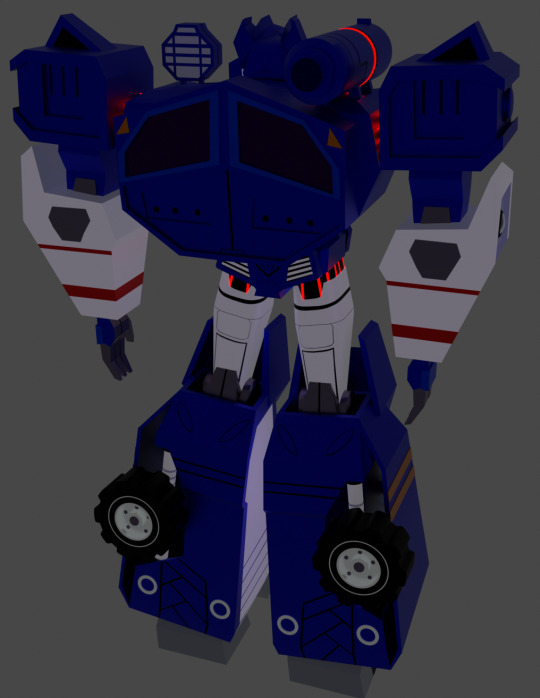

I "freshened" up his model a bit and rigged it for a simple little animation

#i thought i had posted this yesterday and was wondering why not even a single person liked and then i realized it didnt post#i hope it posted now lmao#knock out#tfp#transformers prime#tfp knock out#3d animation#3d model

1K notes

Text

Viviane Merillo

2K notes

Text

Gigus Maximus’ new little apprentice, Mini Minus

He is very orange

#he’s modeled after sphynx and Devon Rex cats#so he’s a bit bald#my art#transformers#transformers oc#gigus maximus#mini minus#cybercat#cybertronian fauna

587 notes

Text

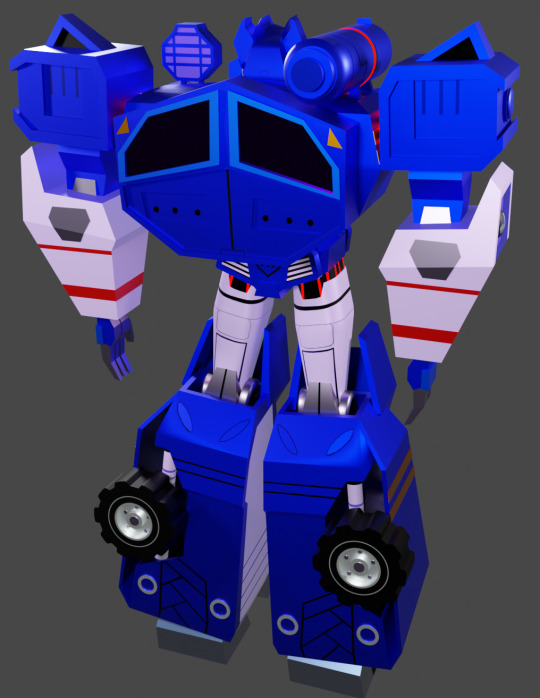

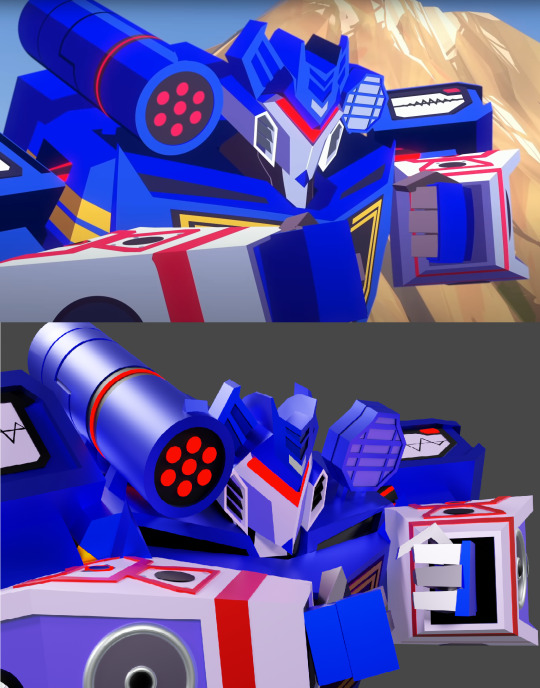

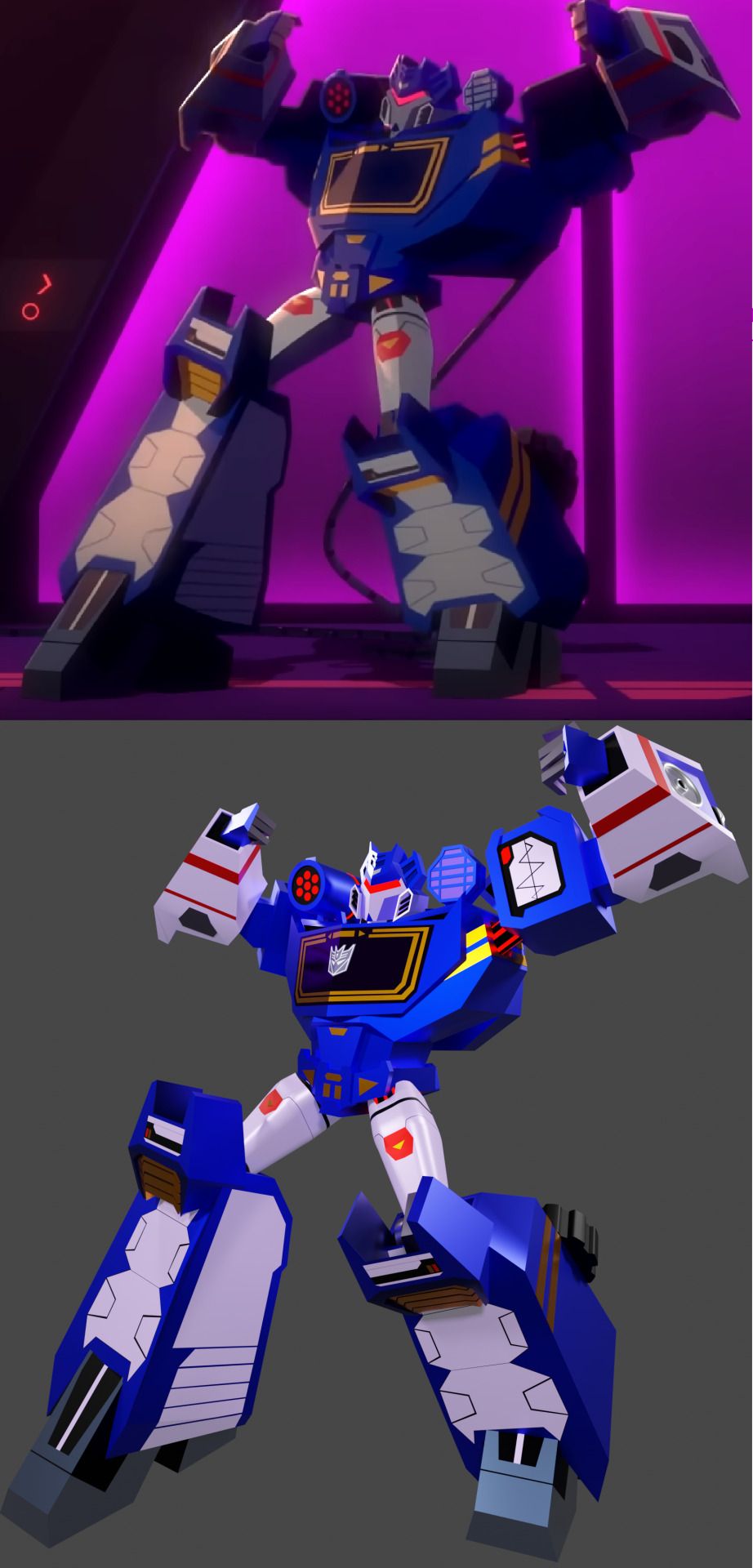

I present to you Soundwave from "Cyberverse". The model is movable, so as a demonstration I recreated several of his poses from the series.

#transformers#tf#tf cyberverse#maccadam#soundwave#cyberverse soundwave#tf soundwave#transformers soundwave#blender#3d model#cyberverse

630 notes

Text

the very well animated kiss from that scene™ because for some reason i didn't see a single gif version of it yet

#baldur's gate 3#baldur's gate iii#astarion#halsin#bg3edit#bg3#panel from hell#yeah yeah bear transformation and all of that but have you SEEN how well animated that kiss was ??#now is it going to be like this for all characters in the game#or since they knew they were going to show this scene did they touch up the animations just to fit it perfectly to astarion face model ?#because like#there's a LOT of face models available for your character in this game and races of different size#i just wonder if all will get an equal treatment in animations like these because it seems like a shit ton of additional work#in any case#this looks great

2K notes

Text

take the wheel

#my doodles#transformers#transformers rise of the beasts#rise of the beasts#rotb#noah diaz#mirage#gave up on rendering LMFAOOOO#why is mecha so hard#i had to GO ALL THE WAY TO BLENDER TO LOAD HIS MODEL JUST TO SEE HOW HE LOOKS LIKE#god

2K notes

Text

Abstract

Deep neural networks (DNNs) are often used for text classification due to their high accuracy. However, DNNs can be computationally intensive, requiring millions of parameters and large amounts of labeled data, which can make them expensive to use, to optimize, and to transfer to out-of-distribution (OOD) cases in practice. In this paper, we propose a non-parametric alternative to DNNs that’s easy, lightweight, and universal in text classification: a combination of a simple compressor like gzip with a k-nearest-neighbor classifier. Without any training parameters, our method achieves results that are competitive with non-pretrained deep learning methods on six in-distribution datasets. It even outperforms BERT on all five OOD datasets, including four low-resource languages. Our method also excels in the few-shot setting, where labeled data are too scarce to train DNNs effectively. Code is available at https://github.com/bazingagin/npc_gzip.

(July 2023 – pdf)

“this paper's nuts. for sentence classification on out-of-domain datasets, all neural (Transformer or not) approaches lose to good old kNN on representations generated by.... gzip” [x]

CLASSICAL ML SWEEP
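for anyone wondering how "gzip plus kNN" can classify text at all, here's a minimal sketch of the idea from the abstract. It is not the authors' code (that lives in the linked repo). The distance used here is normalized compression distance, and the space-joined concatenation, the `k` default, and the helper names `clen`, `ncd`, and `classify` are all assumptions made for illustration.

```python
import gzip
from collections import Counter


def clen(text: str) -> int:
    """Length in bytes of the gzip-compressed UTF-8 encoding of text."""
    return len(gzip.compress(text.encode("utf-8")))


def ncd(a: str, b: str) -> float:
    """Normalized compression distance (assumed metric, see lead-in).

    If a and b share a lot of structure, compressing them together costs
    little more than compressing the longer one alone, so the value is small.
    """
    ca, cb = clen(a), clen(b)
    cab = clen(a + " " + b)  # space-joined concatenation is an assumption
    return (cab - min(ca, cb)) / max(ca, cb)


def classify(query: str, train: list[tuple[str, str]], k: int = 3) -> str:
    """Majority vote over the k training texts closest to query under ncd.

    train is a list of (text, label) pairs. Nothing is fitted in advance;
    all the work happens at query time.
    """
    nearest = sorted(train, key=lambda pair: ncd(query, pair[0]))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]
```

to use it, call `classify(some_text, labeled_examples, k=...)` with a list of `(text, label)` pairs. Every query compresses against every training text, so this sketch is slow on large datasets.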

#machine learning#programming#deep learning#nlp#neural networks#information theory#my uploads#my uploads (unjank)#transformer models#gzip#knn#k nearest neighbors

10 notes

Text

Do you like milk 🍼

#transgender#trans pride#transisbeautiful#mft#girlslikeus#maletofemale#transformation#transsexual#mft trans#actually trans#this is what trans looks like#trans community#trans feminine#trans girl#trans femme#trans is sexy#trans model#trans women are women#trans women are beautiful#trans woman#trans women are amazing

597 notes

Text

Soundwave speaks in the 3rd person and no one blinks. He’s intelligent, respected even

But when Me, Grimlock-

#thinking ab how funny this disparity is#soundwave said fuck the first person fuck articles and fuck prepositions#it’s HIS silly little speech pattern and it has no room for this ‘grammar’ nonsense#grimlock however is written off as dumb for doing the same damn thing#I feel like grim could use him as a role model#transformers#maccadam#soundwave#grimlock#I hope everyone reads this in the voice of that one joker ‘SoCiEtY’ gay meme

1K notes

Text

‼️You have 13 seconds‼️

#I got all 3 of these models done just for this….#my art#artists on tumblr#maccadam#transformers#fanart#animation#TFA#TFA blurr#tfa shockwave#TFA drift

1K notes

Text

So I met a car guy.. we actually have a car date here in a bit. We’ll see how this goes! Wish me luck!

If you like my content think about sending me a tip!

$ChrissyKaos

Venmo - @Chrissy_Kaos

#please help#I can’t do this without you#trans#transgender#trans pride#transisbeautiful#mtf#transgirl#girlslikeus#mtf hrt#maletofemale#transformation#trans woman#trans women#transexual#actually trans#this is what trans looks like#mtf trans#trans community#transsexual#trans experience#trans feminine#trans fem#trans goddess#trans is beautiful#trans is sexy#trans mtf#trans model#trans sex worker#trans girls

1K notes
