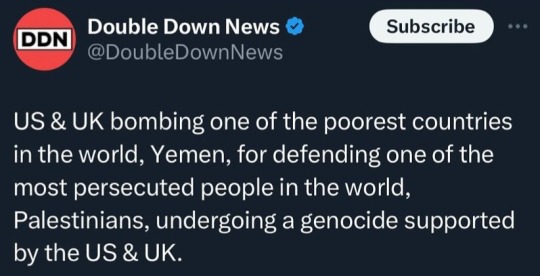

#fuck government

865 notes

#i love these people 🇵🇸#from the sea to the river#palestine#gaza#israel#pissrael#apartheid pissrael#decolonisation#genocide#ethnic cleansing#middle east#lol#fuck government#funny twitter#laugh rule

60 notes

Guys, gals and nonbinary pals: I present the latest development in Dystopian Tech Inventions:

[begin article: "Are You Ready for Workplace Brain Scanning?"]

"Get ready: Neurotechnology is coming to the workplace. Neural sensors are now reliable and affordable enough to support commercial pilot projects that extract productivity-enhancing data from workers’ brains. These projects aren’t confined to specialized workplaces; they’re also happening in offices, factories, farms, and airports. The companies and people behind these neurotech devices are certain that they will improve our lives. But there are serious questions about whether work should be organized around certain functions of the brain, rather than the person as a whole.

To be clear, the kind of neurotech that’s currently available is nowhere close to reading minds. Sensors detect electrical activity across different areas of the brain, and the patterns in that activity can be broadly correlated with different feelings or physiological responses, such as stress, focus, or a reaction to external stimuli. These data can be exploited to make workers more efficient—and, proponents of the technology say, to make them happier. Two of the most interesting innovators in this field are the Israel-based startup InnerEye, which aims to give workers superhuman abilities, and Emotiv, a Silicon Valley neurotech company that’s bringing a brain-tracking wearable to office workers, including those working remotely.

The fundamental technology that these companies rely on is not new: Electroencephalography (EEG) has been around for about a century, and it’s commonly used today in both medicine and neuroscience research. For those applications, the subject may have up to 256 electrodes attached to their scalp with conductive gel to record electrical signals from neurons in different parts of the brain. More electrodes, or “channels,” mean that doctors and scientists can get better spatial resolution in their readouts—they can better tell which neurons are associated with which electrical signals.

What is new is that EEG has recently broken out of clinics and labs and has entered the consumer marketplace. This move has been driven by a new class of “dry” electrodes that can operate without conductive gel, a substantial reduction in the number of electrodes necessary to collect useful data, and advances in artificial intelligence that make it far easier to interpret the data. Some EEG headsets are even available directly to consumers for a few hundred dollars.

While the public may not have gotten the memo, experts say the neurotechnology is mature and ready for commercial applications. “This is not sci-fi,” says James Giordano, chief of neuroethics studies at Georgetown University Medical Center. “This is quite real.”

How InnerEye’s TSA-boosting technology works

In an office in Herzliya, Israel, Sergey Vaisman sits in front of a computer. He’s relaxed but focused, silent and unmoving, and not at all distracted by the seven-channel EEG headset he’s wearing. On the computer screen, images rapidly appear and disappear, one after another. At a rate of three images per second, it’s just possible to tell that they come from an airport X-ray scanner. It’s essentially impossible to see anything beyond fleeting impressions of ghostly bags and their contents.

“Our brain is an amazing machine,” Vaisman tells us as the stream of images ends. The screen now shows an album of selected X-ray images that were just flagged by Vaisman’s brain, most of which are now revealed to have hidden firearms. No one can knowingly identify and flag firearms among the jumbled contents of bags when three images are flitting by every second, but Vaisman’s brain has no problem doing so behind the scenes, with no action required on his part. The brain processes visual imagery very quickly. According to Vaisman, the decision-making process to determine whether there’s a gun in complex images like these takes just 300 milliseconds.

What takes much more time are the cognitive and motor processes that occur after the decision making—planning a response (such as saying something or pushing a button) and then executing that response. If you can skip these planning and execution phases and instead use EEG to directly access the output of the brain’s visual processing and decision-making systems, you can perform image-recognition tasks far faster. The user no longer has to actively think: For an expert, just that fleeting first impression is enough for their brain to make an accurate determination of what’s in the image.

Vaisman is the vice president of R&D of InnerEye, an Israel-based startup that recently came out of stealth mode. InnerEye uses deep learning to classify EEG signals into responses that indicate “targets” and “nontargets.” Targets can be anything that a trained human brain can recognize. In addition to developing security screening, InnerEye has worked with doctors to detect tumors in medical images, with farmers to identify diseased plants, and with manufacturing experts to spot product defects. For simple cases, InnerEye has found that our brains can handle image recognition at rates of up to 10 images per second. And, Vaisman says, the company’s system produces results just as accurate as a human would when recognizing and tagging images manually—InnerEye is merely using EEG as a shortcut to that person’s brain to drastically speed up the process.

While using the InnerEye technology doesn’t require active decision making, it does require training and focus. Users must be experts at the task, well trained in identifying a given type of target, whether that’s firearms or tumors. They must also pay close attention to what they’re seeing—they can’t just zone out and let images flash past. InnerEye’s system measures focus very accurately, and if the user blinks or stops concentrating momentarily, the system detects it and shows the missed images again.

Having a human brain in the loop is especially important for classifying data that may be open to interpretation. For example, a well-trained image classifier may be able to determine with reasonable accuracy whether an X-ray image of a suitcase shows a gun, but if you want to determine whether that X-ray image shows something else that’s vaguely suspicious, you need human experience. People are capable of detecting something unusual even if they don’t know quite what it is.

“We can see that uncertainty in the brain waves,” says InnerEye founder and chief technology officer Amir Geva. “We know when they aren’t sure.” Humans have a unique ability to recognize and contextualize novelty, a substantial advantage that InnerEye’s system has over AI image classifiers. InnerEye then feeds that nuance back into its AI models. “When a human isn’t sure, we can teach AI systems to be not sure, which is better training than teaching the AI system just one or zero,” says Geva. “There is a need to combine human expertise with AI.” InnerEye’s system enables this combination, as every image can be classified by both computer vision and a human brain.

Using InnerEye’s system is a positive experience for its users, the company claims. “When we start working with new users, the first experience is a bit overwhelming,” Vaisman says. “But in one or two sessions, people get used to it, and they start to like it.” Geva says some users do find it challenging to maintain constant focus throughout a session, which lasts up to 20 minutes, but once they get used to working at three images per second, even two images per second feels “too slow.”

In a security-screening application, three images per second is approximately an order of magnitude faster than an expert can manually achieve. InnerEye says their system allows far fewer humans to handle far more data, with just two human experts redundantly overseeing 15 security scanners at once, supported by an AI image-recognition system that is being trained at the same time, using the output from the humans’ brains.

InnerEye is currently partnering with a handful of airports around the world on pilot projects. And it’s not the only company working to bring neurotech into the workplace.

How Emotiv’s brain-tracking technology works

When it comes to neural monitoring for productivity and well-being in the workplace, the San Francisco–based company Emotiv is leading the charge. Since its founding 11 years ago, Emotiv has released three models of lightweight brain-scanning headsets. Until now the company had mainly sold its hardware to neuroscientists, with a sideline business aimed at developers of brain-controlled apps or games. Emotiv started advertising its technology as an enterprise solution only this year, when it released its fourth model, the MN8 system, which tucks brain-scanning sensors into a pair of discreet Bluetooth earbuds.

Tan Le, Emotiv’s CEO and cofounder, sees neurotech as the next trend in wearables, a way for people to get objective “brain metrics” of mental states, enabling them to track and understand their cognitive and mental well-being. “I think it’s reasonable to imagine that five years from now this [brain tracking] will be quite ubiquitous,” she says. When a company uses the MN8 system, workers get insight into their individual levels of focus and stress, and managers get aggregated and anonymous data about their teams.

Emotiv launched its enterprise technology into a world that is fiercely debating the future of the workplace. Workers are feuding with their employers about return-to-office plans following the pandemic, and companies are increasingly using “bossware” to keep tabs on employees—whether staffers or gig workers, working in the office or remotely. Le says Emotiv is aware of these trends and is carefully considering which companies to work with as it debuts its new gear. “The dystopian potential of this technology is not lost on us,” she says. “So we are very cognizant of choosing partners that want to introduce this technology in a responsible way—they have to have a genuine desire to help and empower employees,” she says.

Lee Daniels, a consultant who works for the global real estate services company JLL, has spoken with a lot of C-suite executives lately. “They’re worried,” says Daniels. “There aren’t as many people coming back to the office as originally anticipated—the hybrid model is here to stay, and it’s highly complex.” Executives come to Daniels asking how to manage a hybrid workforce. “This is where the neuroscience comes in,” he says.

Emotiv has partnered with JLL, which has begun to use the MN8 earbuds to help its clients collect “true scientific data,” Daniels says, about workers’ attention, distraction, and stress, and how those factors influence both productivity and well-being. Daniels says JLL is currently helping its clients run short-term experiments using the MN8 system to track workers’ responses to new collaboration tools and various work settings; for example, employers could compare the productivity of in-office and remote workers.

Emotiv CTO Geoff Mackellar believes the new MN8 system will succeed because of its convenient and comfortable form factor: The multipurpose earbuds also let the user listen to music and answer phone calls. The downside of earbuds is that they provide only two channels of brain data. When the company first considered this project, Mackellar says, his engineering team looked at the rich data set they’d collected from Emotiv’s other headsets over the past decade. The company boasts that academics have conducted more than 4,000 studies using Emotiv tech. From that trove of data—from headsets with 5, 14, or 32 channels—Emotiv isolated the data from the two channels the earbuds could pick up. “Obviously, there’s less information in the two sensors, but we were able to extract quite a lot of things that were very relevant,” Mackellar says.

Once the Emotiv engineers had a hardware prototype, they had volunteers wear the earbuds and a 14-channel headset at the same time. By recording data from the two systems in unison, the engineers trained a machine-learning algorithm to identify the signatures of attention and cognitive stress from the relatively sparse MN8 data. The brain signals associated with attention and stress have been well studied, Mackellar says, and are relatively easy to track. Although everyday activities such as talking and moving around also register on EEG, the Emotiv software filters out those artifacts.

The app that’s paired with the MN8 earbuds doesn’t display raw EEG data. Instead, it processes that data and shows workers two simple metrics relating to their individual performance. One squiggly line shows the rise and fall of workers’ attention to their tasks—the degree of focus and the dips that come when they switch tasks or get distracted—while another line represents their cognitive stress. Although short periods of stress can be motivating, too much for too long can erode productivity and well-being. The MN8 system will therefore sometimes suggest that the worker take a break. Workers can run their own experiments to see what kind of break activity best restores their mood and focus—maybe taking a walk, or getting a cup of coffee, or chatting with a colleague.

What neuroethicists think about neurotech in the workplace

While MN8 users can easily access data from their own brains, employers don’t see individual workers’ brain data. Instead, they receive aggregated data to get a sense of a team or department’s attention and stress levels. With that data, companies can see, for example, on which days and at which times of day their workers are most productive, or how a big announcement affects the overall level of worker stress.

Emotiv emphasizes the importance of anonymizing the data to protect individual privacy and prevent people from being promoted or fired based on their brain metrics. “The data belongs to you,” says Emotiv’s Le. “You have to explicitly allow a copy of it to be shared anonymously with your employer.” If a group is too small for real anonymity, Le says, the system will not share that data with employers. She also predicts that the device will be used only if workers opt in, perhaps as part of an employee wellness program that offers discounts on medical insurance in return for using the MN8 system regularly.

However, workers may still be worried that employers will somehow use the data against them. Karen Rommelfanger, founder of the Institute of Neuroethics, shares that concern. “I think there is significant interest from employers” in using such technologies, she says. “I don’t know if there’s significant interest from employees.”

Both she and Georgetown’s Giordano doubt that such tools will become commonplace anytime soon. “I think there will be pushback” from employees on issues such as privacy and worker rights, says Giordano. Even if the technology providers and the companies that deploy the technology take a responsible approach, he expects questions to be raised about who owns the brain data and how it’s used. “Perceived threats must be addressed early and explicitly,” he says.

Giordano says he expects workers in the United States and other western countries to object to routine brain scanning. In China, he says, workers have reportedly been more receptive to experiments with such technologies. He also believes that brain-monitoring devices will really take off first in industrial settings, where a momentary lack of attention can lead to accidents that injure workers and hurt a company’s bottom line. “It will probably work very well under some rubric of occupational safety,” Giordano says. It’s easy to imagine such devices being used by companies involved in trucking, construction, warehouse operations, and the like. Indeed, at least one such product, an EEG headband that measures fatigue, is already on the market for truck drivers and miners.

Giordano says that using brain-tracking devices for safety and wellness programs could be a slippery slope in any workplace setting. Even if a company focuses initially on workers’ well-being, it may soon find other uses for the metrics of productivity and performance that devices like the MN8 provide. “Metrics are meaningless unless those metrics are standardized, and then they very quickly become comparative,” he says.

Rommelfanger adds that no one can foresee how workplace neurotech will play out. “I think most companies creating neurotechnology aren’t prepared for the society that they’re creating,” she says. “They don’t know the possibilities yet.”

[end article.]

Ok what the fuck has gotten into the capitalists' brains this time?

The working class has been voicing its issues with its employers since the beginning of time. Hundreds and hundreds of studies show what needs to be changed. Shorter week and hours, more pay, less power dynamic, etc. Nothing is being changed regardless. There's no need to do fucking brain monitoring to figure out what the problem is. Are they really that ignorant or is it an act?

And there's no telling how long it will take, if it's even possible, to fully decode people's thoughts. The scientists behind it imply they are quite close. If it happens then it will be literally 1984 but unironically. Employers and government would quickly jump on the train of creating thoughtcrimes exactly as Orwell envisioned it. Why wouldn't they?

Also, anonymize my ass. Make it FOSS. Software is always guilty until proven innocent. There's literally no way I can prove that you aren't sharing the data, and literally no way you can prove there will never be a data breach.

And these so-called "ethicists" just brush it off like

Both she and Georgetown’s Giordano doubt that such tools will become commonplace anytime soon. “I think there will be pushback” from employees on issues such as privacy and worker rights, says Giordano. Even if the technology providers and the companies that deploy the technology take a responsible approach, he expects questions to be raised about who owns the brain data and how it’s used. “Perceived threats must be addressed early and explicitly,” he says.

"Perceived threats must be addressed early and explicitly." So you're admitting that workers don't get to have a choice in the matter and that you intend to use force to make us comply. (Economic pressure is still force. If you can't find a job in the future that doesn't do this, you are effectively forced. And the government could use this too.)

Everyone called George Orwell crazy. Everyone called Richard Stallman crazy. Everyone called Edward Snowden crazy. Yet their predictions continue to come true again and again. And no one bats an eye. Society has just blindly accepted the onset of mass surveillance. Everyone knows about it in dictatorships like China and North Korea but no one wants to talk about how rampant it is in other places where it's done more silently.

Some people say "I have nothing to fear because I have nothing to hide." Ok, so what happens when the government goes wack and decides to start rounding up groups of people? What happens if your race/ethnicity, religion, gender, sexuality, disabilities, etc. fall into one of those categories? It happened in Germany and we are at risk of it happening in the U.S. and other places. (In Germany there wasn't surveillance tech yet so they just forcibly searched your home instead. Same difference.) How do you know it will never happen? What do you do then? What. Do. You. Do. Then.

No one I have asked has ever been able to answer this question beyond blind faith that it won't happen. The real answer is you're fucked. That's the answer.

#fuck capitalism#workers rights#privacy#technology#science#fuck government#ai#artificial intelligence#computer science

57 notes

To the LGBTQ+ community and its haters.

I have a word you better fucking hear.

So.

Tell me. Why isn't being part of the LGBTQ+ community right? God didn't say anything about it. God said to love people. Love, take care of yourself and others. Treat others like you wish to be treated. I bet you wouldn't want to be called a dirty pig, motherfucker, disappointment, fake, etc. just because you like some specific fucking fruit. Would you?

Why hate on the gays and trans? The aro and the ace? The lesbian and the non-binary? The demi and the fluids? What the fuck did they do to you?

"Some gay man touched me once inappropriately! Now I fear all of them." Do you know what? Isn't that exactly what women go through? Every. Single. Day.

You want grandchildren? Well. Sometimes you don't get what you want. You got to accept the truth. If Emily wants to be Elias, he can be Elias. Or if Oliver wants to be Olivia, she can be. If they don't want to engage in sexual activities, it's okay. Why? Because some just don't want it. They have their reasons. They could have gone through traumatic stuff or they just don't like the idea of it. What's so fucking wrong with it?

Say it. Tell it. What's wrong with experiencing love? You probably love someone; you're dating, or you've been happily married for a while already. Why not let the youth, and others, have the same? Why?

THE LGBTQ COMMUNITY IS VALID AND SHOULD HAVE FUCKING RIGHTS.

WHY. THE. FUCK. DON'T. THEY. HAVE. RIGHTS. YET!???!!!!???!!!?

WHAT THE FUCK DID WE DO

Millions of humans are dying, fighting for their lives, just because they're being themselves, loving someone of the same gender. Because they're a different gender than "the norm". Because they are in a relationship with several people. Because they don't feel a romantic or sexual connection to anybody. We die. We fight. We suffer. People fucking kill themselves, just because they get bullied and harassed.

What did we ever fucking do to you people? What is wrong with you. Why hate us? Come on society. Grow the fuck up.

#lgbt pride#pride#gay pride#lgbtqia#protect lgbtq youth#protect lgbt kids#freedom#better future#stand up for LGBTQIA#support ltbqtq#lgbtq community#fucking listen#important#hear me out#protest#fuck government#bisexual#gay#aroace#aromantic#asexual#queer community#queer#queer stuff

12 notes

I hate the concept of religion most of the time.

I don’t like religion because it’s easy to manipulate.

Morals are formed on empathy and consequences (from society, so the morals society is projecting). For example: not stealing because you know the consequences of getting caught and have empathy for the person you’d be stealing from.

Morals are philosophy.

Philosophy is all about evaluating existence and “why?”

Religion is (a subdivision of) philosophy, but not one you think about and evaluate. It’s one that’s taught to you.

(Using Christianity as an example because religious trauma) So instead of empathy, it’s consequences from a higher power, god. Stealing isn’t wrong because you might be financially or emotionally harming another person, it’s wrong because god said so. Killing isn’t wrong because it’s harming another person, it’s wrong because god said so.

So morals aren’t based on empathy for others; they’re based on what you’re told by a god. What’s told can be manipulated and changed.

For example; Dionysus’s cult was a refuge for minorities to absolutely party, and so the state sought to shut that down. Until the higher ups got super into partying and went “yoooo there’s a perfect god for this!” (From memory, not doing research for a ramble)

Religion is either a threat to the state or a tool of the state.

In America, from the beginning of its colonization, it’s been a tool of the state.

When the people in your country have such different worldviews that they fight each other, they can’t fight the oppressor.

So the state can manipulate it however they want. Trans people are wrong, not because they’re doing anything wrong, but because they’re changing God’s design. God said so.

I’ve personally often heard Christians say shit like “the government is transing the kids so they get money from the lifelong treatments!” but they fight trans people, because that’s who they’re told is wrong. They don’t even realize that’s a fucking fault of capitalism, not trans people.

When the morals of someone’s worldview come from being told something by a higher-up, not from empathy or cause, it’s easy for higher-ups to manipulate them.

#fuck capitalism#fuck government#fuck america#Probably inaccurate history#neurodivergent rambling#Religious trauma rambling#Shitpost

3 notes

So far today I’ve had a nice lunch, got a parking ticket (paid seconds after it was issued), got groceries, bought a new gundam kit, and am having drinks before my 4 months of self induced sobriety.

Today has been a good day.

2 notes

6 notes

“Your body, my choice” are the four scariest words I’ve heard in my life.

#abortion#abortion rights#poetry#literature#roe versus wade#fuck government#ogpoem#my writing#popular#poet#womens rights#fight for the future#lgbtq#poets on tumblr#male privilege#marriage#equal opportunity

6 notes

I can pretty much track my loss of faith in the US to the day I watched Kavanaugh get confirmed.

2 notes

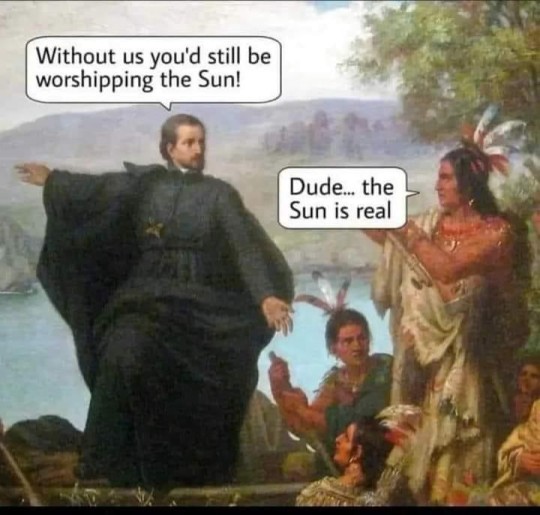

#religion#dogma#atheist#science#bible nonsense#sun#universe#earth#ausgov#politas#auspol#tasgov#taspol#australia#fuck neoliberals#neoliberal capitalism#anthony albanese#albanese government#religion is a mental illness#religion is bullshit#religion is toxic#religion is a scam#religion is stupid#class war#bible scripture#bible quote#bible study#bible verse#bible#eat the rich

95K notes

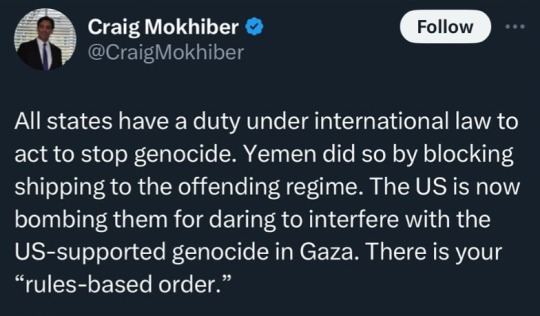

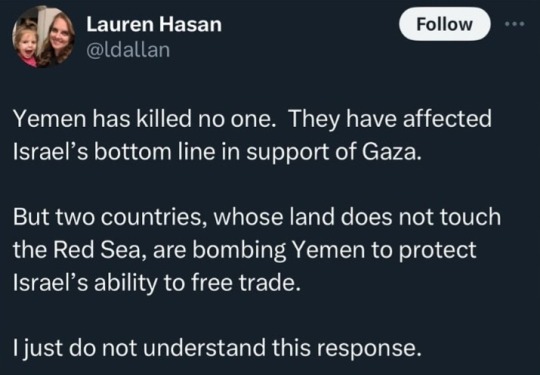

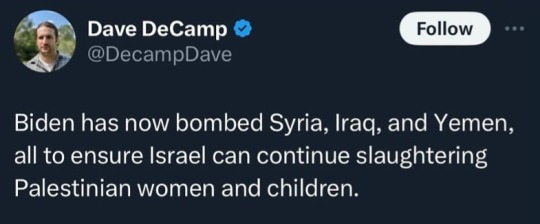

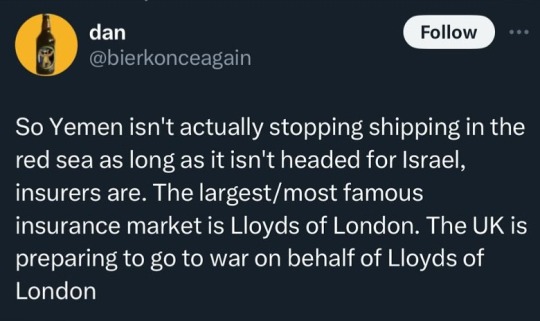

#they just resort instantly to bombing#and everyone just thinks it's normal#coz it's a non white middle eastern Muslim country#how are the us uk and israel allowed to exist when they're the worst thing for world peace#why do their governments get away with so much#and they then have the audacity to lecture other countries about human rights#fuck off#yemen#palestine#israel#free palestine#gaza#gaza strip#middle east#free gaza#fuck israel

33K notes
