#blog optimization

Text

I have started an experimental writing-practice tumblr! @ten-thousand-clay-pots, for anyone interested in following it.

(Although be warned that, since it's for experimental practice writing, its content is likely to be of dubious coherence and quality; thus my keeping it contained in a sideblog rather than posting it here.)

Currently I'm attempting an experiment involving posting one short thing (of arbitrary length and quality) per day, there. Activity in the long run is likely to be a lot more intermittent, though, unless the current experiment goes substantially better than I'm expecting it to.

#Archive#Blog Optimization#In Which I Exist Elsewhere#(sort of)#once i'm doing writing i think is actually good i'll probably start posting or linking it more directly from here#but i'm sufficiently signal:noise-ratio-sensitive on this blog that it wouldn't feel right posting the low-quality experimental stuff here#so sideblog it is

8 notes


Text

Taste the Success: Optimizing Your Food Blog for 2024

Welcome to the digital kitchen, fellow bloggers! Just like any great recipe, a successful blog needs the right blend of ingredients. In the ever-evolving world of blogging, optimization is the secret sauce that takes your blog from good to gourmet. Join me on this journey as we unravel the best strategies to optimize your blog in 2024 and create a digital feast that leaves your readers craving…


#Blog Optimization#Blogger Humor#Digital Marketing#Mobile-Friendly Blog#SEO Strategies#SOCIAL MEDIA#Visual Content

0 notes

Text

Blog Optimization Tips | Marketive

Unlock the secrets of blog optimization with Marketive's guidance. Enhance your blog's performance and attract more readers. Empower your content now!

0 notes

Text

By recognizing and leveraging the power of blog creation for your website, you can unlock significant SEO benefits, driving organic traffic, building authority, and enhancing user experience.

0 notes

Text

and yet we dance!

#theology#spirituality#christianity#christian blog#christian faith#catholiscism#catholic#spiritual memes#spiritualjourney#spiritualgrowth#spiritual awakening#spiritual development#spirtiuality#spiritual knowledge#optimism#optimistic#positive mindset#positive energy#positive affirmations#positive thinking#positive thoughts#positivity

4K notes


Text

Not a big fan of capitalism

#i do not want to charge people to see my art#i have a job on top of doing this little art blog#every time i show this little project of mine people tell me i better find a way to monetize it to make money off of this#its just very frustrating to constantly have to explain that i want people to just see and cherish my drawings#i dont want to be reliant on making art to make my money i think that'd not work with what im going for#and it would turn art into a deeply frustrating experience i try to constantly optimize to get the most amount of money from#rendering experiments and trying out new artstyles something that could legit put me out of eating food 👍#but really#the job is not good for me i am always do exhausted#it really takes so much effort to draw sometimes

2K notes


Text

nomming it

#this is more clearly fatfur-inclined than my usual art but this blog is 18+ so who fucking cares#my art#luna post#luna possum#that said i gotta get some NORMAL FUCKING ART on here#and maybe change my url to help my search engine optimization heal somewhat

488 notes


Text

HAL: The optimal way to exist is to turn yourself into a joke and into entertainment and avoid stating sincere emotions as much as possible and then wonder why your soul is withering away from soul-crushing loneliness.

HAL: By the way.

HAL: In case you didn’t know.

#submission#hal no offense bro but i am not doing that <4#actually i did that so much a few years back i crashed and i had to take my three year blog break . LMAO#was it three years? when did i bounce at first#i dont rember. optimal way to exist is to ball hard and do whatever you want forever#homestuck#incorrect homestuck quotes#incorrect quotes#mod dave#hal strider#lil hal

72 notes


Text

Game Optimization and Production

I wanted to write a bit of a light primer about optimization and how it relates to game production, in case people just don't know how it works, based on my experience as a dev. I'm by no means an expert in optimization myself, but I've done enough of it on my own titles and planned around it enough at this point to understand the gist of what it comes down to and the considerations therein. Spoilers: unoptimized games are rarely the result of lazy devs, and far more often the result of games being incredibly hard to make and studios being notoriously cheap.

(As an aside, this was largely prompted by seeing someone complaining about how "modern" game developers are 'lazy' because "they don't remember their N64/Gamecube/Wii/PS2 or PS3 dropping frames". I feel compelled to remind people that 'I don't remember' is often the key part of the "old consoles didn't lag" equation, because early console titles ABSOLUTELY dropped frames, and way more frequently and intensely than many modern titles do. Honestly I'd be willing to bet that big budget games on average have become more stable over time. Honorable mention to this thread of people saying "Oh yeah the N64 is laggy as all hell" :') )

Anywho, here goes!

Optimization

The reason games suffer performance problems isn't that game developers are phoning it in or half-assing it (which is always a bad-faith statement when most devs work under unrealistic deadlines, for barely enough pay, under crunch conditions). Optimization issues like frame drops are often caused by factors like ~hardware fragmentation~ and how that relates to the realities of game production.

I think the general public sees "optimization" as "Oh the dev decided to do a lazy implementation of a feature instead of a good one" or "this game has bugs", which is very broad and often very misguided. Optimization is effectively improving a game's performance until it is acceptable for the maximum number of people. How that happens is different for every game and its specific context: it can mean reducing shader passes, refactoring scripts, or just plain redoing work in a more efficient way. Rarely is it just one or two things, and it's informed by many factors which vary wildly between projects.

However, the root reason any of this is necessary in the first place is something called "Platform Fragmentation".

What Is Fragmentation

"Fragmentation" is the possibility space of variation within the hardware being used to run a game. Basically, it's the likelihood that a user is playing a game on different hardware than what you're testing on - if two users are playing your game on different hardware, they are 'fragmented' from one another.

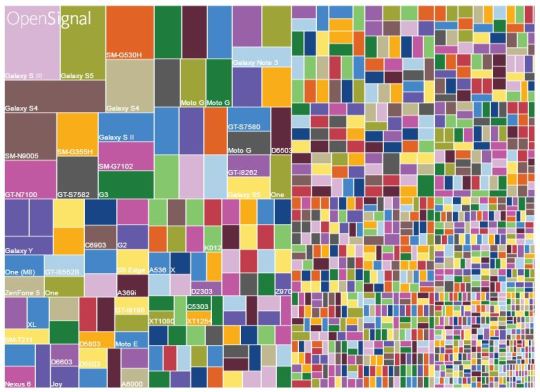

As an example, here's a graphic that shows the fragmentation of mobile devices based on model and user share. The different sizes are how many users are using a different type of model of phone:

As you can tell, that's a lot of different devices to have to build for!

So how does this matter?

For PC game developers, fragmentation means that an end-user's setup is virtually impossible to predict, because PC users frequently customize and change their hardware. Any two PC users may be running completely different hardware.

Is your player using an up-to-date GPU? CPU? How much RAM do they have? Are they playing on a notebook? A gaming laptop? What brand hardware are they using? How much storage space is free? What OS are they using? How are they using input?

Moreover, PC parts don't often get "sunsetted" whole-cloth like old consoles do, so there's also the factor of having to support hardware that could be coming up on 5, 10 or 15 years old in some cases.

For console developers it's a little easier - you generally know exactly what hardware you're building for, and you're often testing directly on a version of the console itself. This is a big reason why Nintendo's first party titles feel so smooth - because they only build for their own systems, and know exactly what they're building for at all times. The biggest unknowns are usually smaller things like televisions and hookups therein, but the big stuff is largely very predictable. They're building for architecture that they also made themselves, which makes them incredibly privileged production-wise!

Fragmentation basically means that it's difficult - or nearly impossible - for a developer to know exactly what their users are playing their games on, and even more challenging to guarantee their game is compatible everywhere.

Benchmarking

Since fragmentation makes it very difficult to build for absolutely everybody, at some point during development every developer has to draw a line in the sand and say "Okay, [x] combination of hardware components is what we're going to test on", and prioritize that calibre of setup before everything else. That chosen setup is the "benchmark". It exists both to make testing easier (so testers don't have to play the game on every single variation of hardware) and to assist in optimization planning.

Usually the benchmark requirements are chosen to balance visual fidelity, gameplay, and the percentage of the market you're aiming for, among other considerations. Often for a game that is cross-platform for both PC and console, this benchmark will be informed by the console requirements in some way, which often set the bar for a target market (a cross-platform PC and console game isn't going to set a benchmark that is impossible for a console to play, though it might push the limits if PC users are the priority market). Sometimes games hit their target benchmarks, sometimes they don't - as with anything in game development it can be a real crapshoot.

In my case, my games are often graphically intensive and poorly made by myself alone, so my benchmark is usually a machine that is about 5 years old, and I take measures to avoid practices which are generally bad and can build up to become very expensive over time. Bigger studios with more people aiming at modern targets will likely prioritize hardware from within the last couple of years so their games look the best for users with the newest hardware - after all, other users will often catch up as hardware evolves.

This benchmark gives devs breathing room from the fragmentation problem. If the game works on weaker machines - great! If it doesn't - that's fine, we can add options to lower quality settings so it will. In the worst case, we can ignore it. After all, minimum requirements exist for a reason - a known evil in game development is that not everyone will be able to run your game.
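To make the benchmark idea a little more concrete, here's a minimal sketch of how a game might choose a quality preset by comparing the player's machine against the benchmark and the minimum requirements. Everything in it is an assumption made up for illustration: the `Hardware` fields, the `gpu_score` number, the specific thresholds and the preset names don't come from any real engine or from my own projects.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hardware:
    gpu_score: int  # hypothetical relative GPU performance score
    ram_gb: int     # installed system memory in gigabytes


# Hypothetical benchmark machine: the setup the team actually tests on.
BENCHMARK = Hardware(gpu_score=100, ram_gb=16)

# Hypothetical minimum requirements: below this, the game is unsupported.
MINIMUM = Hardware(gpu_score=40, ram_gb=8)


def meets(hw: Hardware, target: Hardware) -> bool:
    """Return True if hw is at least as capable as target on every axis."""
    return hw.gpu_score >= target.gpu_score and hw.ram_gb >= target.ram_gb


def pick_quality_preset(hw: Hardware) -> str:
    """Pick a preset name for the given hardware."""
    if meets(hw, BENCHMARK):
        return "high"         # at or above the benchmark machine
    if meets(hw, MINIMUM):
        return "low"          # weaker than the benchmark, so lower the settings
    return "unsupported"      # below minimum requirements


if __name__ == "__main__":
    print(pick_quality_preset(Hardware(gpu_score=60, ram_gb=8)))
```

Real projects usually have more tiers and let the player override the choice, but the shape is the same: one tested target, a fallback for weaker machines, and a floor below which you stop promising anything.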

Making The Game

As with any game, the more time you spend on something, the more money is being spent on it - in some cases, extensive optimization isn't worth the return on investment. A line needs to be drawn, because at some point not everyone can play your game on everything, so throwing in the towel and saying "this isn't great, but it's good enough to ship" needs to be done if the game is going to ship at all.

Optimizing to make sure that the 0.1% of users with specific hardware can play your game probably isn't worth spending a week on the work. Frankly, once you hit a certain point some of those concerns are easier put off until post-launch when you know how much engagement your game has, how many users of certain hardware are actually playing, and how much time/budget you have to spend post-launch on improving the game for them. Especially in this "Games As A Service" market, people are frequently expecting games to receive constant updates on things like performance after launch, so there's always more time to push changes and smooth things out as time goes on. Studios are also notoriously squirrelly with money, and many would rather get a game out into paying customers' hands than sit around making sure that everything is fine-tuned (in contrast to most developers, who would rather the game they've worked on for years be fine-tuned than not).

Compare that to the pre-Day One patch era: once you printed a game on a disc, it was there forever, and there was no improving it or turning back. A frightening prospect which resulted in lots of games just straight up getting recalled because they featured bugs or things that didn't work. 😬

Point is though, targeted optimization happens as part of the development process, and optimization in general is often something every team helps out with organically as production goes on - level designers refactor scripts to be more efficient, graphics programmers update shaders to cut down on passes, artists trim poly counts where they can to gradually achieve better performance. It's an all-hands-on-deck sort of approach that affects all devs, and often something that is progressively tracked as development rolls on, as a few small things can add up to larger performance issues.
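The "few small things add up" part is easiest to see with a frame budget. At 60 frames per second each frame has roughly 16.67 ms to do everything, so half a millisecond here and there is enough to push a frame over. Below is a rough sketch of that arithmetic; the 60 FPS target, the system names and all of the millisecond costs are made-up numbers for illustration, not measurements from a real game.

```python
TARGET_FPS = 60
FRAME_BUDGET_MS = 1000.0 / TARGET_FPS  # about 16.67 ms per frame at 60 FPS


def over_budget(costs_ms: dict[str, float],
                budget_ms: float = FRAME_BUDGET_MS) -> float:
    """Return how many ms the frame exceeds the budget (0.0 if it fits)."""
    return max(0.0, sum(costs_ms.values()) - budget_ms)


if __name__ == "__main__":
    # Hypothetical per-system costs for one frame, in milliseconds.
    frame = {"gameplay scripts": 4.0, "rendering": 9.0,
             "physics": 2.5, "audio": 0.5}
    print(f"before: {over_budget(frame):.2f} ms over budget")

    # Two small regressions land during production.
    frame["gameplay scripts"] += 0.5
    frame["rendering"] += 0.5
    print(f"after:  {over_budget(frame):.2f} ms over budget")
```

Neither regression looks like much on its own, which is why teams tend to track numbers like these continuously instead of waiting for a big profiling pass at the end.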

In large studios, every developer is in charge of optimizing their own content to some extent, and dedicated performance teams are often formed to find the easiest, safest and quickest optimization wins. Unless you plan smartly in the beginning, some optimizations can also just be deemed too dangerous and out-of-reach to carry out late in production, as they may have dependencies or risk compromising core build stability - at the end of the day more frames aren't worth a crashing game.

Conclusion

Games suffer from performance issues because video game production is immensely complex and there are a lot of different shifting factors that inform when, how, and why a game might be optimized a certain way. Optimization is frequently a production consideration as much as a development one, and it's disingenuous to imply that games lag because developers are lazy.

I think it's worth emphasizing that if optimization doesn't happen, isn't accommodated, or perhaps is undervalued as part of the process, it's rarely if ever because the developers didn't want to do it; rather, it's because it cost the studio too much money. As with everything in our industry, the company is the one calling the final shots in development. If a part of a game seems to have fallen behind in development, it's often because the studio deemed it acceptable, or refused to move deadlines or extend a hand to help it come together better for fear of spending more money on it. Rarely if ever should individual developers be held accountable for the failings of companies!

Anywho, thanks for reading! I know optimization is a weird mystical sort of blind spot for a lot of dev folks, so I hope this at least helps shed some light on considerations that weigh in as part of the process on that :) I've been meaning to write a more practical workshop-style step-by-step on how to profile and spot optimization wins at some point in the future, but haven't had the time for it - hopefully I can spin something up in the next few weeks!

#gamedev#game development#game dev#indie games#indie game#gamedevelopment#indiegames#pc gaming#pc games#indie dev#indiedev#video games#video game#blog#thoughts#optimization

87 notes


Text

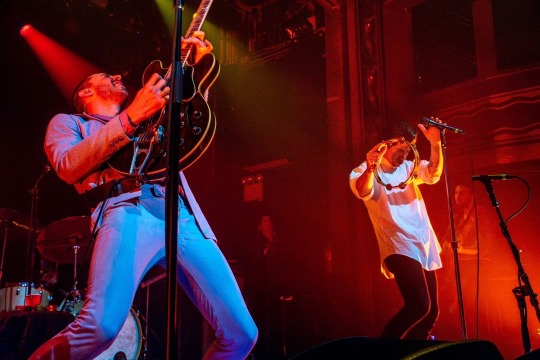

mattkmusicphotography: Miles Kane and Alex Turner of The Last Shadow Puppets back in 2016 at Webster Hall in NYC. It’s been some time since new material has been released. Hopefully 2024 brings some new music!

#it has been some time matt#not sure i share your optimism tho#also#alex in his fucking pillowcase#alex turner#miles kane#the last shadow puppets#eycte era#eycte tour#webster hall#i think this photo is already on my blog from back in the day but oh well#m

37 notes


Text

#typography#text#mine#aesthetic#spilled ink#water#sea#ocean#photography#edit#aesthetic blog#blue#positive#optimistic#optimism#healing#recovery

50 notes


Text

wanted to just quickly draw all my 17776 designs and ocs together as a warmup

in order:

nine, phoenix, curiosity

prospero, maven, juice

ten, hubble, miranda

optimism, stardust, intuition

#snowgems art#17776#dscp#snowgem ocs#ok time for the oc tags#phoenix#curiosity#prospero#maven#miranda#optimism#stardust#intuition#i think its so fucking funny that you have all these guys#and then theres maven. just a furry#god i love maven theyre awesome#dscp fandom dni#<- so the rp blogs dont rb this

55 notes


Text

FUCK YOU FUCK YOU FUCK YOU FUCK YOU FUCK YOU FUCK YOU FUCK YOU FUCK YOU KILL YOURSELF DIE I HATE YOU

#c++blogging#5??! GIRL???+#I LITERALLY DID BOTH PROBLEMS I HATE YOU. IM CHEATING ON TOMORROW'S LAB#diediediediedie just because the time complexity wasnt optimal???! BYE.

25 notes


Text

#kip sabian#aew#all elite wrestling#aewedit#wrestlingedit#wrestling#night gifs#hes a pathetic little fool and im hopelessly in love with him#my beloved#kip in a box#(rp blogs dont reblog; saving and other personal use with tag credits is fine)#i was scheduled these originally but fuck it have them now im too tired to care#optimal posting times? dont exist fuck timezones

31 notes


Text

big milestone - i enrolled in classes for the spring!

it's noteworthy because this is my third time attempting a first semester at college, but assuming i pass all of my current classes, this will be my first time actually continuing on to a second semester lmao

#third time IS the charm#'assuming i pass' for all my complaining i AM doing okay in school#إن شاء الله i will graduate#i'm even thinking about grad school#optimism is a hell of a drug#anyway!!!#gotta get back to... doing my homework before class starts lmfao#talk tag#college blogging

30 notes
