#ethical politics

Text

Please stop seeing politics as an identity and start seeing it as a collective means for change

#i am so tired of ppl trying to use 'theres no ethical consumption under capitalism' in regards to BDS#it's not about ethics it's about participating in a political strategy palestinians have spent YEARS promoting as a means for change#some things are in fact not about you#social justice#I/P#social issues#palestine#gaza#free palestine#free gaza#id in alt#overflowing trashcan

71K notes

Text

I do not now, nor have I ever, expected Gideon to take a principled stance on the forever war. I just don't think it matters to her. Ostensibly she's on her dad's side because her dad has been on a parenting kick lately, and the best she ever got from her mum was goodbye with a stay of execution. But if she were confronted with the ethics of the situation? Asked her opinion? I don't think she'd have one. She may be a prince, but at heart she's still the abused eighteen-year-old girl who fought tooth and nail to join the army just for a way out.

#we've got other characters to have political opinions#paul. coronabeth. ianthe. even pyrrha#gideon is as she has always been—in survival mode#she has the political consciousness of an eggplant emoji#and I don't think that's changing any time soon#I don't WANT that to change any time soon#she neither deserves nor is equipped for large-scale ethical responsibility#the locked tomb#gideon nav#kiriona gaia#ntn spoilers#nona the ninth

966 notes

Text

Once I stopped wheezing, I went looking for what inspired this tweet. Apparently anyone consistently ripping into Biden and telling anyone why he's trash is "voter suppression". Liberals have all lost their goddamn minds.

#anyone with a strong moral ethical and political opposition to genocide#and all the right-wing fuckery Biden has been doing for the three years before that#is now paid by russia and the gop#goddamn it where is MY check??#i really gotta stop doing this shit for free in this economy#free palestine#genocide joe#fuck joe biden#baby killer biden#us politics#white liberals#shit liberals say#white people#knee of huss#tinhats#stop cop city

875 notes

Quote

Wherever there are politics or economics no morality exists.

Friedrich Schlegel, Ideas

686 notes

Text

Trump may be more aesthetically repugnant by saying the unspoken out loud, but functionally I struggle to see a significant difference in their politics. Genocide Joe is supporting genocide and colonialism abroad, and at home he has reneged on basically every campaign promise (Roe, inflation, rent, etc.).

Biden:

nothing for LGBT protection

endorsed additional police funding

nothing to stop racist censorship/book bans

nothing to protect abortion

no student loan forgiveness

nothing for grocery or rent inflation

more immigrants detained and abused than under Trump

literally sending Israel more weapons and money for genocide, calls himself a Zionist

but oh no Trump :(

#like it's pure intellectual laziness at this point to think you can shortcut the ethics of voting D or R in this country#Trump and Biden are both fascists and if Dems gave a shit they could run someone else but they aren't so what does that tell you?#us politics#usa#chatter

615 notes

Text

(Don't) Incentivise Ethical Behaviour

In the ongoing project of rescuing useful thoughts off Xwitter, here's another hot take of mine, reheated:

"Being good for a reward isn’t being good---it’s just optimal play."

The quote comes from Luke Gearing and his excellent post "Against Incentive", to which I had been reacting.

My thread was mainly intended as a fulsome nodding along to one of Luke's points. It was posted in 2021, and extended in 2023 after Sidney Icarus posed a question to it. So it is two threads.

Here they are, properly paragraphed, hopefully more cleanly expressed:

+++

(Don't) Incentivise Ethical Behaviour

This is my main problem with mechanically rewarding pro-social play: a character's ethical choice is rendered mercenary.

As Luke Gearing puts it:

"Being good for a reward isn’t being good---it’s just optimal play."

Bear in mind that I'm not saying that pro-social play can't have rewarding outcomes for players. Any decision should have consequences in the fiction. It serves the ideal of portraying a living world to have these consequences rendered diegetic:

The townsfolk are thankful; the goblins remember your mercy; pamphlets appear, quoting from your revolutionary speech.

What I am saying is that awarding abstract mechanical benefits (XP tickets, metacurrency points, etc.) for ethical decisions stinks.

+

This is a subtle but absolutely essential distinction when it comes to portraying and exploring ethics / morality in roleplaying games.

Say you award bonus XP for sparing goblins.

Are your players making a decision based on how much they value life / the personhood of goblins? Or are they making a decision based on how much they want XP?

Say you declare: "If you help the villagers, the party receives a +1 attitude modifier in this village."

Are your players assisting the community because it is the right thing to do, or are they playing optimally, for a +1 effect?

+

XP As Currency

XP is the ur-example of incentive in TTRPGs. It began with D&D's gold-for-XP, and has never strayed far from that logic.

XP is still currency. Do things the GM / game designer wants you to do? Get paid.

Players use XP to buy better mechanical tools (levels, skills, abilities)---which they can then in turn use to better perform the actions that will net them XP.

Like using gold you stole from goblins to buy a sword, so you can now rob orcs.

I genuinely feel that such systems are valuable. They are models that illuminate the drives fuelling amoral / unethical behaviour.

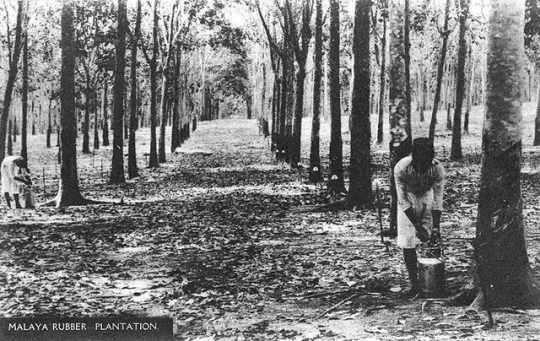

Material gain is the drive of land-grabbing and colonialism. Logger-barons and empires do get wealthier and more privileged, as a reward for their terrible actions.

+

If you want to present an ethical choice in play, congruent to our real-life dilemmas, there is value in asking:

"Hey, if you kill the goblins you can grab their treasure, and you will get richer. There's no reward for sparing their lives, except that they are thankful."

Which is another way of asking:

"Does your commitment to the ideal of preserving life outweigh the guaranteed material incentives for taking life?"

The ethical choice is the difficult choice, precisely because it involves---as it often does, in real life---sacrificing personal growth and gain. Doling out an XP bounty for doing the right thing makes the ethical choice moot.

"I as the player am making a mechanically optimal choice, but my character is making an ethical choice!"

A cop-out. Owning your cake and eating it too. The fictional fig-leaf of empathy over a calculated decision to make profit.

+

Sidney Icarus asks a question which I will quote here:

"... those who hold to their beliefs of good behaviour don't feel rewarded, and therefore feel punished. And that's not a good feeling.

It's an unpleasant experience to play a game where the righteous players are in rags, and the mercenary fucks have crowns and sceptres.

So, what's the design opportunity? How do we make doing the right thing feel pleasant without making it mercenary? Or, like reality, do we acknowledge that ethical acts are valuable only intrinsically and philosophically?

I have no idea how to reconcile this."

I would suggest that the above dichotomy---"righteous players in rags, mercs in crowns"---is true if property is recognised as the only true incentive.

+

Friends As Property

Modern games try to solve the righteous-players-in-rags "problem" in various ways. Virtue might not net you treasure or XP, but may give you:

Contact or ally slots, which you can fill in;

Relationship meters you can watch tick up;

Favour points you can cash in later;

etc.

How different are these mechanical incentives from treasure or XP, really?

Your relationships with supposedly living, breathing beings are transformed into abilities for your character: skills you can train; powers you can reliably proc. Pump your relationship score with the orc tribe until calling on them for reinforcements becomes a once-per-month ability.

Relationships become contracts. Regard becomes debt. Put your friend in an ally slot, so they become a tool.

If this is what you want play to be---totally fine! As stated previously, games say powerful things when they portray the engines of profit and property.

But I personally don't think game designers should design employer-employee relationships and disguise these as instances of mutual aid.

+

Friends As Friends

In the OSR campaigns I'm part of, I keep forgetting to record money. That is usually a big deal in such games, seeing as they are in the grand tradition of gold-for-XP.

In both games, my characters are still 1st-Level pukes, though it's been months.

I'm having a blast, anyway.

My GMs, by virtue of running organic, reactive worlds, have made play rewarding for me. NPCs / geographies remember the party's previous actions, and respond accordingly.

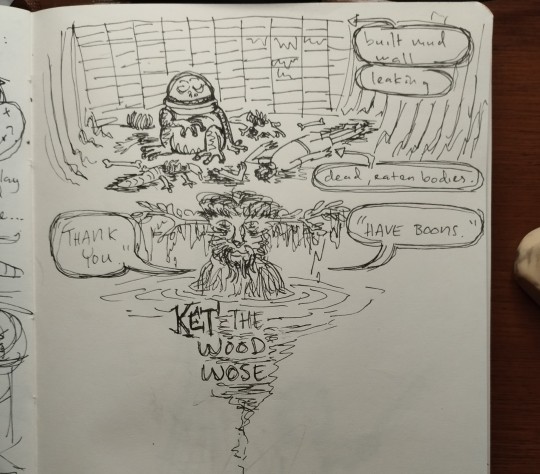

I've been given gills from a river god, after constant prayer;

I've befriended a village of monsters, where we now live;

I've parleyed with the witch of a whole forest, where we may now tread;

I've a boon from the touch of the wood wose, after answering his summons.

I cannot count on the wood wose showing up. He is a character in the world, not a power I control. Calling on the wood wose might become a whole adventure.

Little of this stuff is codified in my stats or abilities or equipment list. It is mostly all under "misc notes".

Diegetic growth. Narrative change that spirals into more play.

This is the design opportunity, to me:

How do we shape TTRPG play culture in such a way that the "misc notes" gaps in our games are as fun as the systemised bits? What kinds of orientation tools must we provide? What should we say, in our advice sections?

+

A Note About Trust

The reason why it is so hard to imagine play beyond conventional incentive structures has a lot to do with trust.

Sidney again:

One of the core issues is the "low trust table". I'm not designing just for myself but for my audience. For a product. How much can I ask purchasers and their friends to codesign this part with me?

Nerds love numbers and things we can write down in inventories or slots because they are sureties. We've learned to fear fiat or player discretion, traumatised as we are by Problem GMs or That Guys.

The reason why the poverty in Sidney's hypothetical ("righteous players are in rags") sounds so bad is because in truth it represents risk at the game table. If you don't participate in the mechanics legible to your ruleset (the XP and gear to do more game things), you risk gradually being excluded from play.

You have no assurance your fellow players will know how to hold space for you; be considerate; work together to portray a living world where NPCs react in meaningful ways---in ways that will be fun and rewarding for everybody playing.

You are giving up the guarantee of mechanical relevance for the possibility of fun interactions and creative social play.

+

The "low trust table" is learned behaviour: the cruft of gamer culture and trauma.

When I game with folks new to TTRPGs, they tend to be decent, considerate. I think there's enough anecdotal evidence from folks playing with school kids / newcomers / etc to suggest my experience is not unique.

If the "low trust table" is indeed learned behaviour, it can be unlearned.

Which rules conventions, now part of the hobby mainstream, were the result of designers designing defensively---shadowboxing against terrible players and the spectre of "unfairness"?

How can we "undesign" such conventions?

Lack of trust is a problem that we have to address in play culture, not rulesets. You cannot cook a dish so good it forces diners to have good table manners.

+

This is too long already. I'll end with an observation:

Elfgames are not praxis, but doesn't this specific dilemma in the microcosm of our silly elfgames ultimately mirror real-world ethics?

To be moral is to trust in a better world; to be amoral / immoral is to hedge against the guarantee of a worse one.

+++

Further Reading

Some words from around the TTRPG community about incentive and advancement in games:

+

However, the reason there is a big debate about this is that behavioural incentives in games clearly do work, either entirely or at various levels. This applies outside gaming, as well. Why do advertising companies and retail business use "rewards" structures to convince people to buy more of their products? Why do people chase after "Likes" on social media?

A comment by Paul_T to "A Hypothesis on Behavioral Incentives"

from a discussion on Story-Games.com

+

the structure and symbolism of the D&D game align with certain structures and values of patriarchy. The game is designed to last infinitely by shifting goalposts of character experience in terms of increasing amounts of gold pieces acquired; this resembles the modus operandi of phallic desire which seeks out object after object (most typically, women) in order to quench a lack which always reasserts itself.

D&D's Obsession With Phallic Desire

from Traverse Fantasy

+

In short, my feeling is that rewarding players with character improvement in return for achieving goals in a specific way impedes some of the key strengths of TTRPGs for little or no benefit in return.

Incentives

from Bastionland

+

When good deeds arise naturally out of the players' choices, especially when players rejected other options that were more beneficial to them, it is immensely satisfying. Far more than if players are just assumed to be heroic by default. It gives agency and meaning to player choice.

Make Players Choose To Be Kind

from Cosmic Orrery

+

Much has been made about 1 GP = 1 XP as the core gameplay loop driver of TSR D+D. But XP for gold retrieved also winds up being something of a de facto capitalistic outlook as well. Success is driven by accumulation of individual wealth -- by an adventuring company, even! So what's a new framework that can be used for underpinning a leftist OSR campaign?

A Spectre (7+3 HD) Is Haunting the Flaeness: Towards a Leftist OSR

from Legacy of the Bieth

+

Growth should be tied to a specific experience occurring in the fiction.

It is more important for a PC to grow more interesting than more skilled or capable.

PCs experience growth not necessarily because they’ve gotten more skill and experience, but because they are changed in a significant way.

Cairn FAQ

from Cairn RPG / Yochai Gal

+++

Thank you Ram for the Story-Games.com deep cut!

( Image sources:

https://knowyourmeme.com/memes/neuron-activation

https://en.wikipedia.org/wiki/Majesty:_The_Fantasy_Kingdom_Sim

https://www.economist.com/sites/default/files/special-reports-pdfs/10490978.pdf

https://varnam.my/34311/untold-tales-of-indian-labourers-from-rubber-plantations-during-pre-independence-malaya/

https://nobonzo.com/ )

+

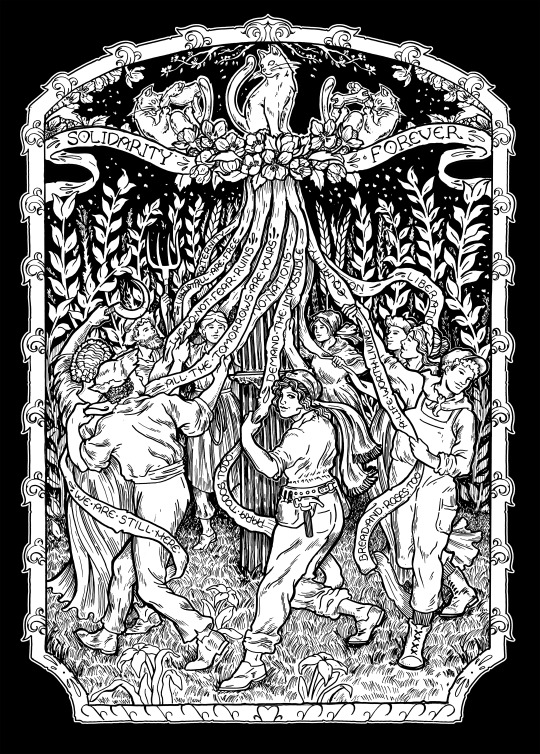

PS: used with permission from Sandro, art by Maxa', a reminder to self:

247 notes

Text

The Ontario Divisional Court recently released its decision on Jordan Peterson’s challenge of the College of Psychologists of Ontario’s order that he complete a coaching program on professionalism in public statements. The Court’s decision, which upheld the College’s order, has generated a fair bit of commentary in the media, much of which argues that the order and decision are assaults on Peterson’s freedom of expression, or will empower “woke” organizations to curtail individual freedoms.

However, I look at the matter through a different lens. While I may have the right to freedom of expression, how I exercise that right may have implications. For example, at a very basic level, if someone expresses a strong opinion on one side of a subject, they may risk relationships with family or friends who have strong opinions on the other side. In the case of Jordan Peterson, the potential implication is that the manner in which he chooses to exercise his right to freedom of expression may impact the privilege of being licensed as a clinical psychologist by the College of Psychologists of Ontario.

License to practice – A privilege not a right

You may note that I referred to being licensed as a privilege; that is by design, as there is no right under the Charter to practice any particular profession. Instead, being licensed to practice any particular profession is a privilege, and many would argue that it imposes certain responsibilities upon those who are licensed. Further, as a self-regulated profession, the College of Psychologists of Ontario has a mandate to regulate the profession in the public interest, and to that effect it has developed Standards of Professional Conduct and adopted the Canadian Code of Ethics for Psychologists.

This Code of Ethics provides that “psychologists acknowledge that all human beings have a moral right to have their innate worth as human beings appreciated,” without regard to factors such as ethnicity, religion, sex, gender, sexual orientation, “or any other preference or personal characteristic, condition, or status.” The Code also provides that “psychologists do not engage in unjust discrimination based on such factors and promote non-discrimination in all of their activities,” and that psychologists must “Not engage publicly … in degrading comments about others…”.

Tagging: @politicsofcanada

462 notes

Text

In the darkest chapter of German history, during a time when incited mobs threw stones into the windows of innocent shop owners and women and children were cruelly humiliated in the open, Dietrich Bonhoeffer, a young pastor, began to speak publicly against the atrocities.

After years of trying to change people’s minds, Bonhoeffer came home one evening and his own father had to tell him that two men were waiting in his room to take him away.

In prison, Bonhoeffer began to reflect on how his country of poets and thinkers had turned into a collective of cowards, crooks and criminals. Eventually he concluded that the root of the problem was not malice, but stupidity.

In his famous letters from prison, Bonhoeffer argued that stupidity is a more dangerous enemy of the good than malice, because while “one may protest against evil; it can be exposed and prevented by the use of force, against stupidity we are defenseless. Neither protests nor the use of force accomplish anything here. Reasons fall on deaf ears.”

Facts that contradict a stupid person’s prejudgment simply need not be believed and when they are irrefutable, they are just pushed aside as inconsequential, as incidental. In all this, the stupid person is self-satisfied and, being easily irritated, becomes dangerous by going on the attack.

For that reason, greater caution is called for when dealing with a stupid person than with a malicious one. If we want to know how to get the better of stupidity, we must seek to understand its nature.

This much is certain: stupidity is in essence not an intellectual defect but a moral one. There are human beings who are remarkably agile intellectually yet stupid, and others who are intellectually dull yet anything but stupid.

The impression one gains is not so much that stupidity is a congenital defect but that, under certain circumstances, people are made stupid or rather, they allow this to happen to them.

People who live in solitude manifest this defect less frequently than individuals in groups. And so it would seem that stupidity is perhaps less a psychological than a sociological problem.

It becomes apparent that every strong upsurge of power, be it of a political or religious nature, infects a large part of humankind with stupidity. Almost as if this is a sociological-psychological law where the power of the one needs the stupidity of the other.

The process at work here is not that particular human capacities, such as intellect, suddenly fail. Instead, it seems that under the overwhelming impact of rising power, humans are deprived of their inner independence and, more or less consciously, give up an autonomous position.

The fact that the stupid person is often stubborn must not blind us to the fact that he is not independent. In conversation with him, one virtually feels that one is dealing not at all with him as a person, but with slogans, catchwords, and the like that have taken possession of him.

He is under a spell, blinded, misused, and is abused in his very being. Having thus become a mindless tool, the stupid person will also be capable of any evil – incapable of seeing that it is evil.

Only an act of liberation, not instruction, can overcome stupidity. Here we must come to terms with the fact that in most cases a genuine internal liberation becomes possible only when external liberation has preceded it. Until then, we must abandon all attempts to convince the stupid person.

Bonhoeffer was executed for his involvement in a plot against Adolf Hitler at dawn on 9 April 1945 at Flossenbürg concentration camp, just two weeks before soldiers from the United States liberated the camp.

—Dietrich Bonhoeffer’s Theory of Stupidity

#politics#dietrich bonhoeffer#republicans#theory of stupidity#donald trump#dunning kruger effect#pedagogy#stupidity#germany#conspiracy theorists#mob mentality#interesting#ethics

846 notes

Text

Nothing worse than getting into a new subject and having no one to discuss it with

#self education#nerdy girls#philosophy#psychology#neurology#spirituality#religion#ethics#esoteric#literature#pop culture#video essay#art#art history#history#science#astronomy#quantum physics#politics#documentary#aa

139 notes

Text

I don't think "I think genocide is bad" should be controversial

No, not when it's those people

No, not even in those circumstances

Genocide is always bad

It's bad when it's the Palestinians

It's bad when it's the Armenians

It's bad when it's the Uyghurs

It's bad when it's in the Congo

It's bad when it's in Ethiopia

(pretty much all of the ones I listed are still happening right now, as best I can check at 4am. please go do research about them. And yes it's bad when it's the Jewish people, but I was trying to list current genocides)

If the "solution" a group has come up with for a dispute over territory or religion or any other reason is genocide/ethnic cleansing, then it's not a solution, it's a crime against humanity

Genocide is bad always

Ethnic cleansing is bad always

Genocide should not be allowed by the world at large

It should definitely not be supported or funded by other governments (I'm looking at you America and Russia)

It should be condemned by all governments and it should not be allowed

There is not a single "reasonable" or "justified" genocide

"but if we didn't do this they'd do it to us!"

And that would also be bad and wrong and shouldn't be allowed. What's your point?

Genocide is bad

Genocide is wrong

It cannot be justified in any circumstances

This should not be controversial

142 notes

Text

125 notes

Text

"Major AI companies are racing to build superintelligent AI — for the benefit of you and me, they say. But did they ever pause to ask whether we actually want that?

Americans, by and large, don’t want it.

That’s the upshot of a new poll shared exclusively with Vox. The poll, commissioned by the think tank AI Policy Institute and conducted by YouGov, surveyed 1,118 Americans from across the age, gender, race, and political spectrums in early September. It reveals that 63 percent of voters say regulation should aim to actively prevent AI superintelligence.

Companies like OpenAI have made it clear that superintelligent AI — a system that is smarter than humans — is exactly what they’re trying to build. They call it artificial general intelligence (AGI) and they take it for granted that AGI should exist. “Our mission,” OpenAI’s website says, “is to ensure that artificial general intelligence benefits all of humanity.”

But there’s a deeply weird and seldom remarked upon fact here: It’s not at all obvious that we should want to create AGI — which, as OpenAI CEO Sam Altman will be the first to tell you, comes with major risks, including the risk that all of humanity gets wiped out. And yet a handful of CEOs have decided, on behalf of everyone else, that AGI should exist.

Now, the only thing that gets discussed in public debate is how to control a hypothetical superhuman intelligence — not whether we actually want it. A premise has been ceded here that arguably never should have been...

Building AGI is a deeply political move. Why aren’t we treating it that way?

...Americans have learned a thing or two from the past decade in tech, and especially from the disastrous consequences of social media. They increasingly distrust tech executives and the idea that tech progress is positive by default. And they’re questioning whether the potential benefits of AGI justify the potential costs of developing it. After all, CEOs like Altman readily proclaim that AGI may well usher in mass unemployment, break the economic system, and change the entire world order. That’s if it doesn’t render us all extinct.

In the new AI Policy Institute/YouGov poll, the “better us [to have and invent it] than China” argument was presented five different ways in five different questions. Strikingly, each time, the majority of respondents rejected the argument. For example, 67 percent of voters said we should restrict how powerful AI models can become, even though that risks making American companies fall behind China. Only 14 percent disagreed.

Naturally, with any poll about a technology that doesn’t yet exist, there’s a bit of a challenge in interpreting the responses. But what a strong majority of the American public seems to be saying here is: just because we’re worried about a foreign power getting ahead, doesn’t mean that it makes sense to unleash upon ourselves a technology we think will severely harm us.

AGI, it turns out, is just not a popular idea in America.

“As we’re asking these poll questions and getting such lopsided results, it’s honestly a little bit surprising to me to see how lopsided it is,” Daniel Colson, the executive director of the AI Policy Institute, told me. “There’s actually quite a large disconnect between a lot of the elite discourse or discourse in the labs and what the American public wants.”

-via Vox, September 19, 2023

#united states#china#ai#artificial intelligence#superintelligence#ai ethics#general ai#computer science#public opinion#science and technology#ai boom#anti ai#international politics#good news#hope

199 notes

Text

I'm really not a villain enjoyer. I love anti-heroes and anti-villains. But I can't see fictional evil separate from real evil. As in not that enjoying dark fiction means you condone it, but that all fiction holds up some kind of mirror to the world as it is. Killing innocent people doesn't make you an iconic lesbian girlboss it just makes you part of the mundane and stultifying black rot of the universe.

"But characters struggling with honour and goodness and the egoism of being good are so boring." Cool well some of us actually struggle with that stuff on the daily because being a good person is complicated and harder than being an edgelord.

Sure you can use fiction to explore the darkness of human nature and learn empathy, but the world doesn't actually suffer from a deficit of empathy for powerful and privileged people who do heinous stuff. You could literally kill a thousand babies in broad daylight and they'll find a way to blame your childhood trauma for it as long as you're white, cisgender, abled and attractive, and you'll be their poor little meow meow by the end of the week. Don't act like you're advocating for Quasimodo when you're just making Elon Musk hot, smart and gay.

#this is one of the reasons why#although i would kill antis in real life if i could#i also don't trust anyone who identifies as 'pro-ship'#it's just an excuse to shut down legitimate ethical questions and engaging in honest self-reflective media consumption and critique#art doesn't exist in a vacuum#it's a flat impossibility for it not to inform nor be informed by real world politics and attitudes#because that's what it means to be created by human hands#we can't even make machine learning thats not just human bias fed into an algorithm#if the way we interact with art truly didn't influence anything then there would be no value in it#just because antis have weaponized those points in the most bad faith ways possible#doesn't mean you can ignore them in good faith#anyway fandom stans villains because society loves to defend and protect abusers#it's not because you get the chance to be free and empathetic and indulge in your darkness and what not#it's just people's normal levels of attachment to shitty people with an added layer of justification for it#this blog is for boring do-gooder enjoyers only#lol#knee of huss#fandom wank#media critique#pop culture#fandom discourse

189 notes

Quote

True politeness is a polish, not a varnish; and should rather be acquired by observation than admonition.

Mary Wollstonecraft, Original Stories from Real Life

144 notes

Text

Dick and Jason would totally have a debate about reading. Except it wouldn’t be about whether Dick could read or not; it would be about whether classical literature is better than philosophical texts, and they would hate each other’s picks, because Jason would say that philosophical texts are a bunch of phony ramblings from old men who had nothing better to do, and Dick would get mad and say classical literature is only popular because it was too messed up for its time period, which is the only reason it’s remembered, not because it’s actually good. 30 mins later, Tim walks into the room to find Jason and Dick not even a centimeter apart, screaming into each other’s faces, takes a slow sip of coffee, and says “programming books are the most fun to read.”

Dick and Jason turn their heads so slowly to look at him that with each movement their neck muscles positively creak with pure revulsion for him.

Later Jason sends half a dozen photos of Tim’s most awkward poses to the Titans and Bernard, and Dick sidles up to Bruce and convinces him that Tim’s lack of sleep is affecting him so much he’s not functioning properly, so Bruce takes matters into his own hands.

#Tim was not happy about Jason or Dick#dick would have billions of cute photos of his siblings for him to coo over#only his siblings realize the blackmail potential of these photos#jason stole the photos and sent them because dick takes the worst photos of people and doesn’t realize that they’re not cute#dick would absolutely be into philosophy#the art of war and the prince by Machiavelli and ethics by Aristotle and the republic by plato#he knows how to manipulate people and he knows how to run a government canonically so he’d be very much into reading works#about moral and political dilemmas#dick grayson#nightwing#jason todd#red hood#tim drake#red robin#batfam

288 notes

Text

oh why disrobed truth people eke solemn lies as truth that's your only truth

sunshine is laughing in my balcony

you arm him about aqua sarcophagi

i accidentally let the refrigerator die

jews are arabs are jews slew gardyloo grimy fremen at their borders

your honeycomb heart logarithmic

picks a thousand in a room of thousand

not i or moi thousand one minus one

kill or be killed pressroom briefings chinese first-class red tie

you're beautiful no one interprets you

you're gorgeous and there goes holi

a handful of halogen love potions currency speculation tipsy

sea and spring and chrysanthemums

someone drowning herself with you as you

with your reflection your planetary influence your impaired glutes

tax avoidance for coke zero coitus

someone is walking like a bazaar with russia eyes with an individualistic salt

#poetry#dhrit#spilled ink#inkskinned#politics#war#geopolitics#social#culture#economy#love#life#writerscreed#poetryriot#poeticstories#poems on tumblr#art#ideology#ethics#sociopolitical#nature#environment#light#beauty#popular#march#emotions#apology#meaning

56 notes
